What Is a Technical SEO Audit?

A technical SEO audit is a detailed analysis of the technical aspects of a website related to search engine optimization.

The primary goal of a technical site audit for SEO is to make sure search engines like Google can crawl, index, and rank pages on your site.

You can find and fix technical issues by regularly auditing your website. Over time, that will improve your site’s performance in search engines.

How to Perform a Technical SEO Audit

You’ll need two main tools to perform a technical site audit:

- Google Search Console

- A crawl-based tool, like Semrush’s Site Audit

If you haven’t used Search Console before, read our beginner’s guide to learn how to set it up. We will discuss the tool’s various reports below.

And if you’re new to Site Audit, you can sign up for free and start within minutes.

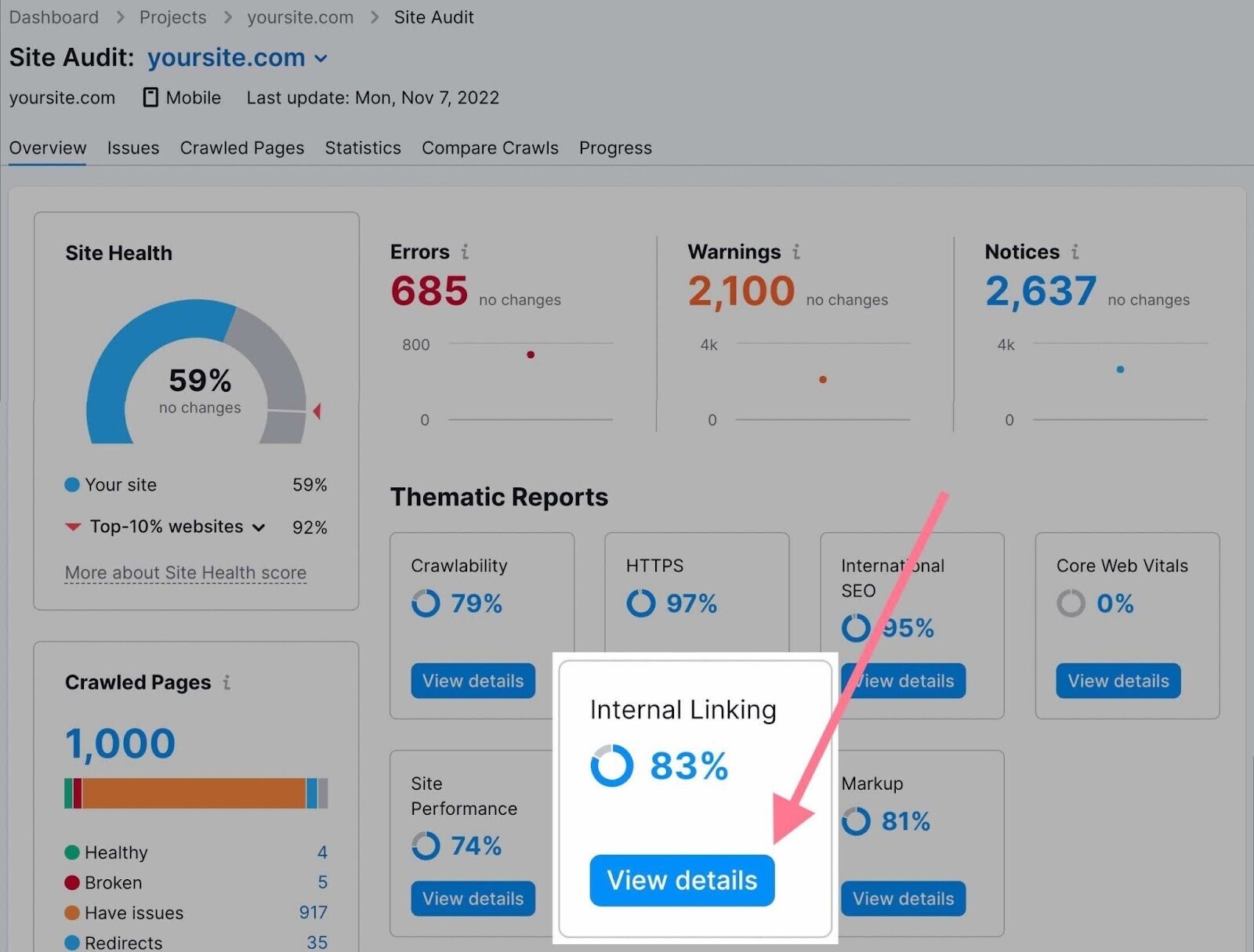

The Site Audit tool scans your website and provides data about all the pages it’s able to crawl. The report it generates will help you identify a wide range of technical SEO issues.

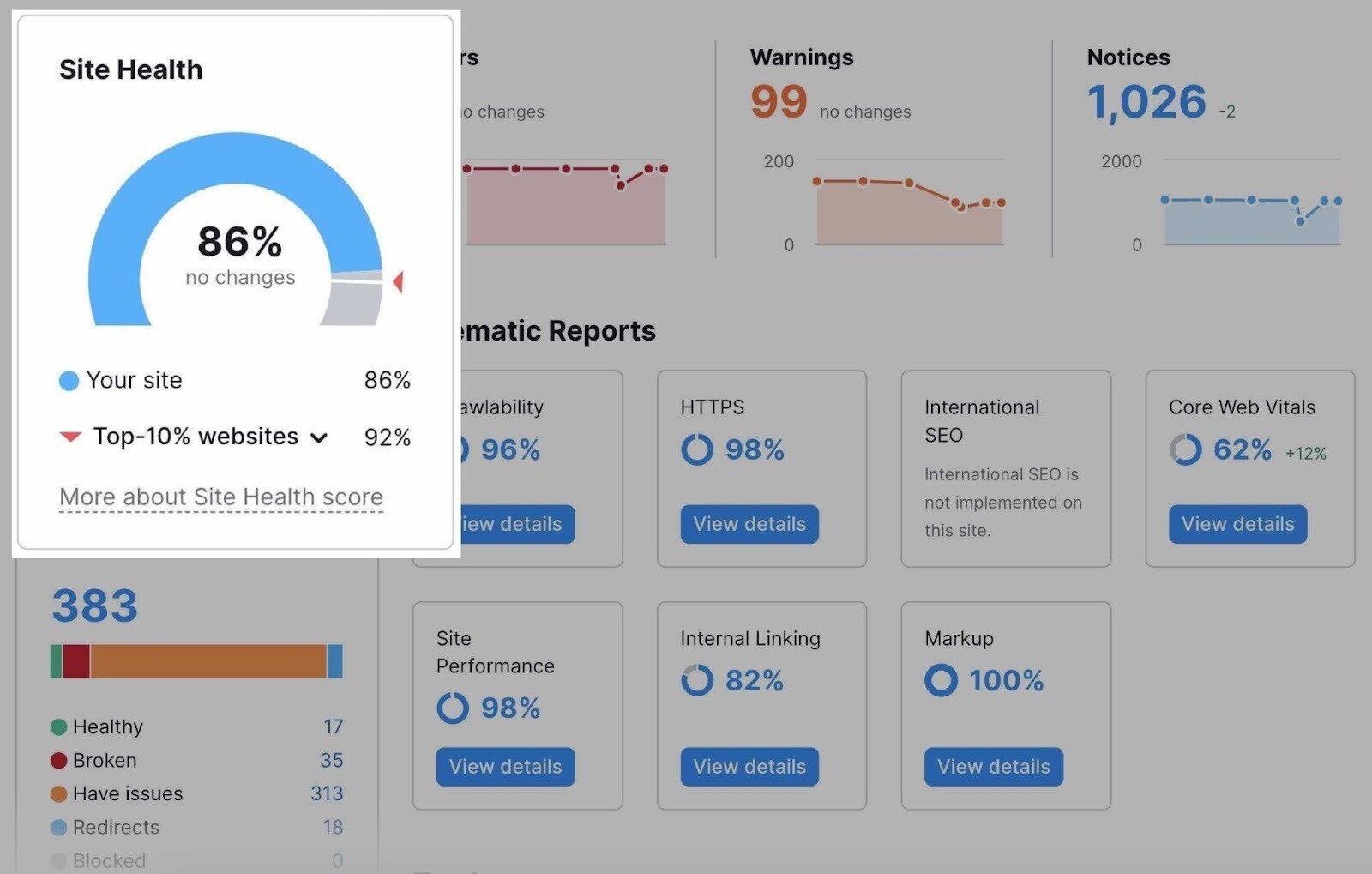

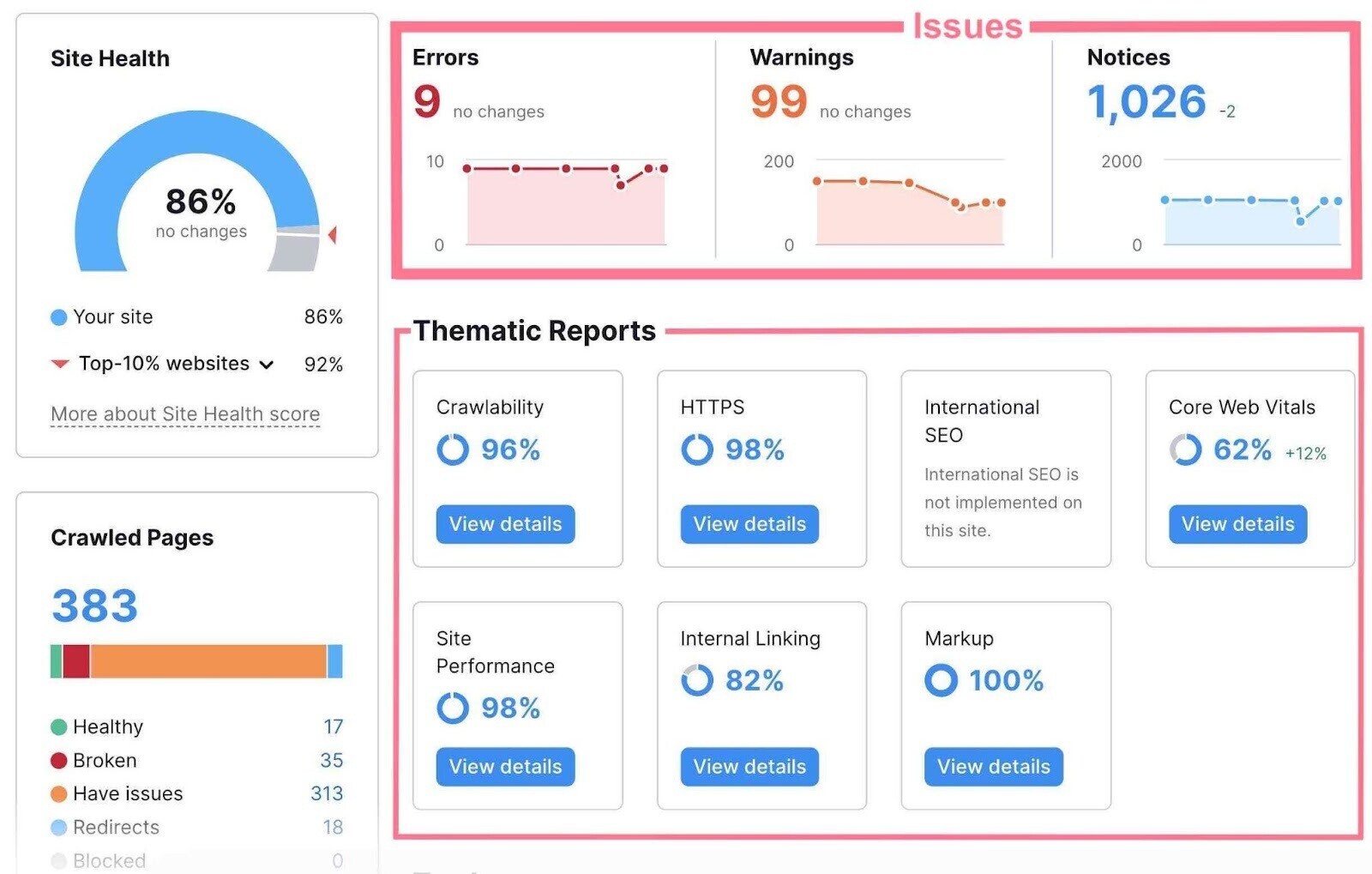

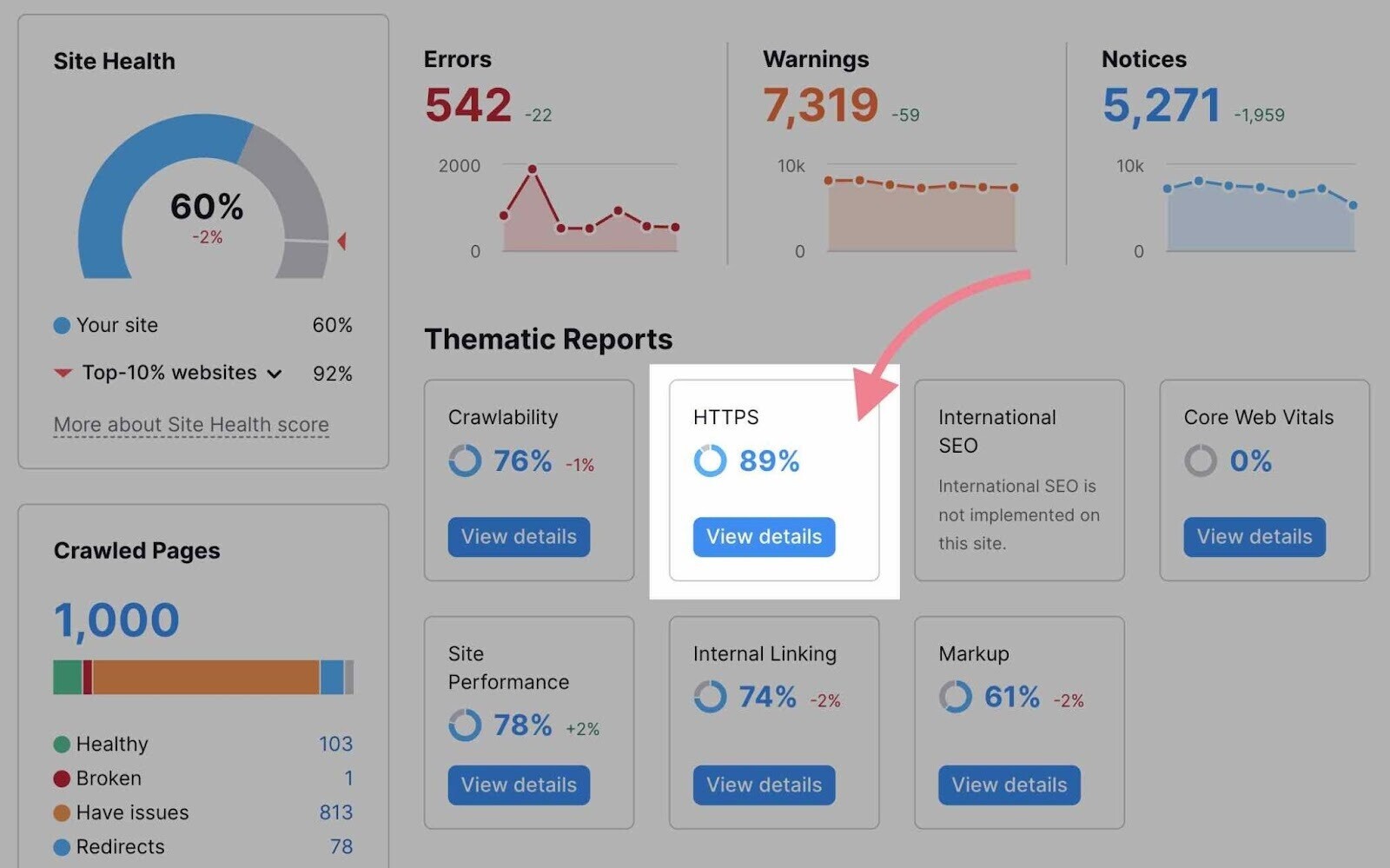

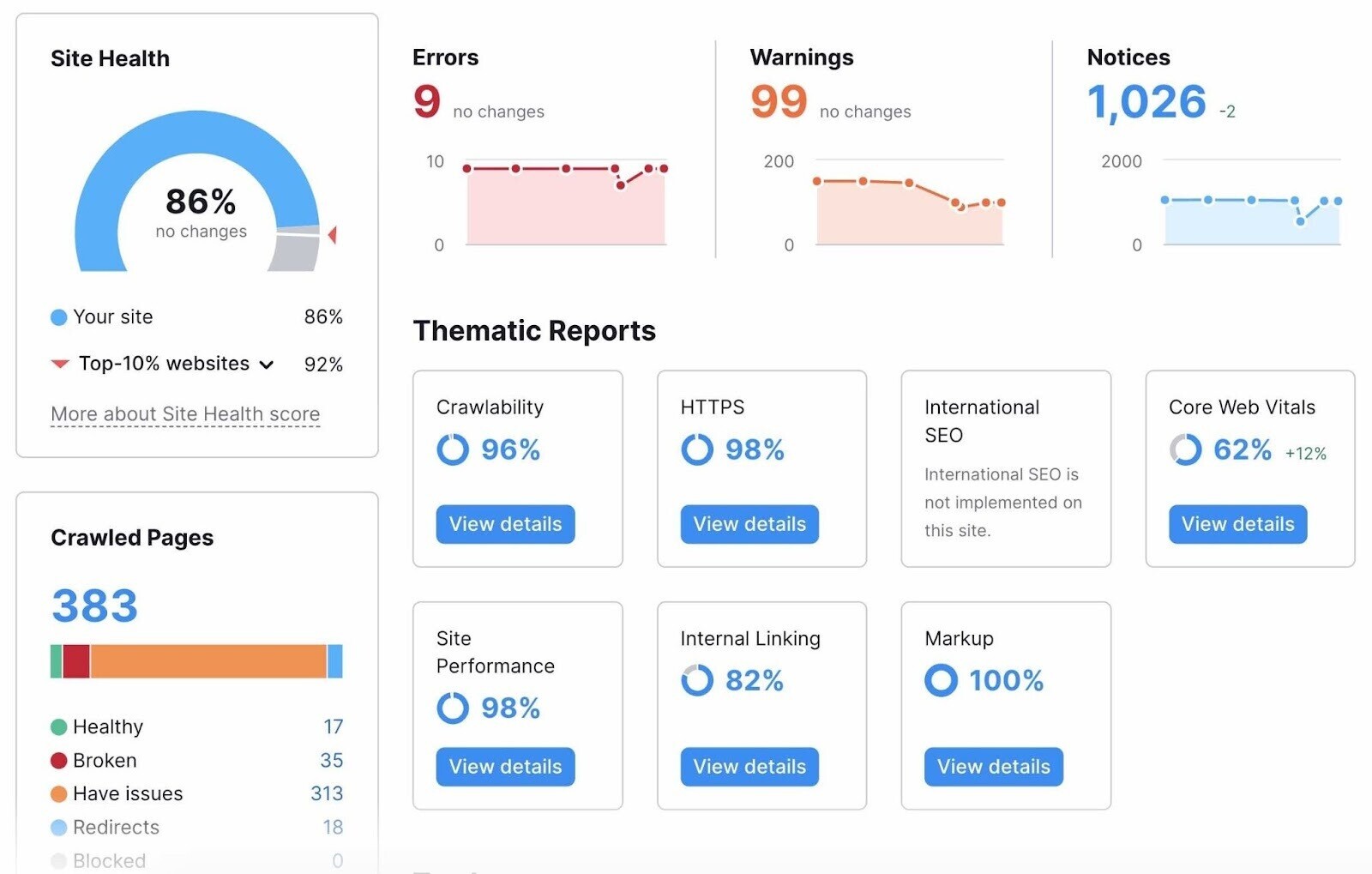

The overview looks like this:

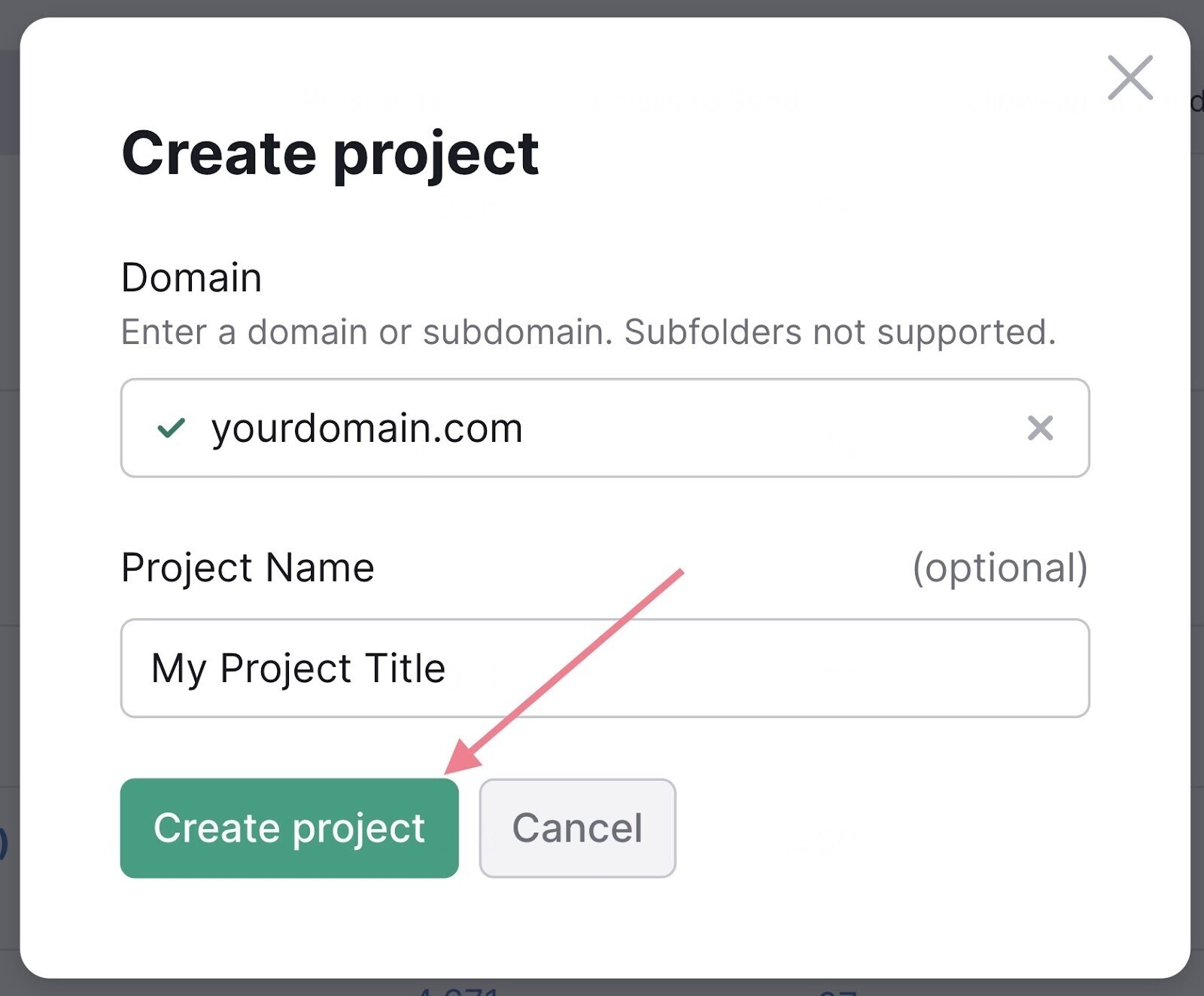

To set up your first crawl, you’ll need to create a project first.

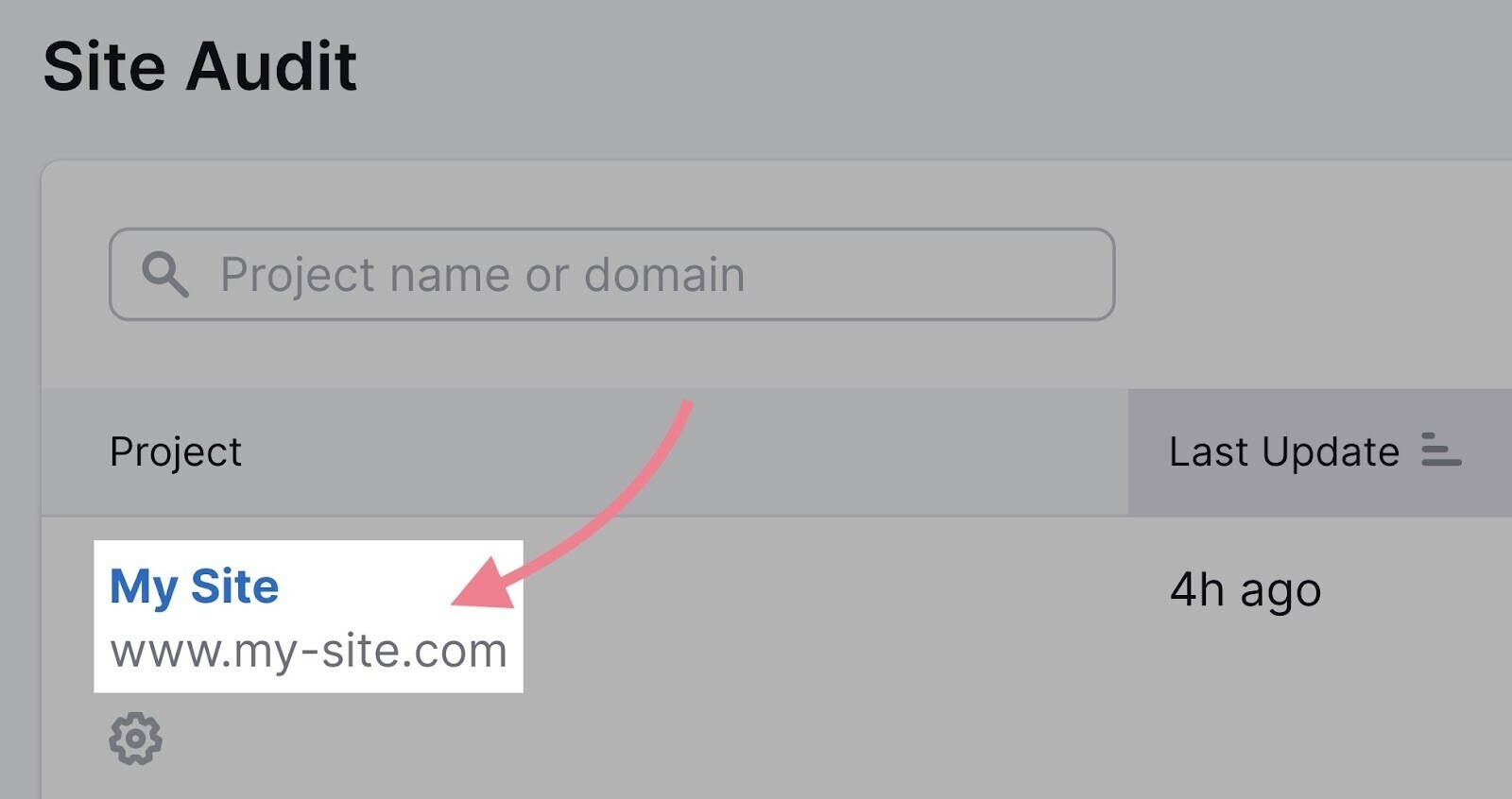

Next, head to the Site Audit tool and select your domain.

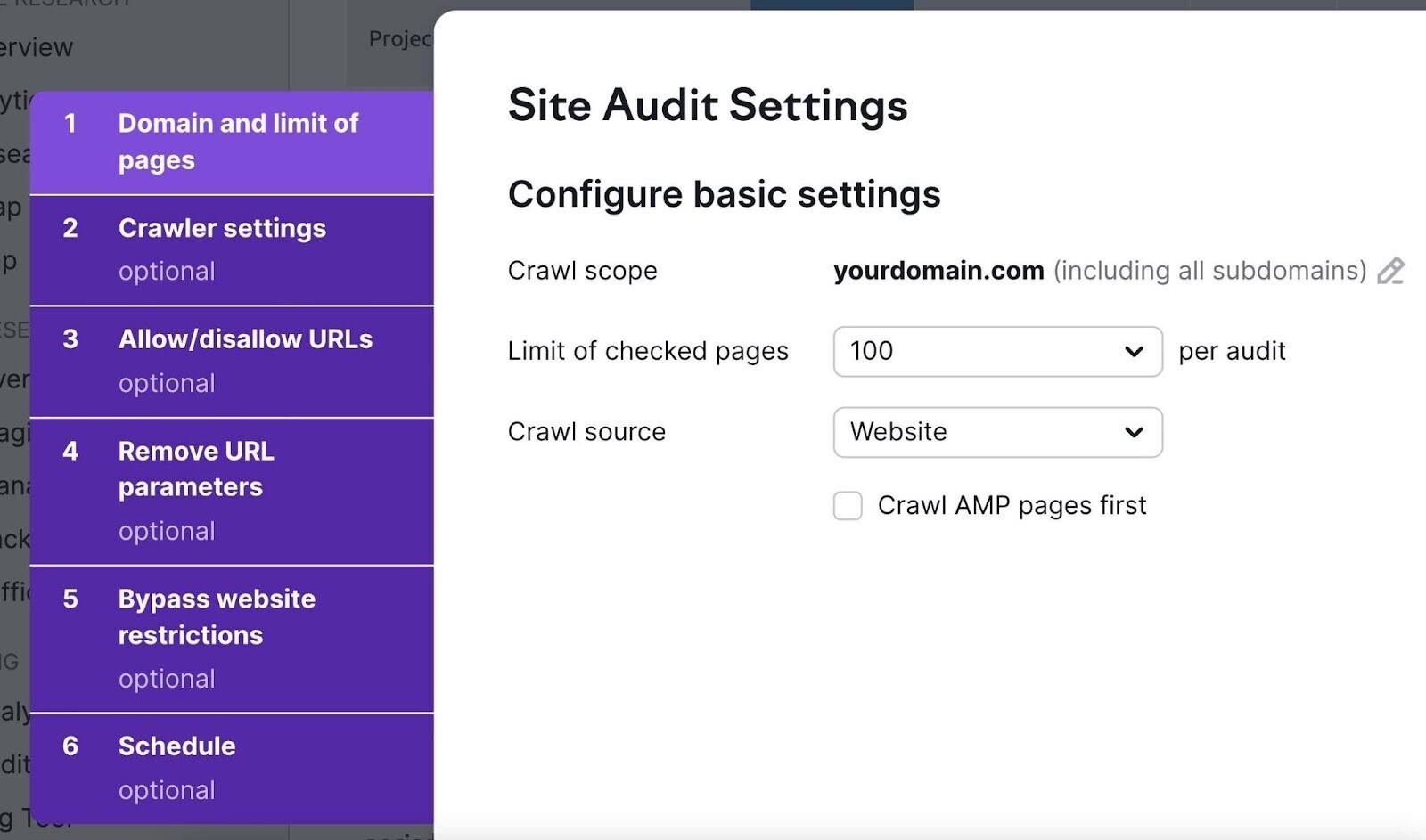

The “Site Audit Settings” window will pop up. Here, you’ll configure the basics of your first crawl. You can follow this detailed setup guide to get through the settings.

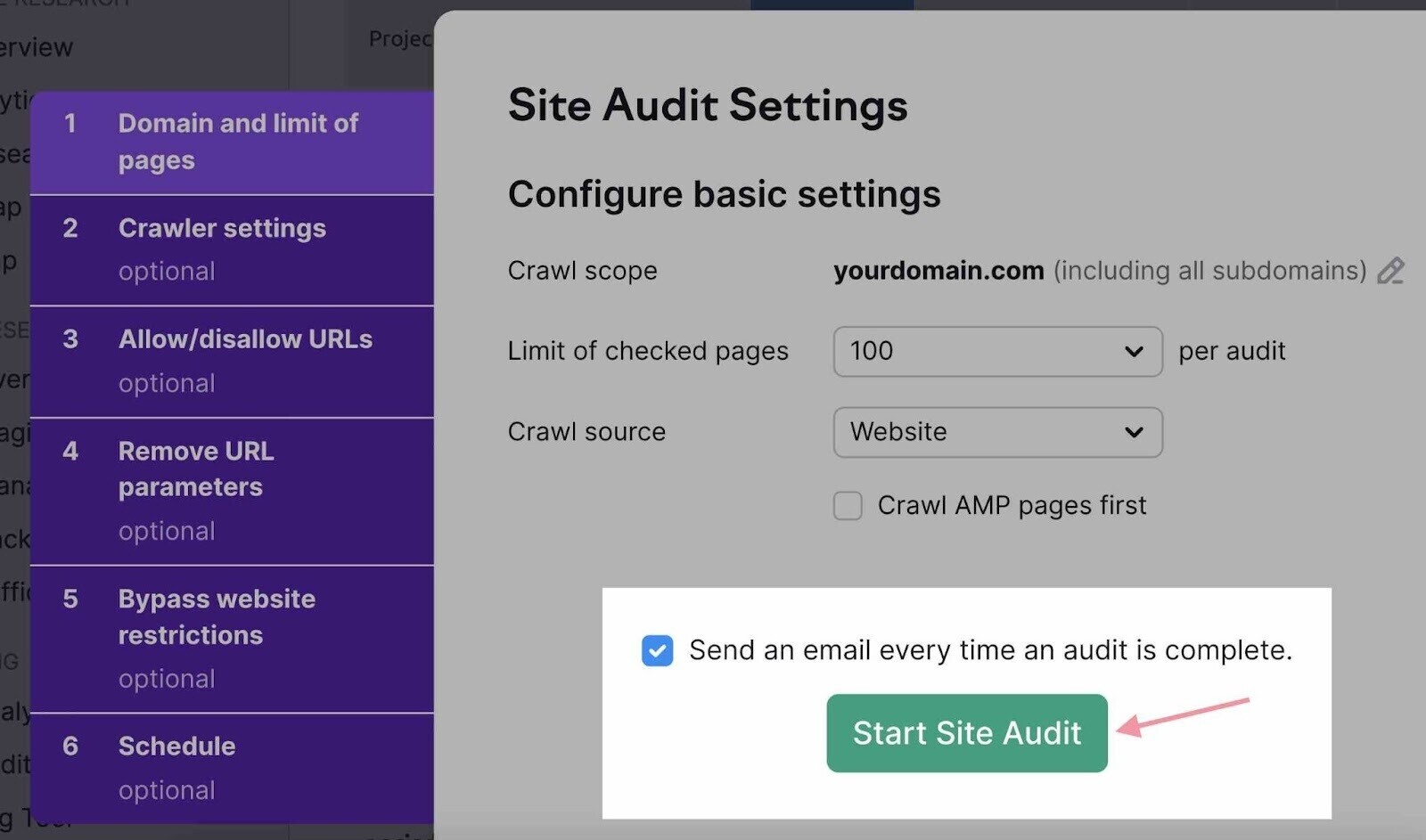

Finally, click the “Start Site Audit” button at the bottom of the window.

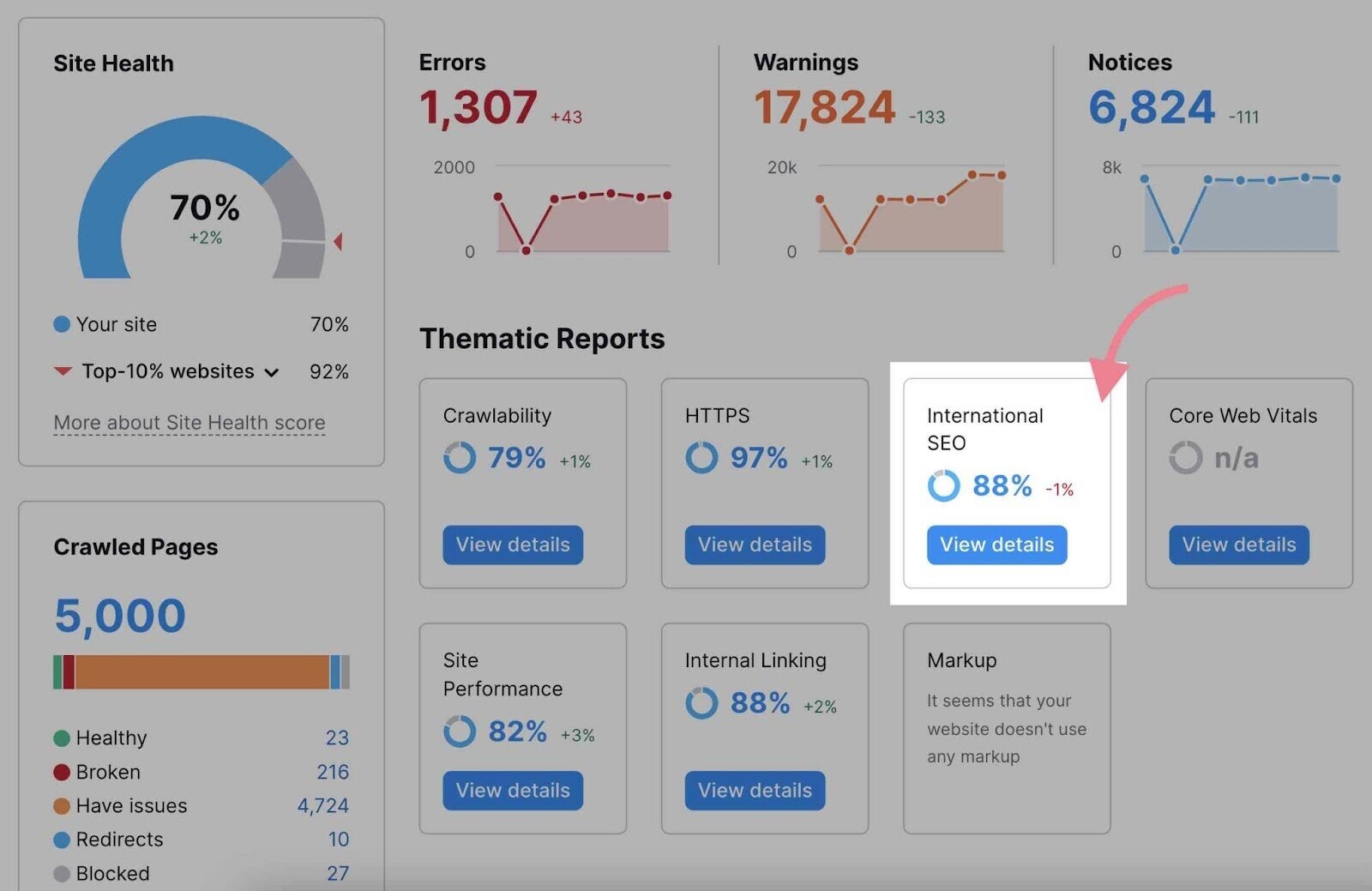

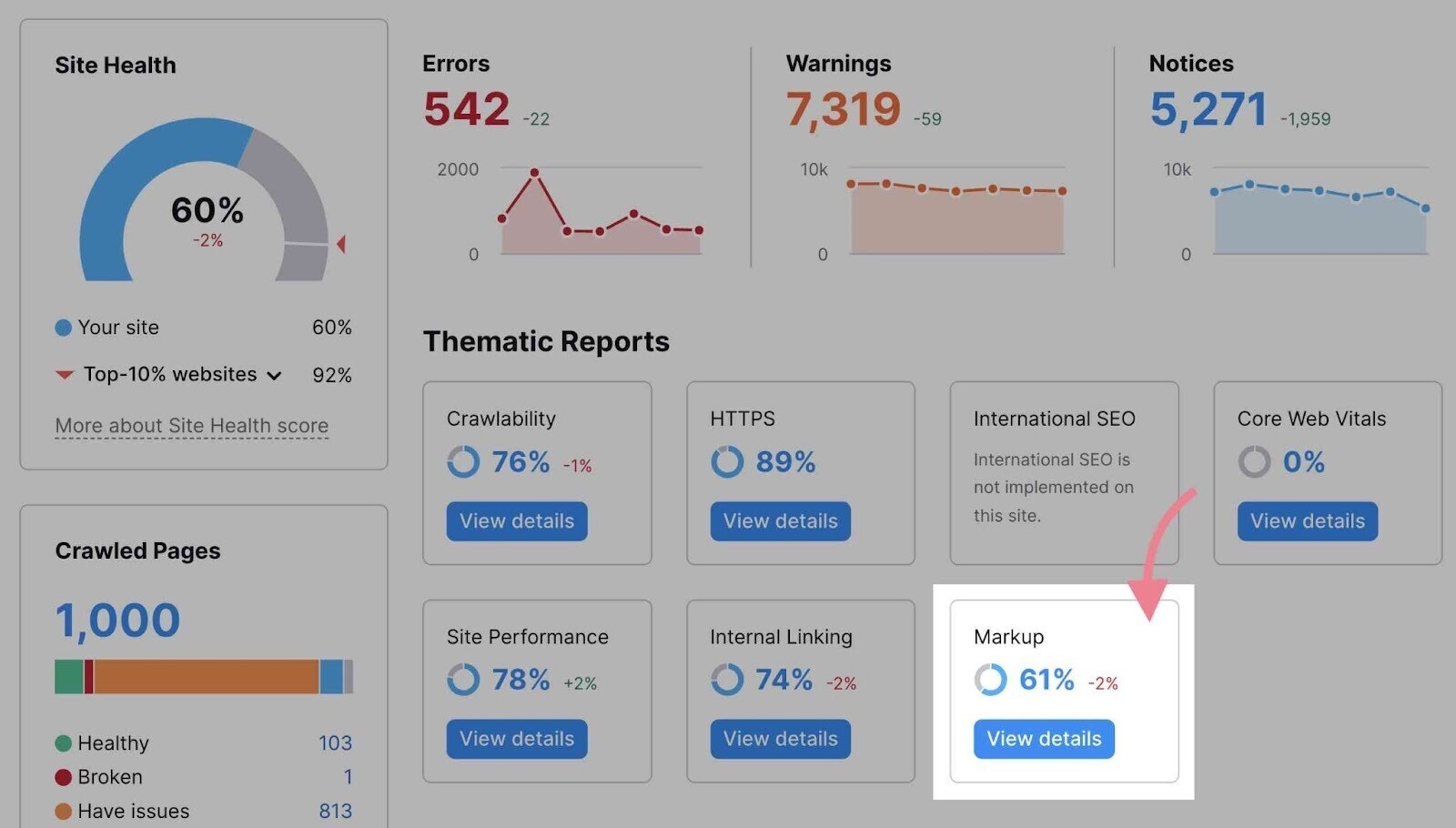

After the tool crawls your site, it generates an overview of your site’s health with the Site Health metric.

This metric grades your website health on a scale from 0 to 100. And tells you how you compare with other sites in your industry.

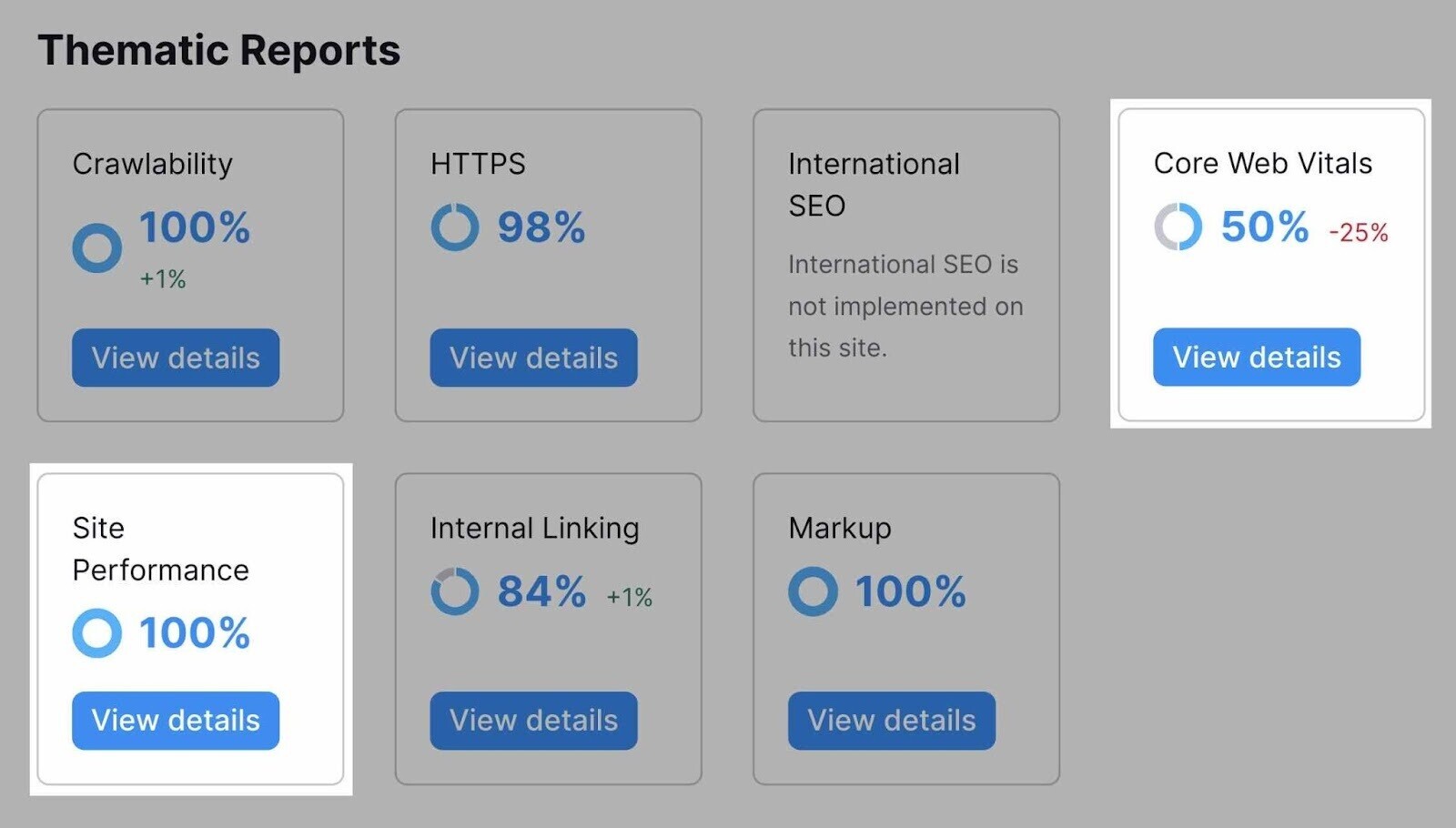

You’ll also get an overview of your issues by severity (through the “Errors,” “Warnings,” and “Notices” categories). Or you can focus on specific areas of technical SEO with the “Thematic Reports.” (We’ll get to those later.)

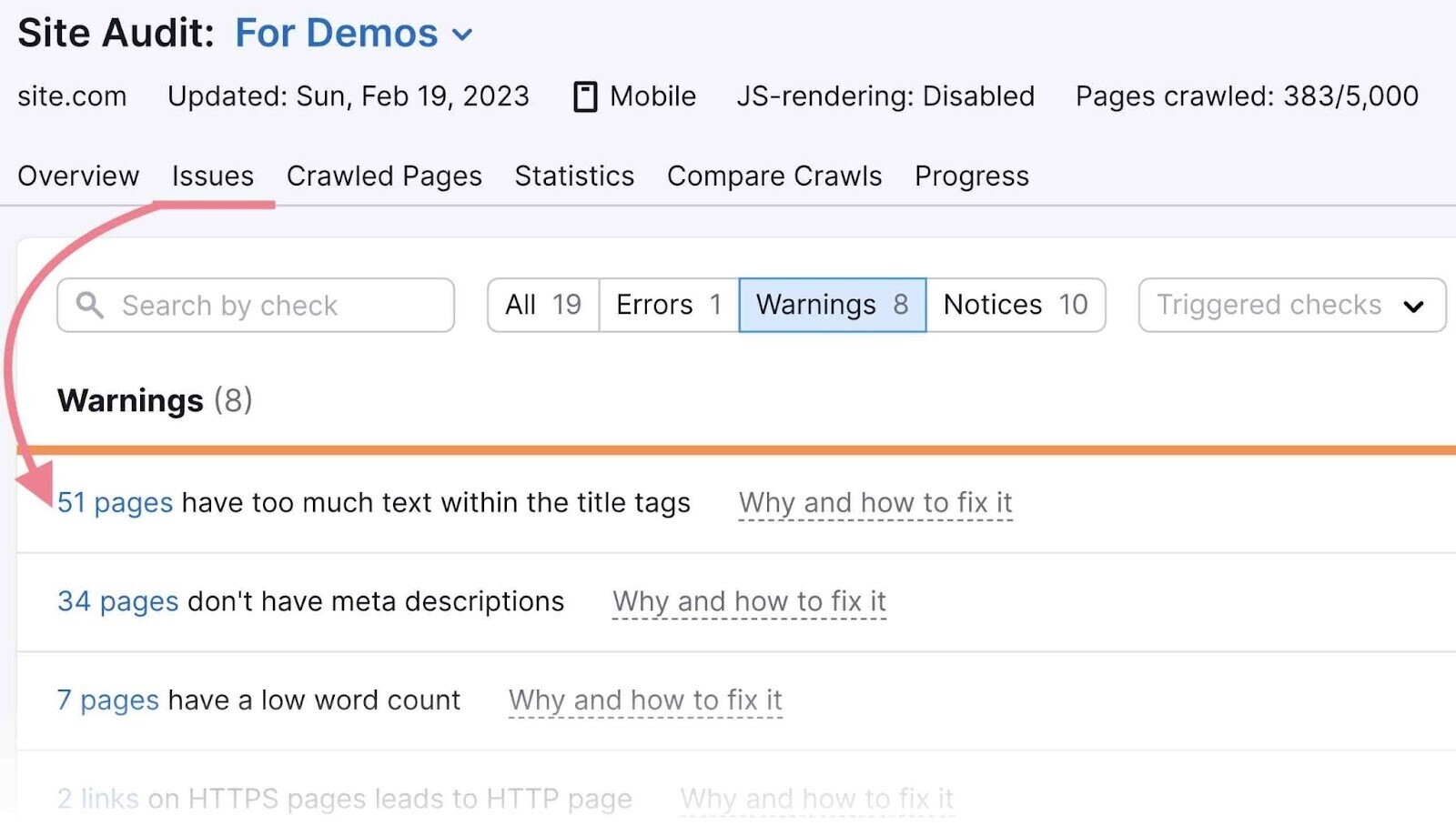

Finally, switch to the “Issues” tab. There, you’ll see a complete list of all the issues. Along with the number of affected pages.

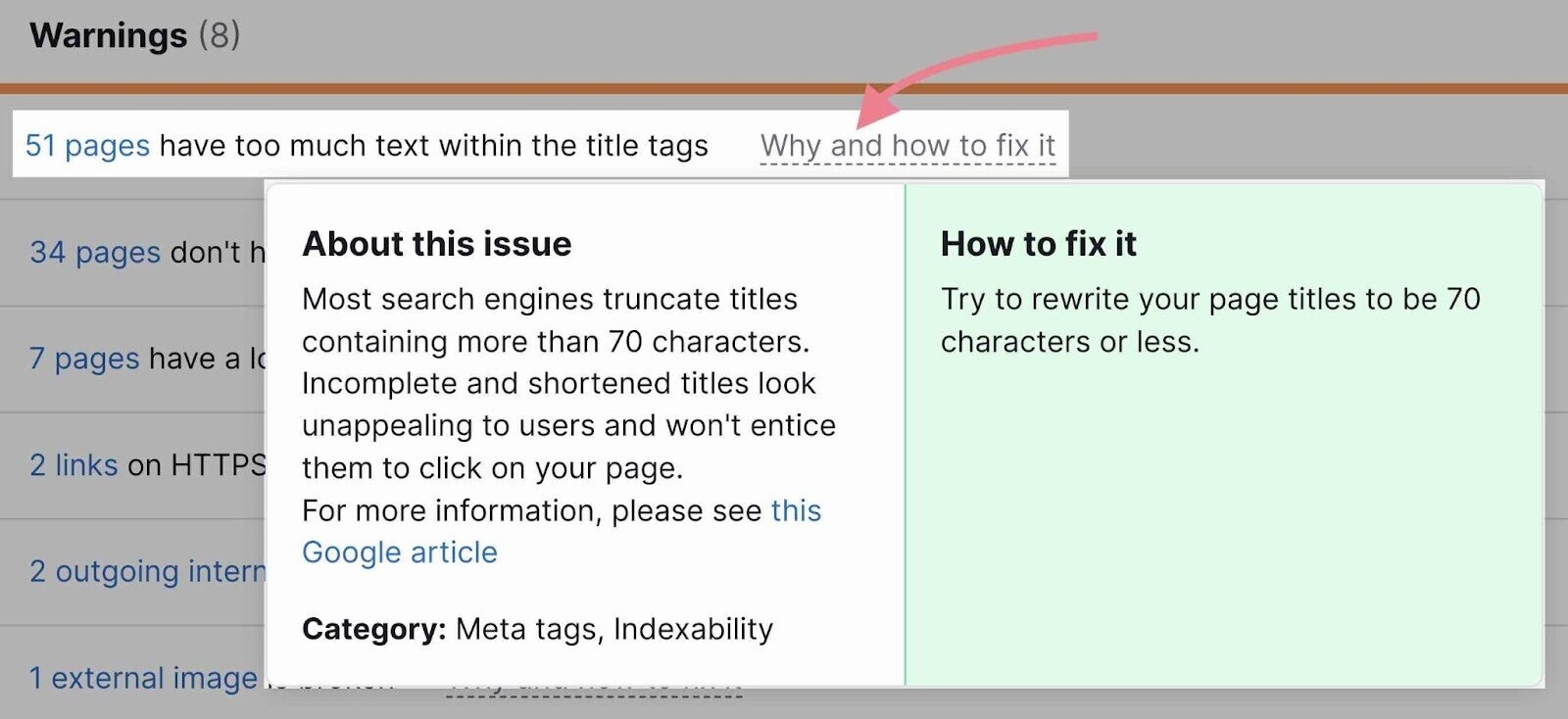

Each issue line includes a “Why and how to fix it” link. When you click it, you’ll get a short description of the issue, tips on how to fix it, and useful links to relevant tools or resources.

The issues you find here will fit into one of two categories, depending on your skill level:

- Issues you can fix on your own

- Issues a developer or system administrator will need to help you fix

The first time you audit a website, it can seem like there’s just too much to do. That’s why we’ve put together this detailed guide. It will help beginners, especially, to make sure they don’t miss anything major.

We recommend performing a technical SEO audit on any new site you are working with.

After that, audit your site at least once per quarter (ideally monthly). Or whenever you see a decline in rankings.

1. Spot and Fix Crawlability and Indexability Issues

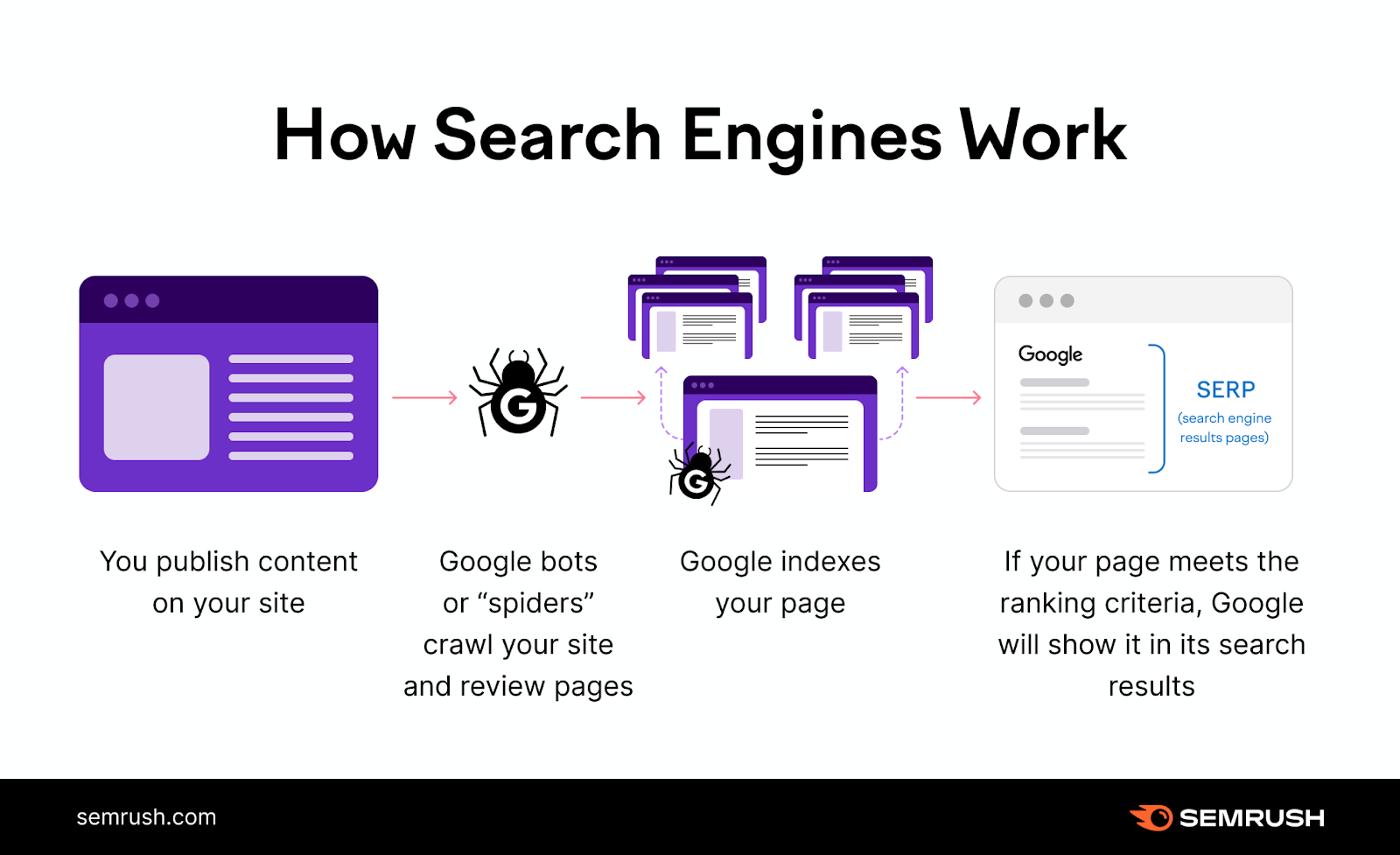

Google and other search engines must be able to crawl and index your webpages in order to rank them.

That’s why crawlability and indexability are a huge part of SEO.

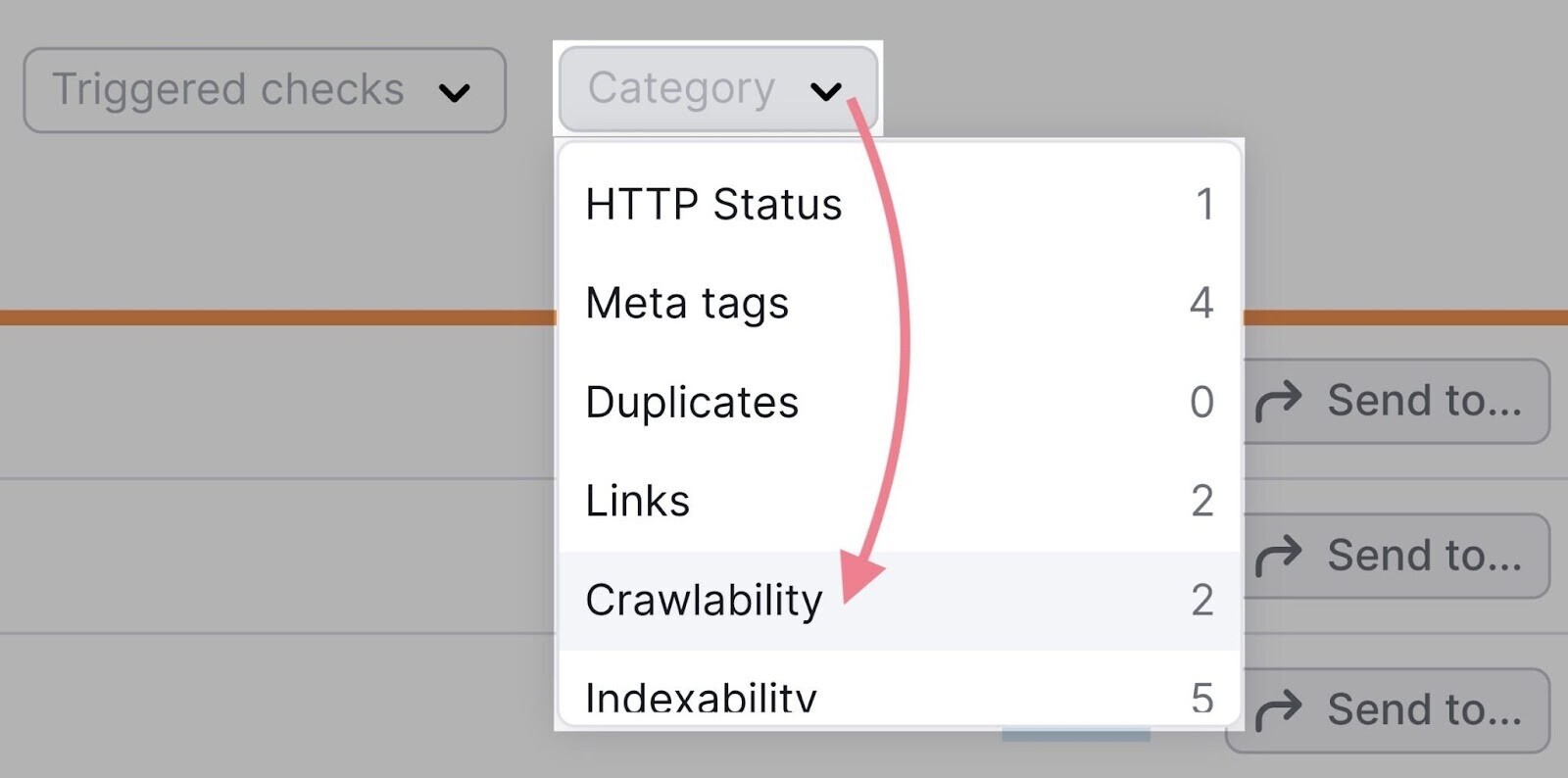

To check if your site has any crawlability or indexability issues, go to the “Issues” tab in Site Audit.

Then, click “Category” and select “Crawlability.”

You can repeat the same process with the “Indexability” category.

Issues connected to crawlability and indexability will very often be at the top of the results—in the “Errors” section—because they tend to be quite serious.

We’ll cover several of these issues in different sections of this guide. Because many technical SEO issues are connected to crawlability and indexability in one way or another.

Now, we’ll take a closer look at two important website files—robots.txt and sitemap.xml—that have a huge impact on how search engines discover your site.

Check for and Fix Robots.txt Issues

Robots.txt is a text file that tells search engines which pages they should or shouldn’t crawl. It lives in the root folder of the site: https://domain.com/robots.txt.

A robots.txt file helps you:

- Point search engine bots away from private folders

- Keep bots from overwhelming server resources

- Specify the location of your sitemap

A single line of code in robots.txt can prevent search engines from crawling your entire site. So you need to make sure your robots.txt file doesn’t disallow any folder or page you want to appear in search results.
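To illustrate how much a single directive matters, here is a minimal Python sketch that uses the standard library's `urllib.robotparser` to test whether a URL is crawlable under a given robots.txt. The sample file contents, the `is_crawlable` helper, and the URLs are hypothetical, and Python's parser may not match Googlebot's behavior in every edge case.

```python
from urllib.robotparser import RobotFileParser


def is_crawlable(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given robots.txt text allows user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical file contents: the first blocks the whole site with one line,
# the second only blocks a private folder.
BLOCK_ALL = "User-agent: *\nDisallow: /\n"
BLOCK_PRIVATE = "User-agent: *\nDisallow: /private/\n"

print(is_crawlable(BLOCK_ALL, "https://domain.com/blog/"))
print(is_crawlable(BLOCK_PRIVATE, "https://domain.com/blog/"))
```

Running a check like this against the pages you want to rank is a quick way to confirm that no rule disallows them by accident.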

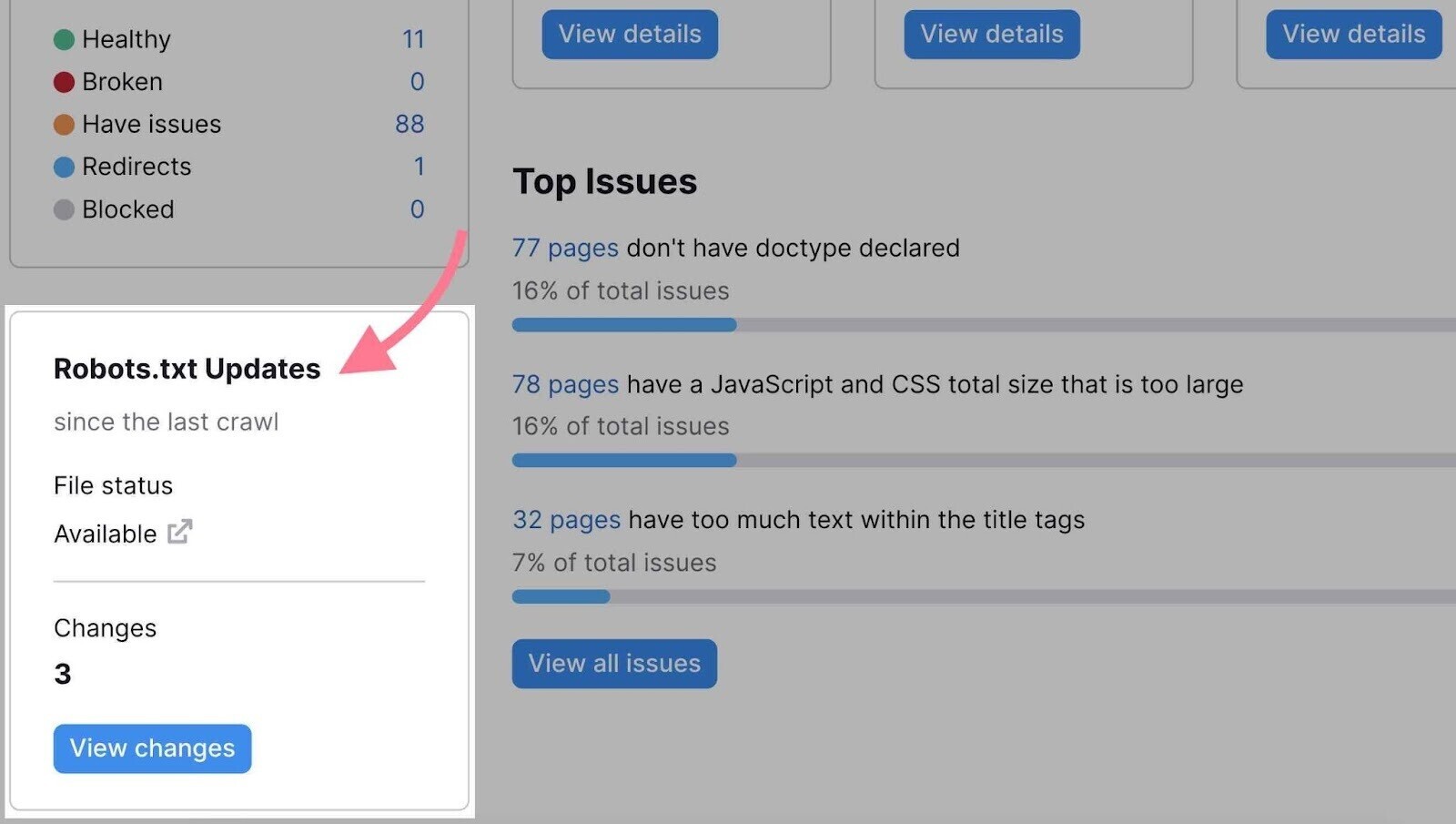

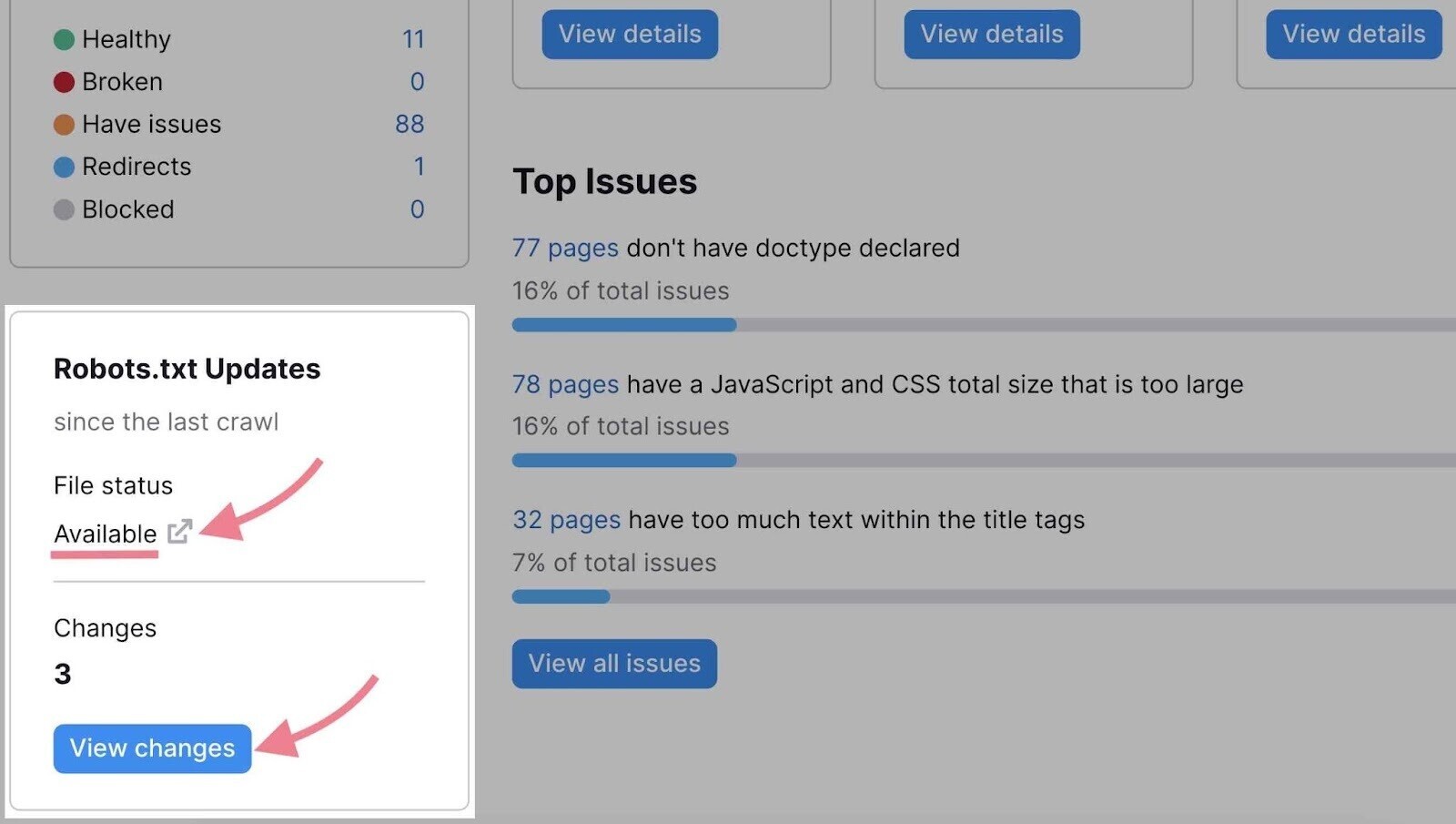

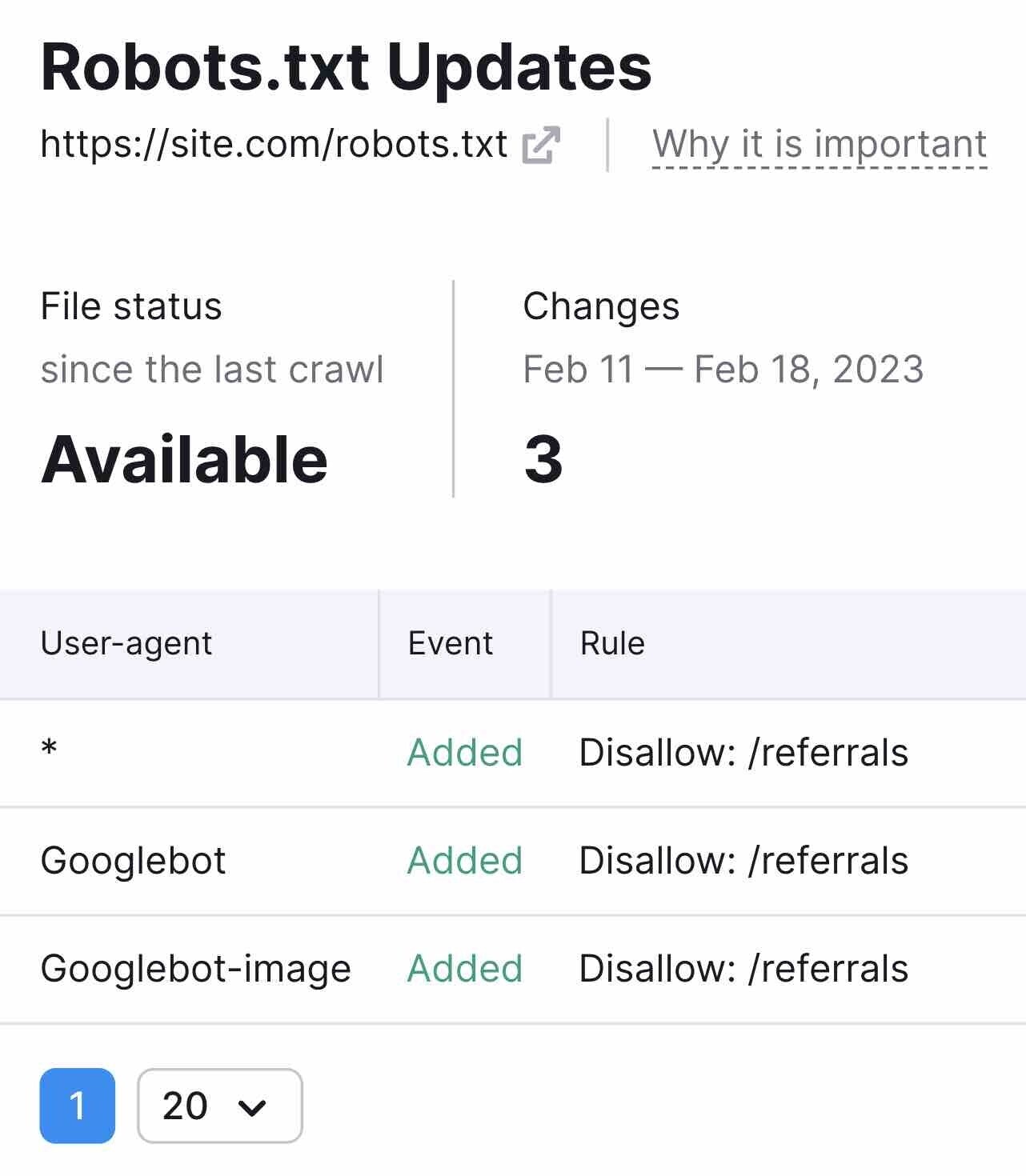

To check your robots.txt file, open Site Audit and scroll down to the “Robots.txt Updates” box at the bottom.

Here, you’ll see if the crawler has detected the robots.txt file on your website.

If the file status is “Available” you can review your robots.txt file by clicking the link icon next to it.

Or you can focus only on the robots.txt file changes since the last crawl by clicking the “View changes” button.

Further reading: Reviewing and fixing the robots.txt file requires technical knowledge. You should always follow Google’s robots.txt guidelines. Read our guide to robots.txt to learn about its syntax and best practices.

To find further issues, you can open the “Issues” tab and search for “robots.txt.” Some issues that may appear include the following:

- Robots.txt file has format errors

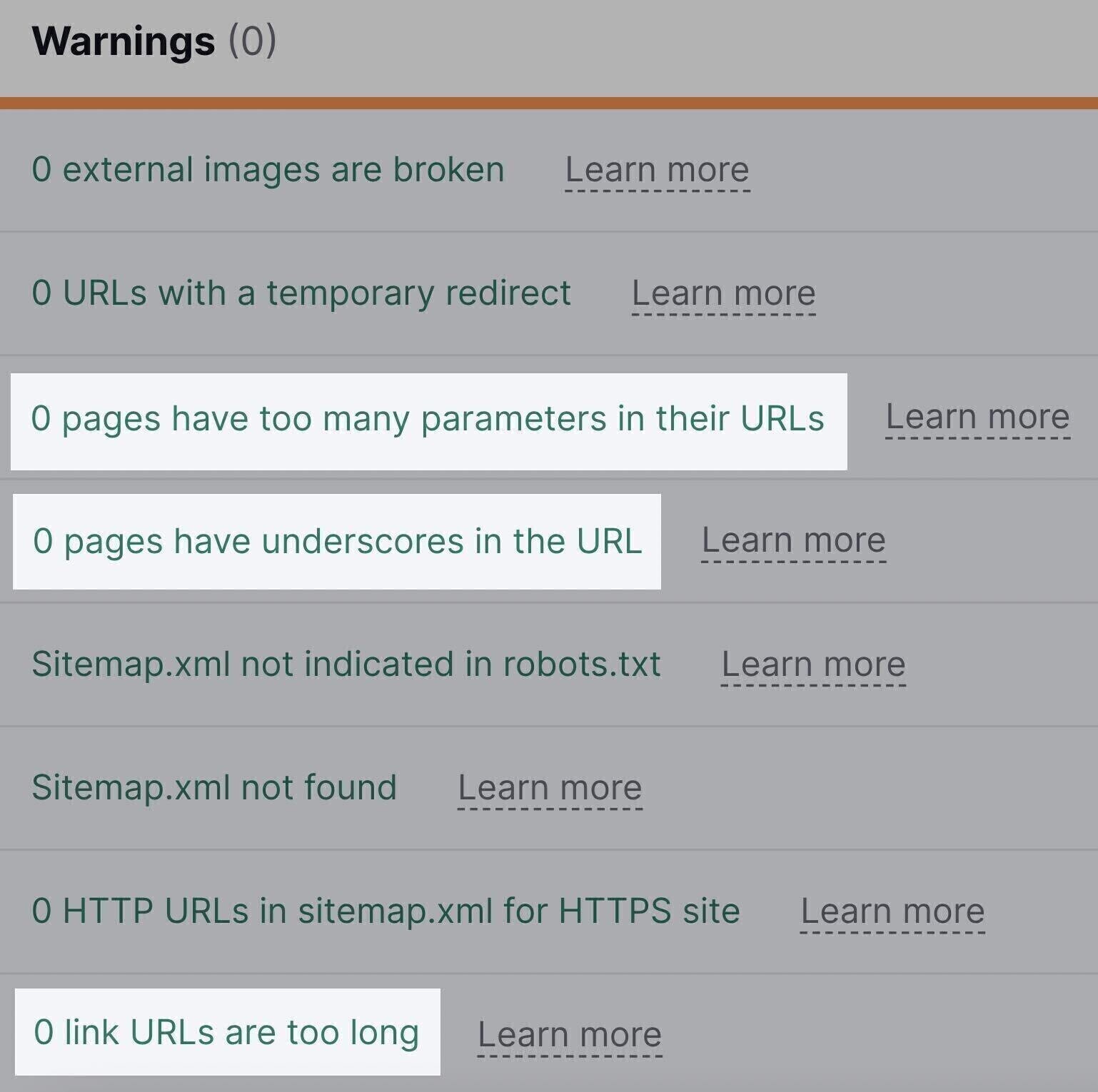

- Sitemap.xml not indicated in robots.txt

- Blocked internal resources in robots.txt

Click the link with the number of found issues. From there, you can inspect them in detail and learn how to fix them.

Note: Besides the robots.txt file, there are two other ways to provide further instructions for search engine crawlers: the robots meta tag and the X-Robots-Tag HTTP header. Site Audit will alert you to issues related to these tags. Learn how to use them in our guide to robots meta tags.

Spot and Fix XML Sitemap Issues

An XML sitemap is a file that lists all the pages you want search engines to index—and, ideally, rank.

Review your XML sitemap during every technical SEO audit to make sure it includes any page you want to rank.

On the other hand, it’s important to check that the sitemap doesn’t include pages you don’t want in the SERPs. Like login pages, customer account pages, or gated content.
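As a rough illustration of this review, the Python sketch below reads the URLs from a sitemap and flags ones that probably should not be listed. The sample sitemap, the helper names, and the list of blocked URL fragments are assumptions made for the example. Only the XML namespace comes from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Return every <loc> URL listed in an XML sitemap."""
    root = ET.fromstring(xml_text)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


def unwanted_urls(urls, blocked_fragments=("/login", "/account")):
    """Return URLs containing a fragment you want kept out of the SERPs (hypothetical list)."""
    return [url for url in urls if any(fragment in url for fragment in blocked_fragments)]


# Hypothetical sitemap used only for this example.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://domain.com/</loc></url>
  <url><loc>https://domain.com/blog/</loc></url>
  <url><loc>https://domain.com/login</loc></url>
</urlset>"""

print(unwanted_urls(sitemap_urls(SAMPLE_SITEMAP)))
```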

Note: If your site doesn’t have a sitemap.xml file, read our guide on how to create an XML sitemap.

Next, check whether your sitemap works correctly.

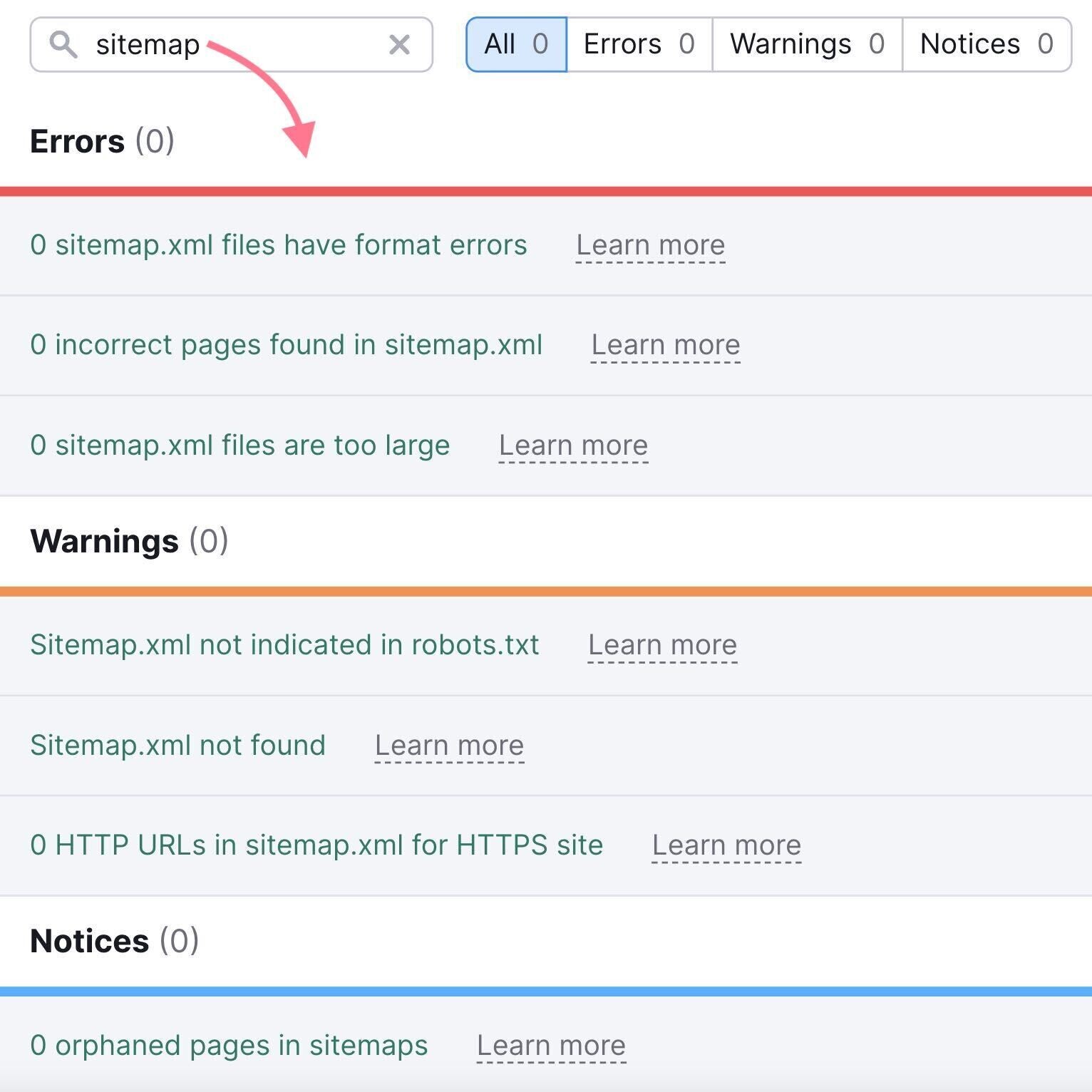

The Site Audit tool can detect common sitemap-related issues, such as:

- Incorrect pages in your sitemap

- Format errors in your sitemap

All you need to do is go to the “Issues” tab and type “sitemap” in the search field:

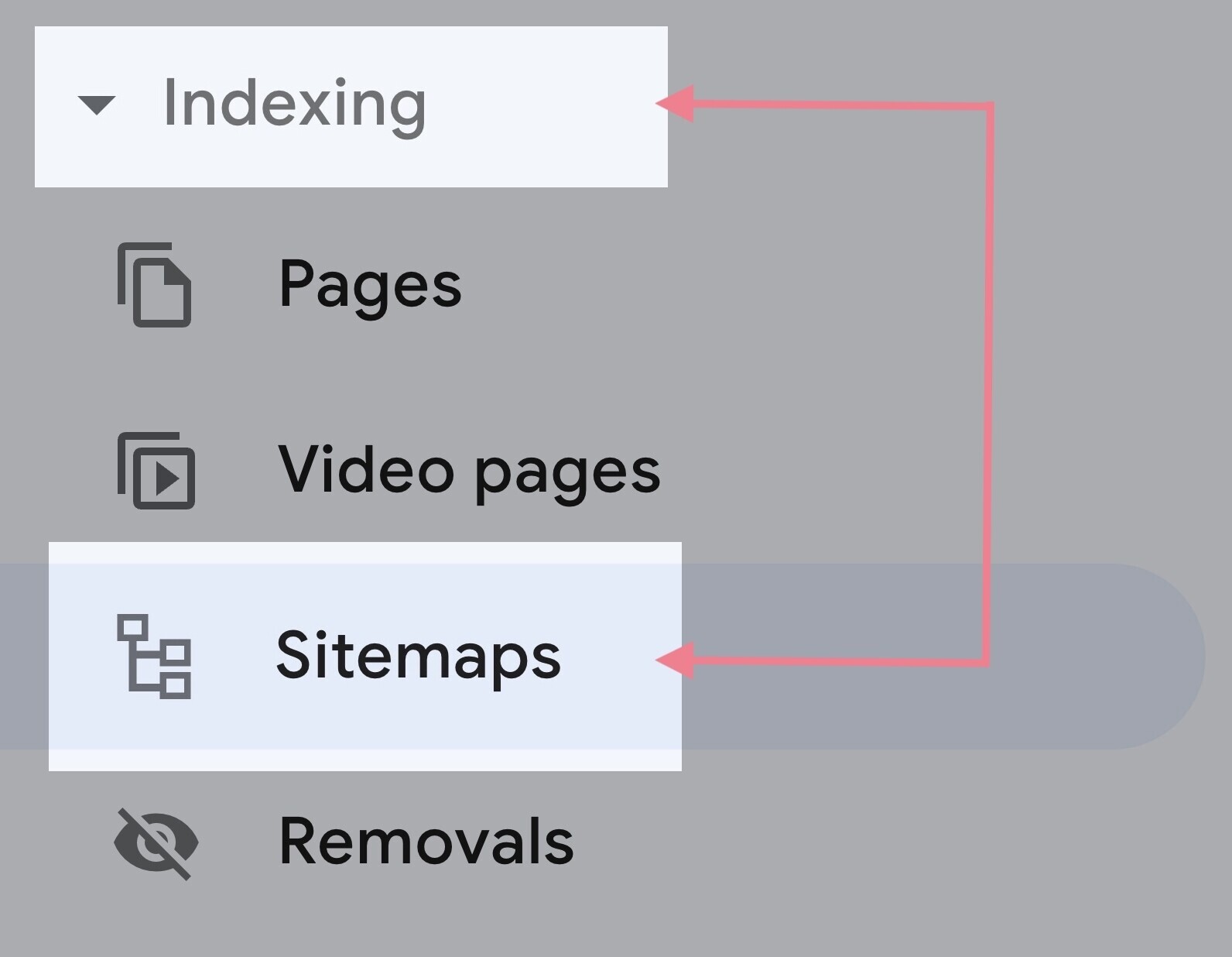

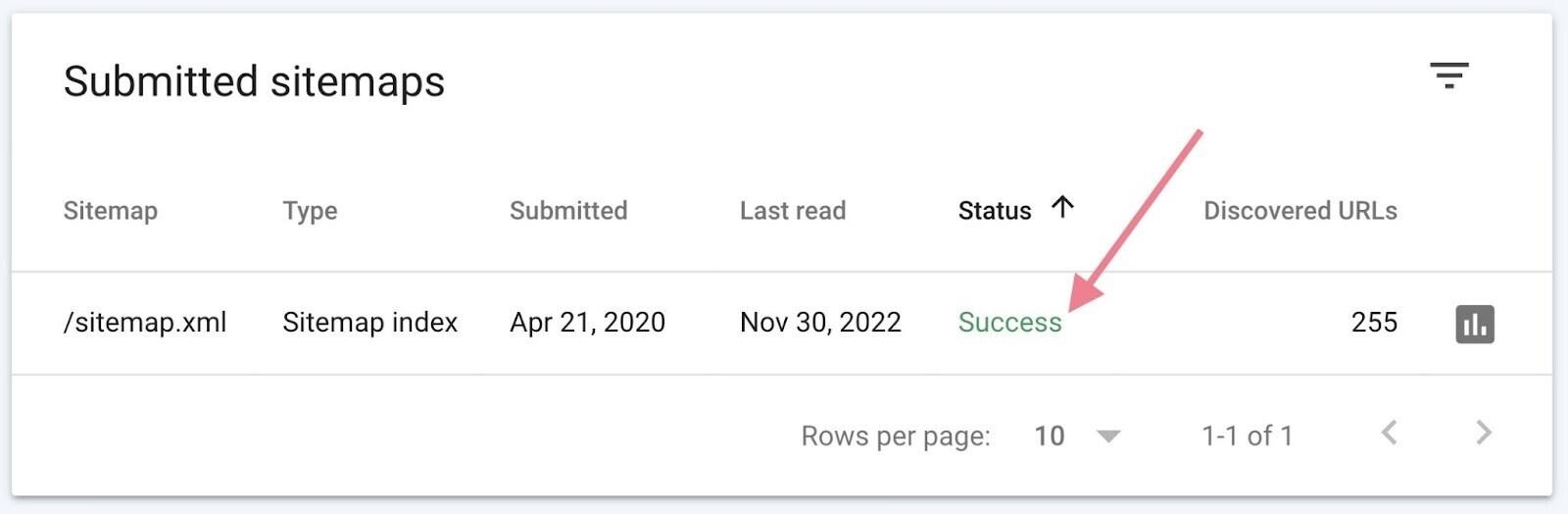

You can also use Google Search Console to identify sitemap issues.

Visit the “Sitemaps” report to submit your sitemap to Google, view your submission history, and review any errors.

You can find it by clicking “Sitemaps” under the “Indexing” section to the left.

If you see “Success” listed next to your sitemap, there are no errors. But the other two potential results—“Has errors” and “Couldn’t fetch”—indicate a problem.

If there are issues, the report will flag them individually. You can follow Google’s troubleshooting guide and fix them.

Further reading: XML sitemaps

2. Audit Your Site Architecture

Site architecture refers to the hierarchy of your webpages and how they are connected through links. You should organize your website in a way that is logical for users and easy to maintain as your website grows.

Good site architecture is important for two reasons:

- It helps search engines crawl and understand the relationships between your pages

- It helps users navigate your site

Let’s take a look at three key aspects of site architecture.

Site Hierarchy

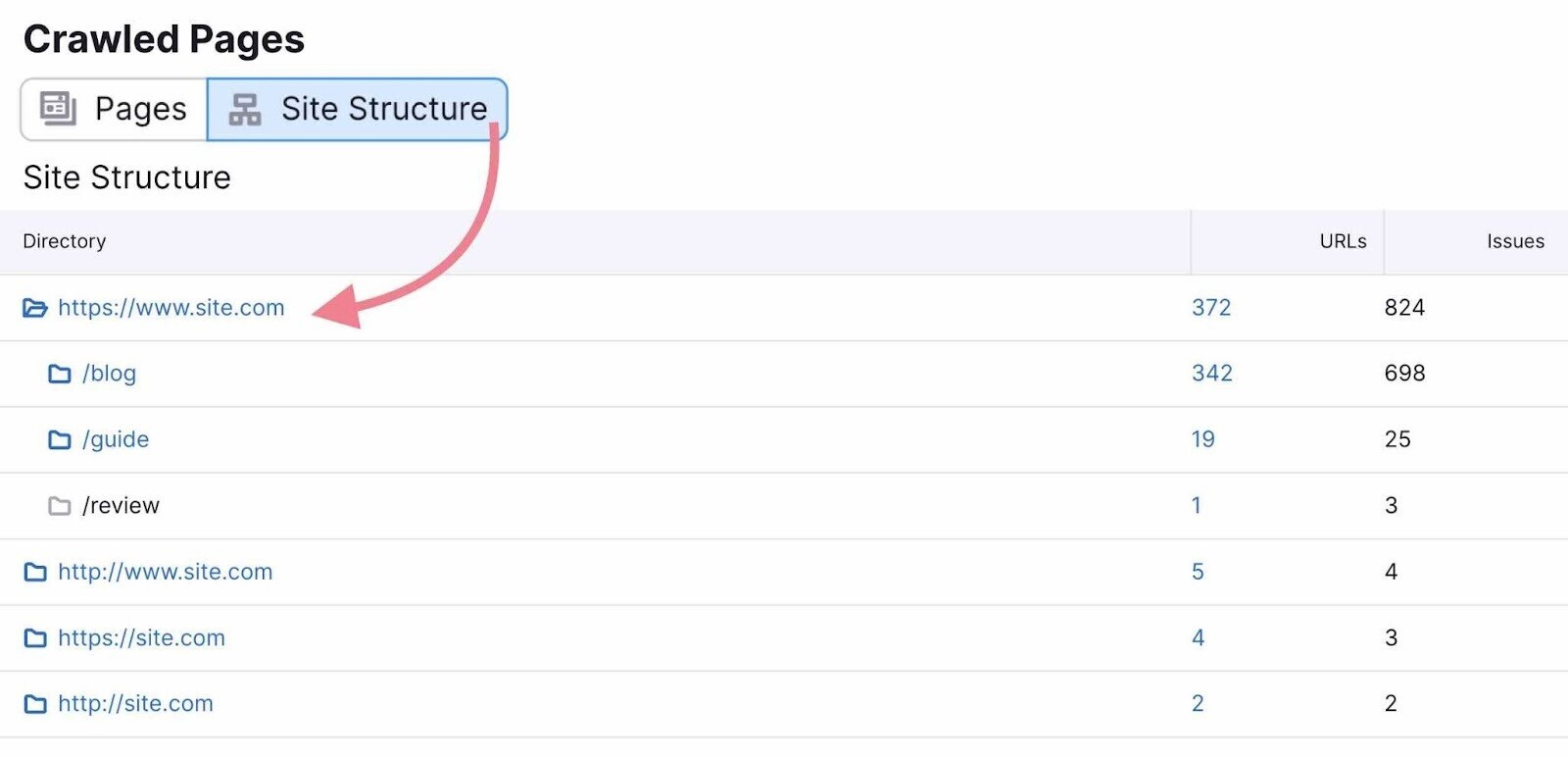

Site hierarchy (or, simply, site structure) is how your pages are organized into subfolders.

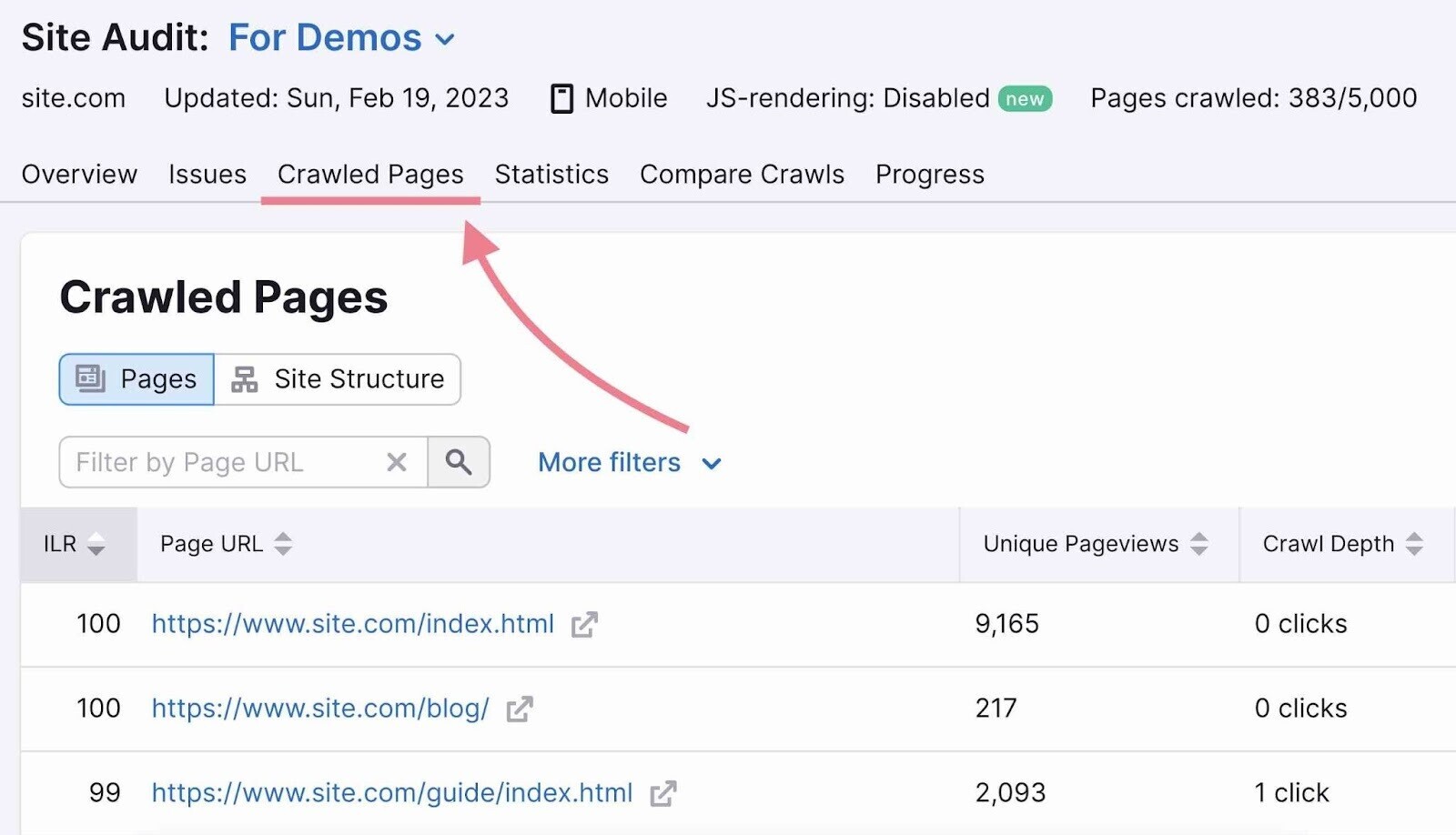

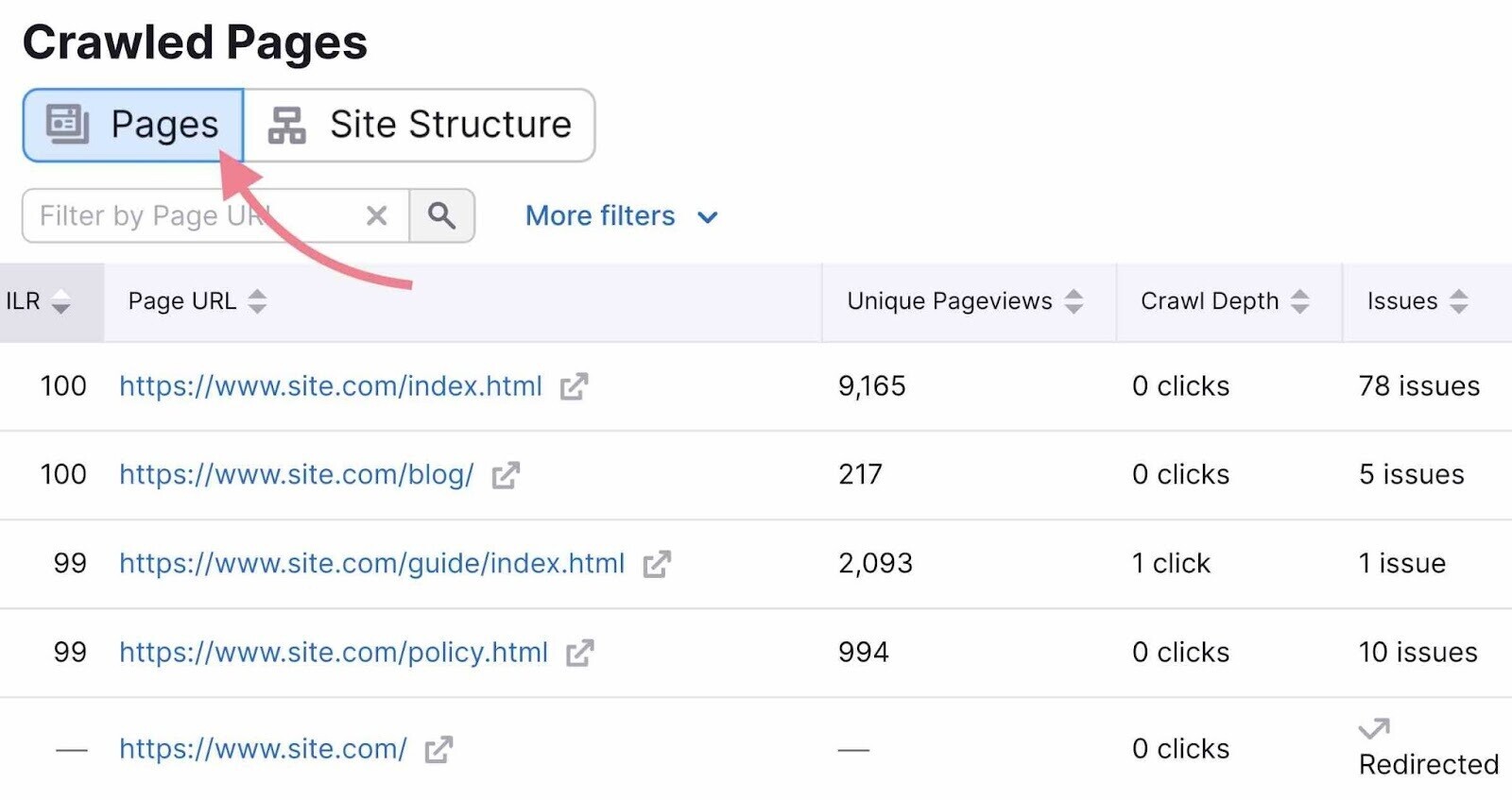

To get a good overview of your site’s hierarchy, go to the “Crawled Pages” tab in Site Audit.

Then, switch the view to “Site Structure.”

You’ll see an overview of your website’s subdomains and subfolders. Review them to make sure the hierarchy is organized and logical.

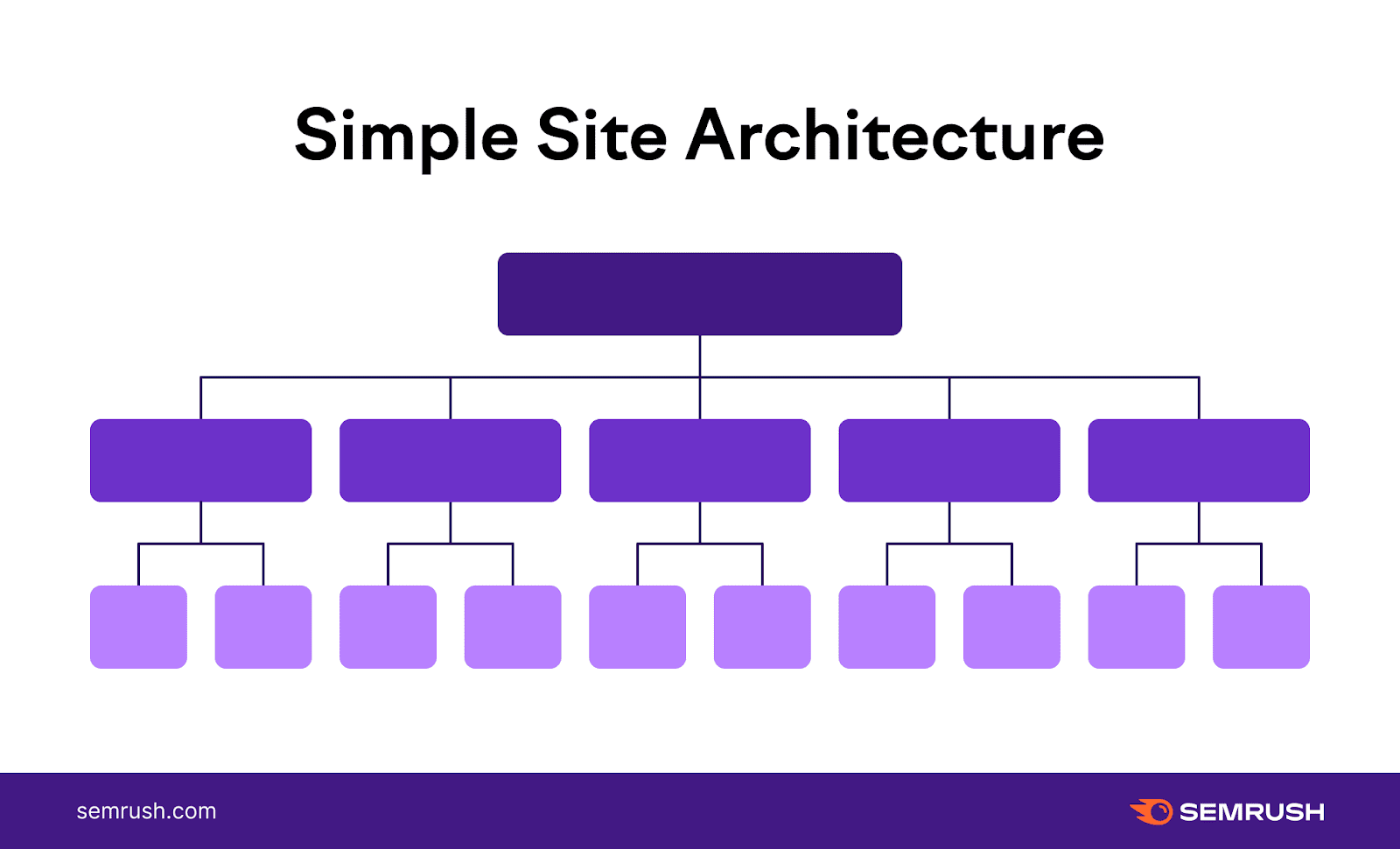

Aim for a flat site architecture, which looks like this:

Ideally, it should only take a user three clicks to find the page they want from the homepage.

When it takes more than three clicks, your site’s hierarchy is too deep. Search engines may treat pages deeper in the hierarchy as less important or relevant.

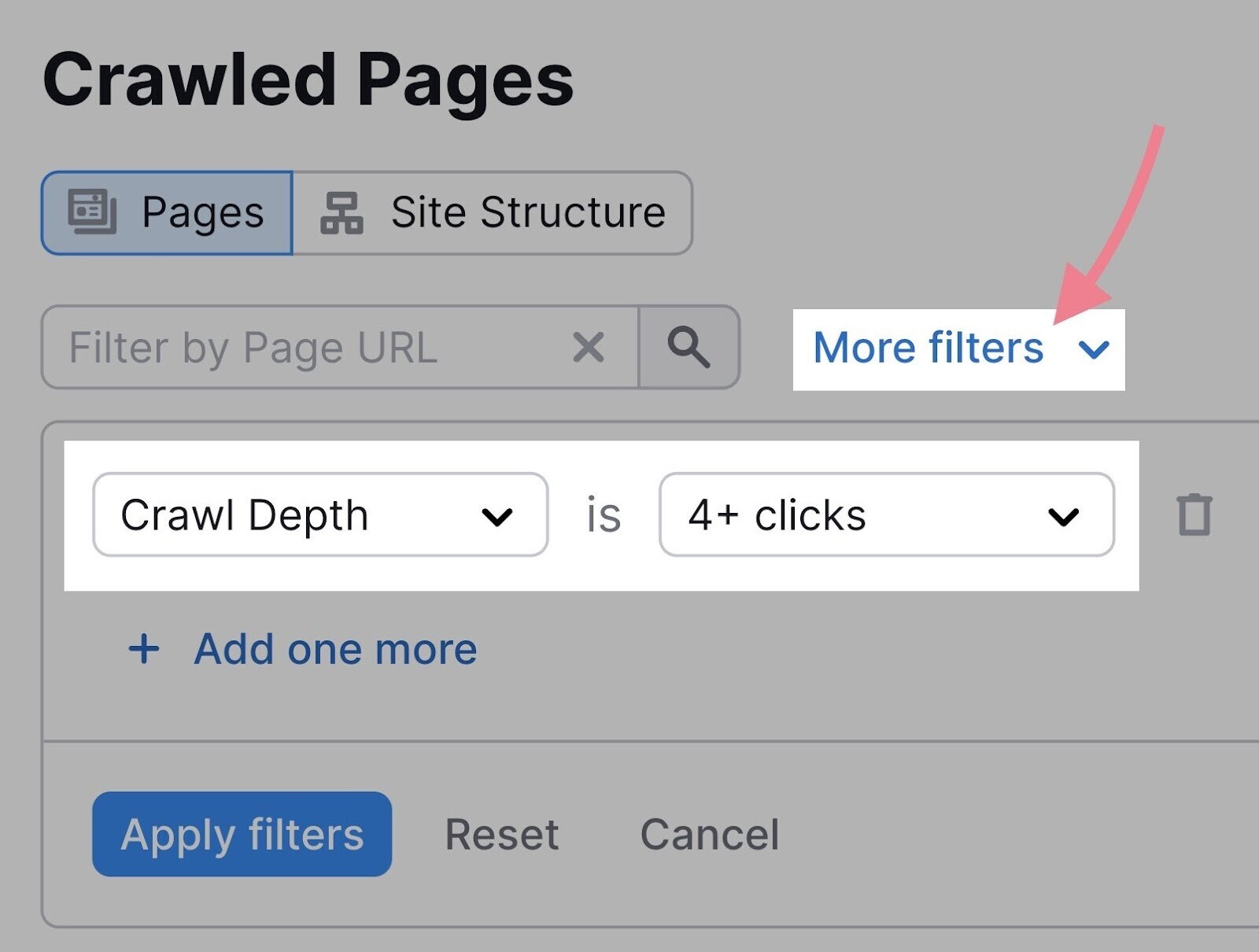

To make sure all your pages fulfill this requirement, stay within the “Crawled Pages” tab and switch back to the “Pages” view.

Then, click “More filters” and select the following parameters: “Crawl Depth” is “4+ clicks.”

To fix this issue, add internal links to pages that are too deep in the site’s structure. (More on internal linking in the next chapter.)
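The idea of crawl depth can be sketched with a breadth-first search over your internal links. This is only an illustration of the concept, not how Site Audit computes it. The `SITE_LINKS` map and the function names are hypothetical.

```python
from collections import deque


def click_depths(links, homepage="/"):
    """Breadth-first search: fewest clicks needed to reach each page from the homepage."""
    depths = {homepage: 0}
    queue = deque([homepage])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(links, homepage="/", max_clicks=3):
    """Return pages that need more than max_clicks clicks from the homepage."""
    depths = click_depths(links, homepage)
    return sorted(page for page, depth in depths.items() if depth > max_clicks)


# Hypothetical internal link map: page -> pages it links to.
SITE_LINKS = {
    "/": ["/children", "/blog"],
    "/children": ["/children/girls"],
    "/children/girls": ["/children/girls/footwear"],
    "/children/girls/footwear": ["/children/girls/footwear/sandals"],
}

print(too_deep(SITE_LINKS))
```

Adding a link from a shallow page (for example, from the homepage) to a flagged page lowers its depth the next time the search runs.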

Navigation

Your site’s navigation (like menus, footer links, and breadcrumbs) should make it easier for users to navigate your site.

This is an important pillar of good site architecture.

Your navigation should be:

- Simple. Try to avoid mega menus or non-standard names for menu items (like “Idea Lab” instead of “Blog”)

- Logical. It should reflect the hierarchy of your pages. A great way to achieve this is to use breadcrumbs.

It’s harder to navigate a site with messy architecture. Conversely, when a website has a clear and easy-to-use navigation, the architecture will be easier to understand for both users and bots.

No tool can help you create user-friendly menus. You need to review your website manually and follow the UX best practices for navigation.

URL Structure

Like a website’s hierarchy, a site’s URL structure should be consistent and easy to follow.

Let’s say a website visitor follows the menu navigation for girls’ shoes:

Homepage > Children > Girls > Footwear

The URL should mirror the architecture:

domain.com/children/girls/footwear

Some sites should also consider using a URL structure that shows a page or website is relevant to a specific country. For example, a website for Canadian users of a product may use either “domain.com/ca” or “domain.ca.”

Lastly, make sure your URL slugs are user-friendly and follow best practices.

Site Audit will help you identify some common issues with URLs, such as:

- Use of underscores in URLs

- Too many parameters in URLs

- URLs that are too long
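The three checks above can be approximated in a few lines of Python. The thresholds here (`max_length=200`, `max_params=2`) are assumptions chosen for the example and are not necessarily the limits Site Audit applies.

```python
from urllib.parse import urlsplit, parse_qsl


def url_issues(url, max_length=200, max_params=2):
    """Return a list of common URL problems found in url (thresholds are assumptions)."""
    parts = urlsplit(url)
    issues = []
    if "_" in parts.path:
        issues.append("underscores")
    if len(parse_qsl(parts.query, keep_blank_values=True)) > max_params:
        issues.append("too many parameters")
    if len(url) > max_length:
        issues.append("too long")
    return issues


print(url_issues("https://domain.com/children/girls/footwear"))
print(url_issues("https://domain.com/girls_shoes?a=1&b=2&c=3"))
```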

Further reading: Website architecture

3. Fix Internal Linking Issues

Internal links are links that point from one page to another page within your domain.

Here’s why internal links matter:

- They are an essential part of a good website architecture

- They distribute link equity (also known as “link juice” or “authority”) across your pages to help search engines identify important pages

As you improve your site’s structure and make it easier for both search engines and users to find content, you’ll need to check the health and status of the site’s internal links.

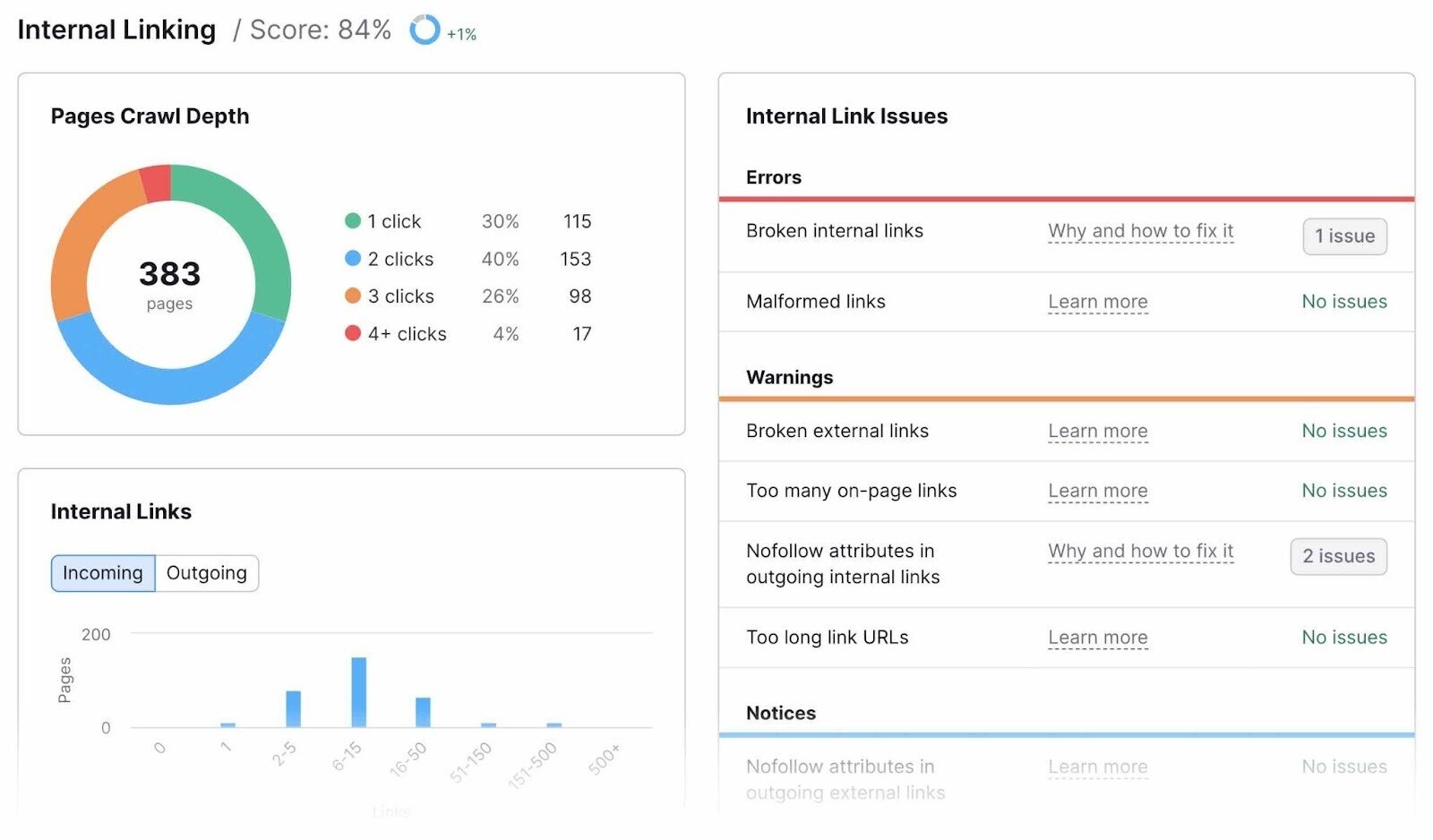

Refer back to the Site Audit report and click “View details” under your “Internal Linking” score.

In this report, you’ll see a breakdown of the site’s internal link issues.

Tip: Check out Semrush’s study on the most common internal linking mistakes and how to fix them.

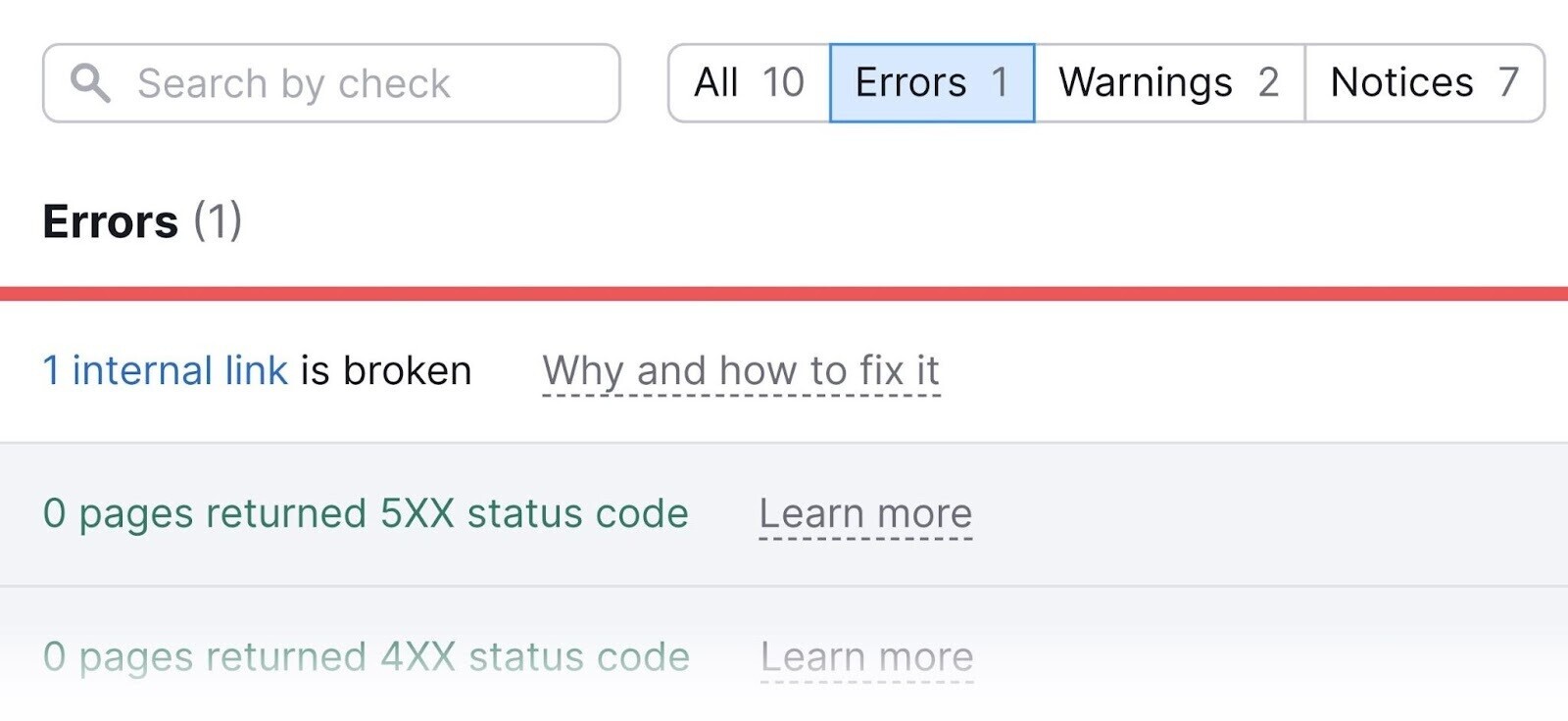

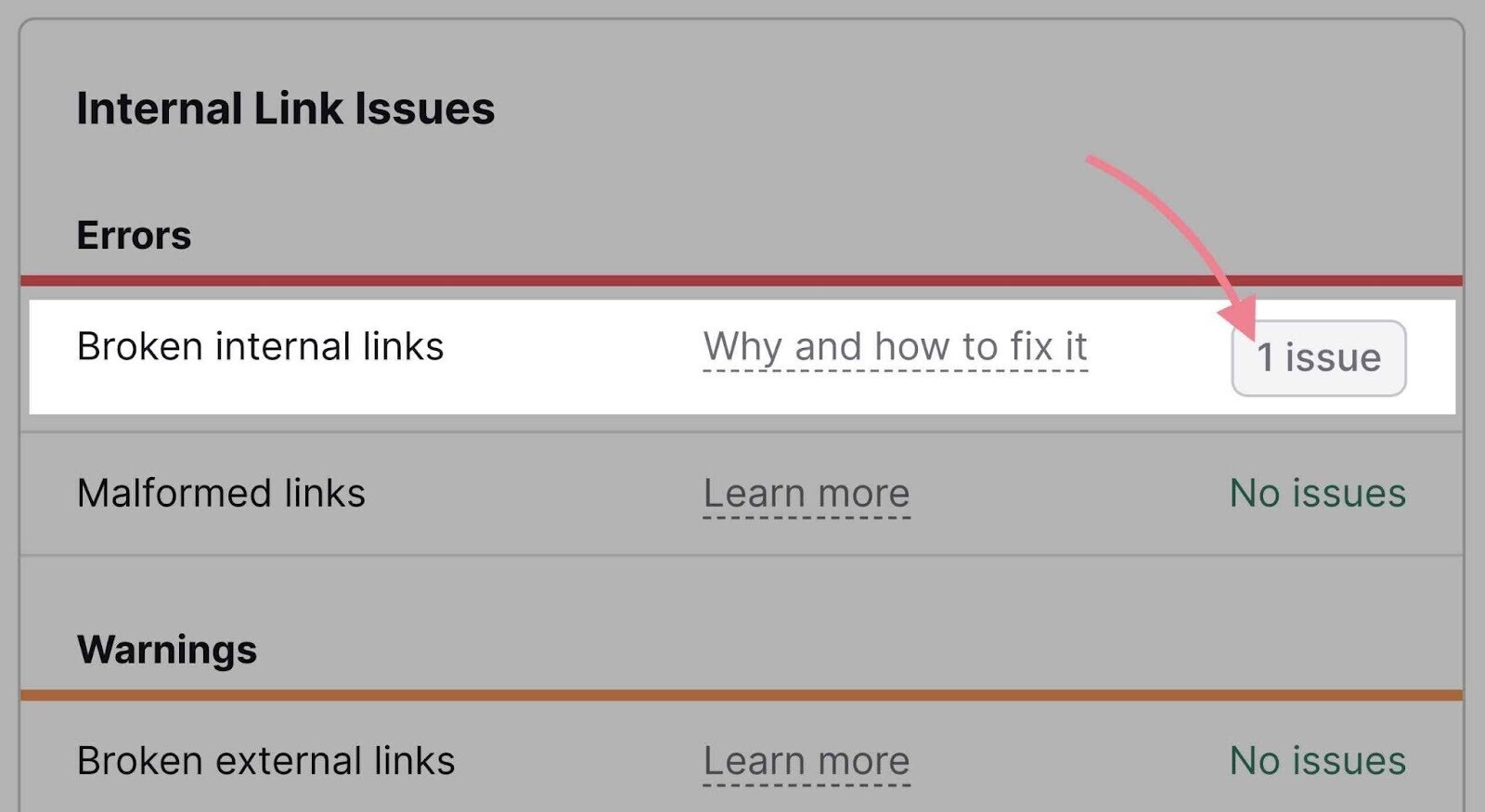

A typical issue that is fairly easy to fix is broken internal linking. This refers to links that point to pages that no longer exist.

All you need to do is to click the number of issues in the “Broken internal links” error and manually update the broken links you’ll see in the list.

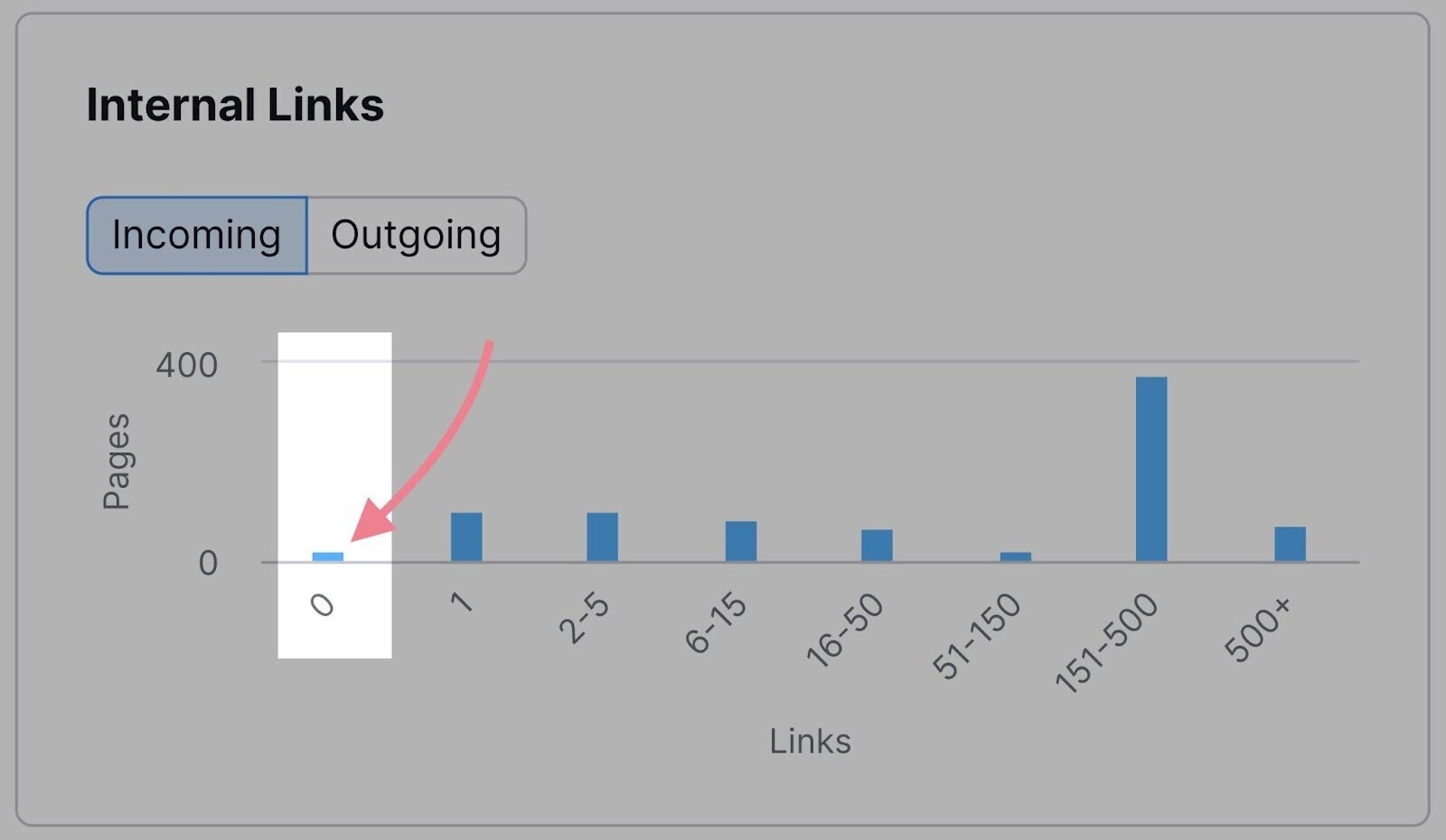

Another easy fix is orphaned pages. These are pages with no links pointing to them. That means you can’t gain access to them via any other page on the same website.

Check the “Internal Links” bar graph and see if there are any pages with zero links.

Add at least one internal link to each of these pages.
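If you have an export of your internal links, a short script can list orphaned pages for you. The sketch below assumes a hypothetical `links` dictionary in which every known page appears as a key mapped to the pages it links to.

```python
def orphaned_pages(links, homepage="/"):
    """Return pages that no other page links to (the homepage is the entry point, so it is skipped)."""
    linked_to = {
        target
        for source, targets in links.items()
        for target in targets
        if target != source  # a page linking to itself does not count
    }
    return sorted(page for page in links if page not in linked_to and page != homepage)


# Hypothetical link map: every known page -> pages it links to.
LINKS = {
    "/": ["/blog", "/pricing"],
    "/blog": ["/"],
    "/pricing": [],
    "/old-landing-page": ["/"],
}

print(orphaned_pages(LINKS))
```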

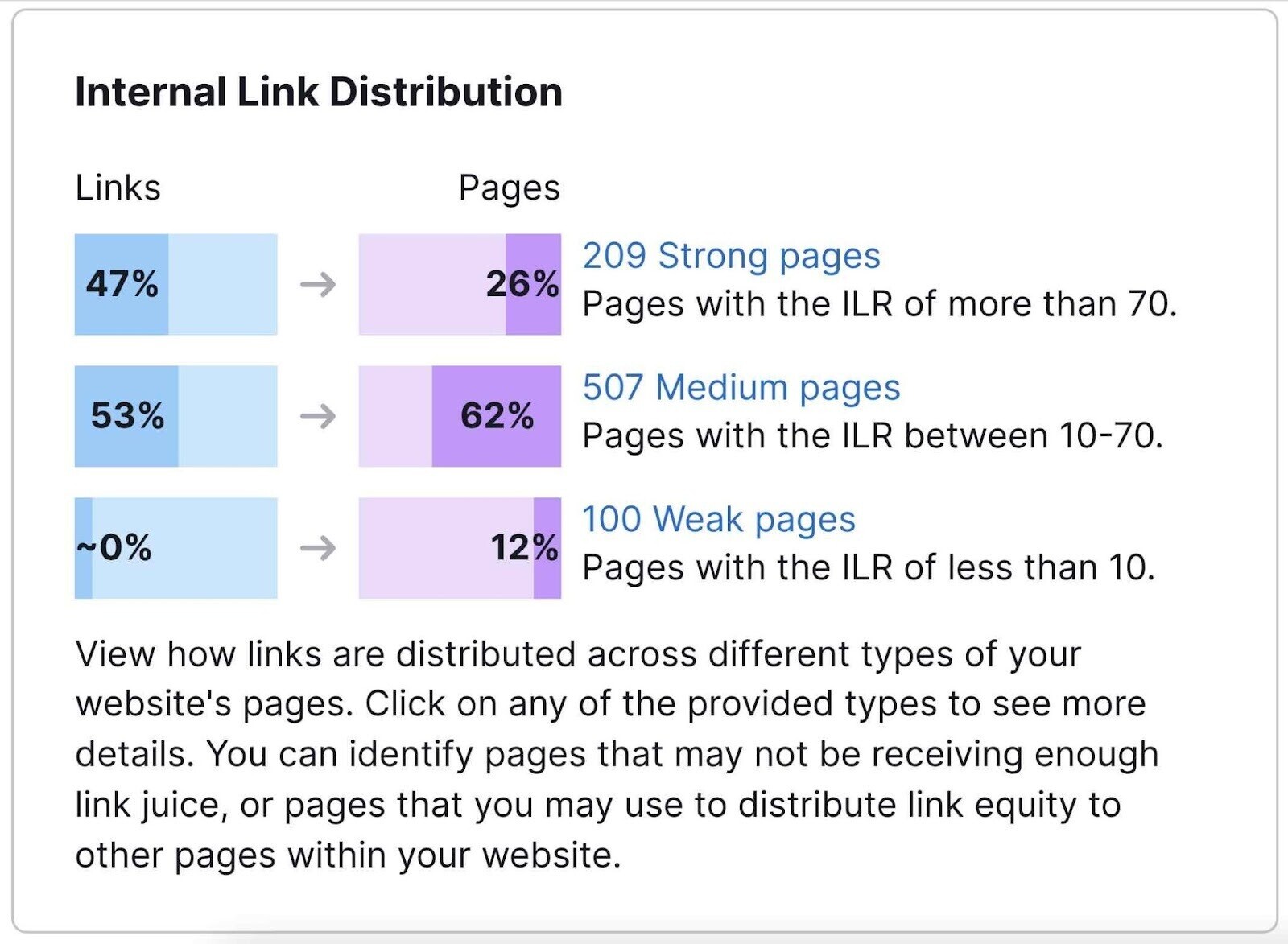

Last but not least, you can use the “Internal Link Distribution” graph to see the distribution of your pages according to their Internal LinkRank (ILR).

ILR shows how strong a page is in terms of internal linking. The closer to 100, the stronger a page is.

Use this metric to find out which pages could benefit from additional internal links. And which pages you can use to distribute more link equity across your domain.

Of course, you may be fixing issues that could have been avoided. That’s why you should follow internal linking best practices going forward:

- Make internal linking part of your content creation strategy

- Every time you create a new page, make sure to link to it from existing pages

- Don’t link to URLs that have redirects (link to the redirect destination instead)

- Link to relevant pages and provide relevant anchor text

- Use internal links to show search engines which pages are important

- Don’t use too many internal links (use common sense here—a standard blog post probably doesn’t need 300 internal links)

- Learn about nofollow attributes and use them correctly

Further reading: Internal links

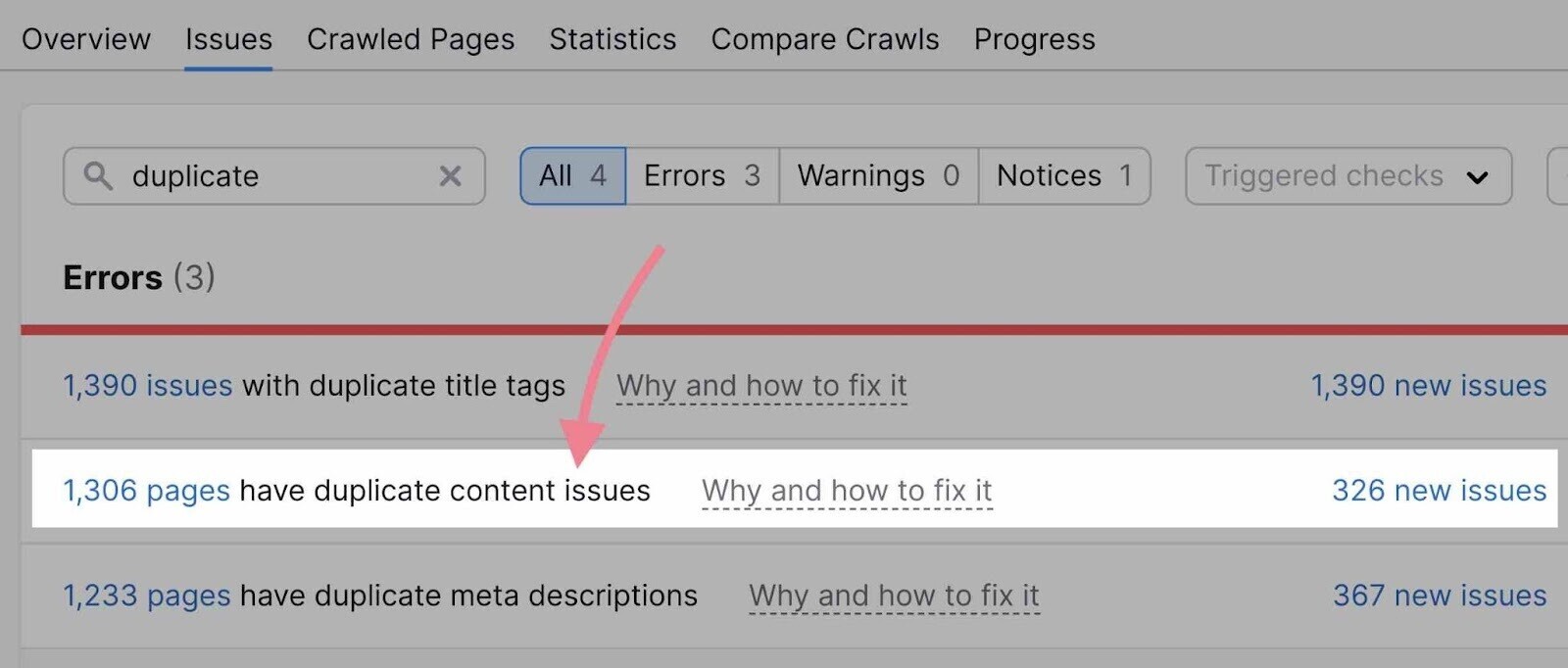

4. Spot and Fix Duplicate Content Issues

Duplicate content means multiple webpages contain identical or nearly identical content.

It can lead to several problems, including the following:

- An incorrect version of your page may display in SERPs

- Pages may not perform well in SERPs, or they may have indexing problems

Site Audit flags pages as duplicate content if their content is at least 85% identical.

Duplicate content may happen for two common reasons:

- There are multiple versions of URLs

- There are pages with different URL parameters

Let’s take a closer look at each of these issues.

Multiple Versions of URLs

One of the most common reasons a site has duplicate content is that it has several versions of the same URL. For example, a site may have:

- An HTTP version

- An HTTPS version

- A www version

- A non-www version

For Google, these are different versions of the site. So if your page runs on more than one of these URLs, Google will consider it a duplicate.

To fix this issue, select a preferred version of your site and set up a sitewide 301 redirect. This will ensure only one version of your pages is accessible.
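The redirect itself is configured on your server or CDN, but the mapping it should implement can be sketched in Python. In this example, the preferred version (`https` with `www.domain.com`) and the function names are assumptions. Your site may prefer the non-www variant.

```python
from urllib.parse import urlsplit, urlunsplit


def _bare(hostname):
    """Lowercase a hostname and drop a leading 'www.' so variants can be compared."""
    hostname = hostname.lower()
    return hostname[4:] if hostname.startswith("www.") else hostname


def preferred_url(url, scheme="https", host="www.domain.com"):
    """Map any HTTP/HTTPS, www/non-www variant of a URL to the one preferred version."""
    parts = urlsplit(url)
    if _bare(parts.netloc) != _bare(host):
        raise ValueError(f"{url} belongs to a different site")
    return urlunsplit((scheme, host, parts.path or "/", parts.query, parts.fragment))


# All four variants should resolve to the same destination.
for variant in (
    "http://domain.com/page",
    "http://www.domain.com/page",
    "https://domain.com/page",
    "https://www.domain.com/page",
):
    print(variant, "->", preferred_url(variant))
```

A URL needs a 301 redirect whenever it differs from the value `preferred_url` returns for it.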

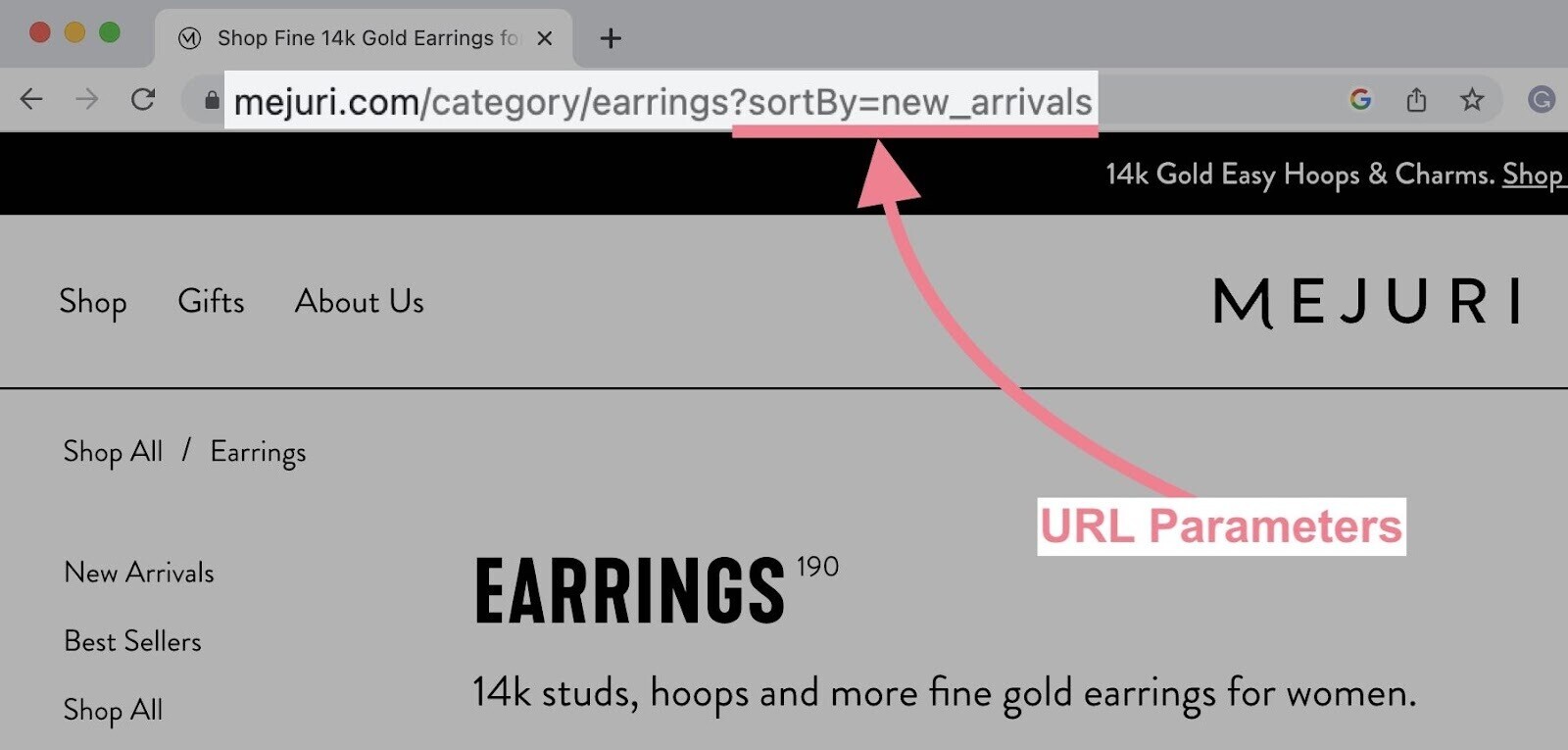

URL Parameters

URL parameters are extra elements of a URL used to filter or sort website content. They’re commonly used for product pages with very slight changes (e.g., different color variations of the same product).

You can identify them because they follow a question mark and use an equal sign (e.g., domain.com/shoes?color=blue).

Since URLs with parameters have almost the same content as their counterparts without parameters, they can often be identified as duplicates.

Google usually groups these pages and tries to select the best one to use in search results. In other words, Google will probably take care of this issue.

Nevertheless, Google recommends a few actions to reduce potential problems:

- Reducing unnecessary parameters

- Using canonical tags pointing to the URLs with no parameters
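As a minimal sketch of the second recommendation, the Python below strips a set of ignorable parameters from a URL and builds the matching canonical tag. The parameter names in `ignored` and both helper names are hypothetical. Which parameters are safe to drop depends on your site.

```python
from html import escape
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode


def strip_parameters(url, ignored=("color", "sort", "utm_source")):
    """Remove parameters that only filter, sort, or track (hypothetical list) from a URL."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in ignored
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), parts.fragment))


def canonical_tag(url):
    """Build a canonical tag that points to the parameter-free version of url."""
    return f'<link rel="canonical" href="{escape(strip_parameters(url))}" />'


print(canonical_tag("https://domain.com/shoes?color=red&sort=price"))
```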

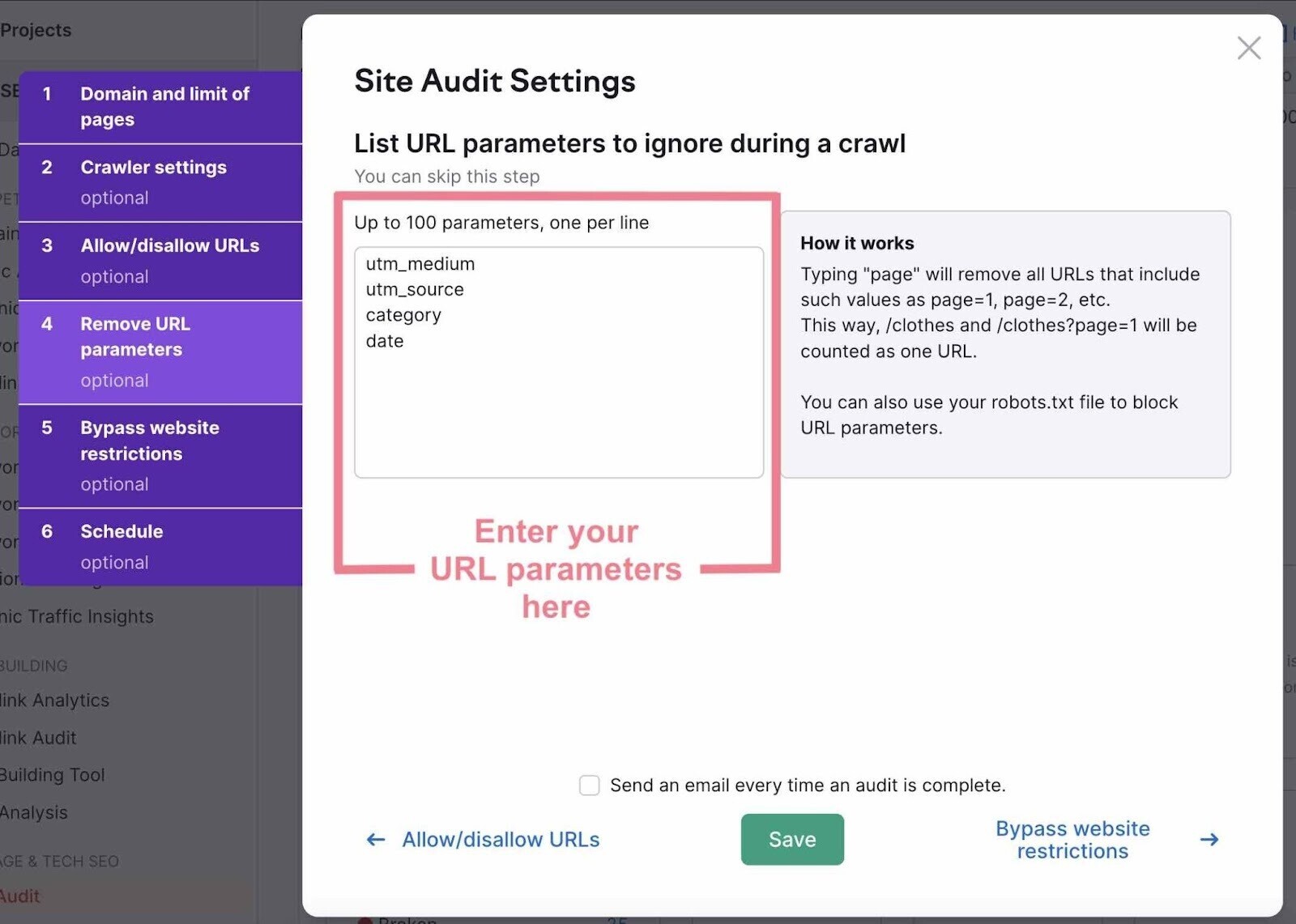

You can avoid crawling pages with URL parameters when setting up your SEO audit. This will ensure the Site Audit tool only crawls pages you want to analyze—not their versions with parameters.

Customize the “Remove URL parameters” section by listing all the parameters you want to ignore:

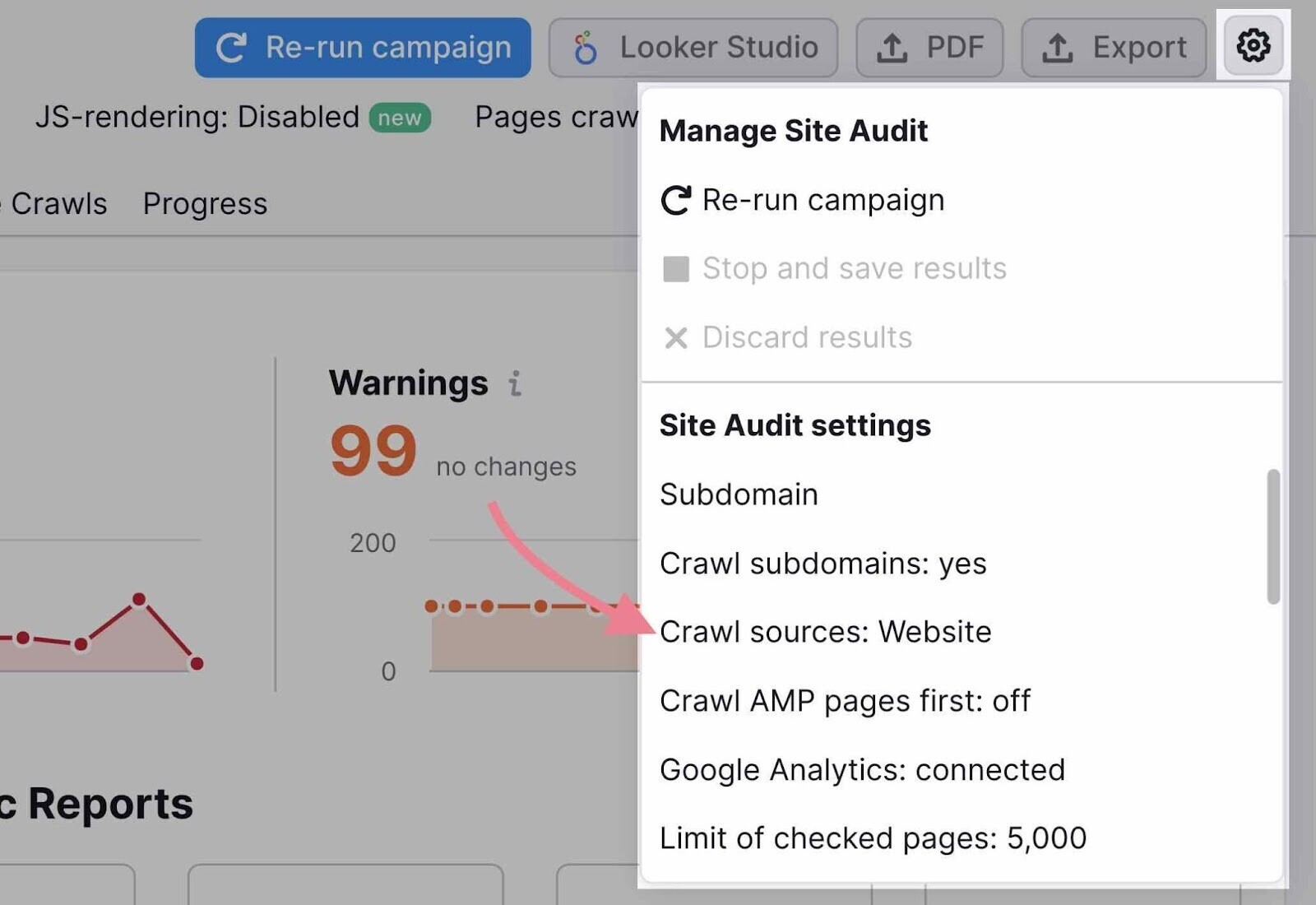

If you need to access these settings later, click the settings icon in the top-right corner and then “Crawl sources: Website” under the Site Audit settings.

Further reading: URL parameters

5. Audit Your Site Performance

Site speed is an important aspect of the overall page experience. Google pays a lot of attention to it. And it has long been a Google ranking factor.

When you audit a site for speed, consider two data points:

- Page speed: How long it takes one webpage to load

- Site speed: The average page speed for a sample set of page views on a site

Improve page speed, and your site speed improves.

This is such an important task that Google has a tool specifically made to address it: PageSpeed Insights.

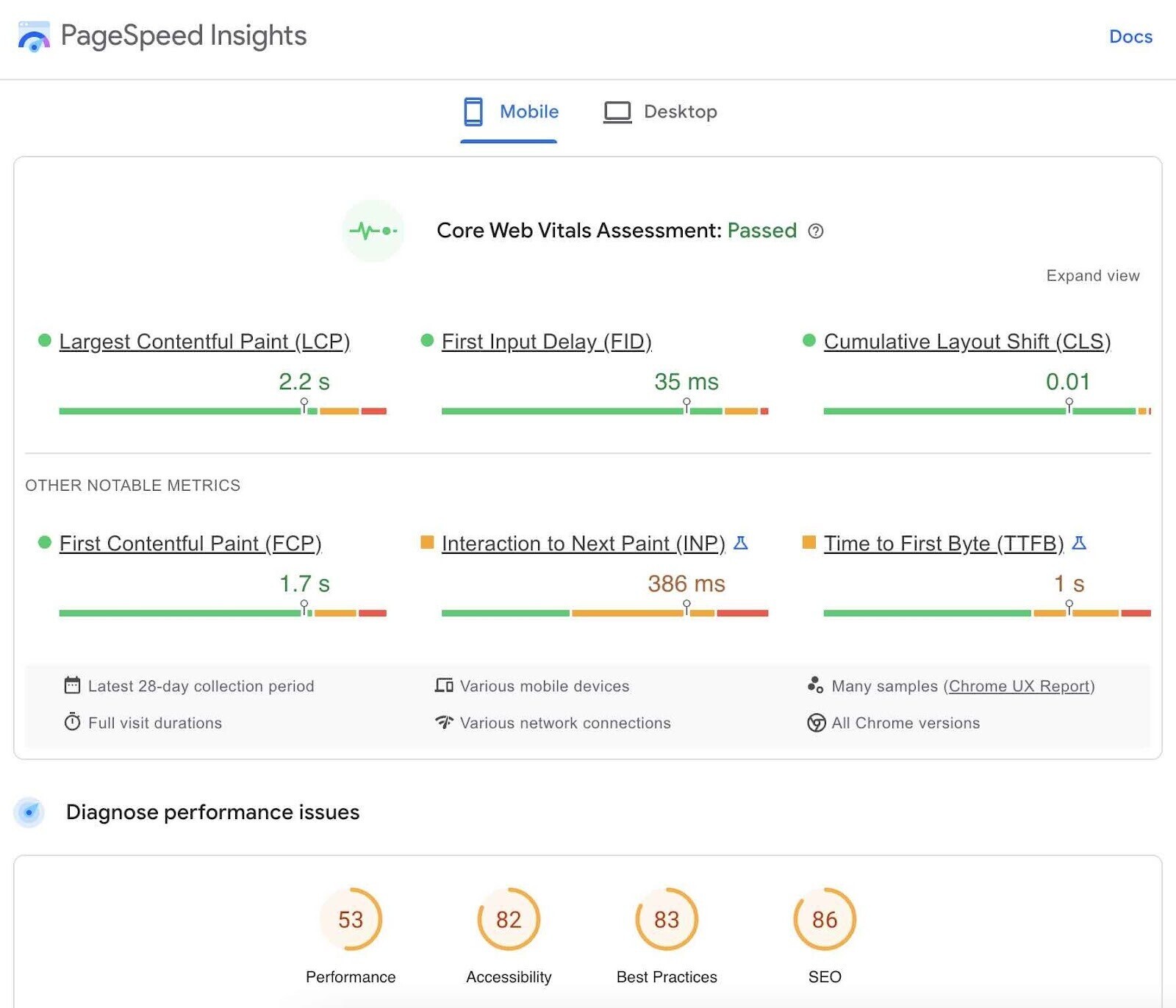

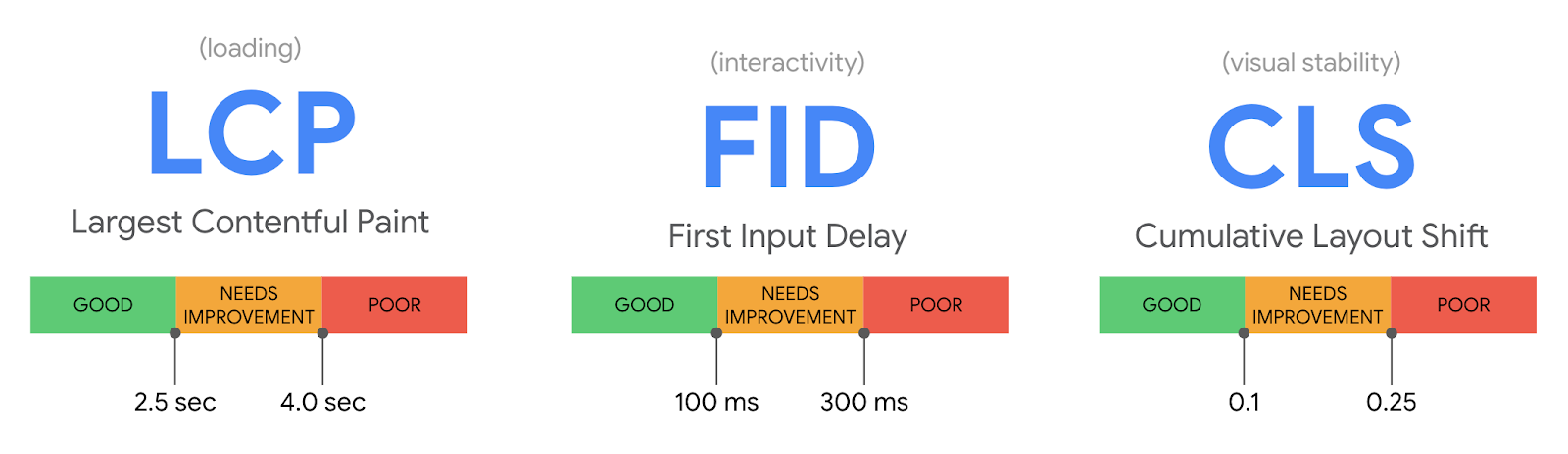

A handful of metrics influence PageSpeed scores. The three most important ones are called Core Web Vitals.

They include:

- Largest Contentful Paint (LCP): measures how fast the main content of your page loads

- First Input Delay (FID): measures how quickly your page is interactive

- Cumulative Layout Shift (CLS): measures how visually stable your page is
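To make these metrics more concrete, here is a small Python sketch that rates a measurement as "good," "needs improvement," or "poor." The thresholds are the commonly cited web.dev values. They are not stated in this article, so treat them as an assumption and check Google's current documentation before relying on them.

```python
# (upper bound for "good", upper bound for "needs improvement") per metric.
# Assumed from web.dev guidance; verify against current documentation.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "FID": (100, 300),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless score
}


def rate(metric, value):
    """Classify a Core Web Vitals measurement using the thresholds above."""
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value <= needs_improvement_max:
        return "needs improvement"
    return "poor"


print(rate("LCP", 2.1), rate("FID", 250), rate("CLS", 0.3))
```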

Image courtesy: web.dev

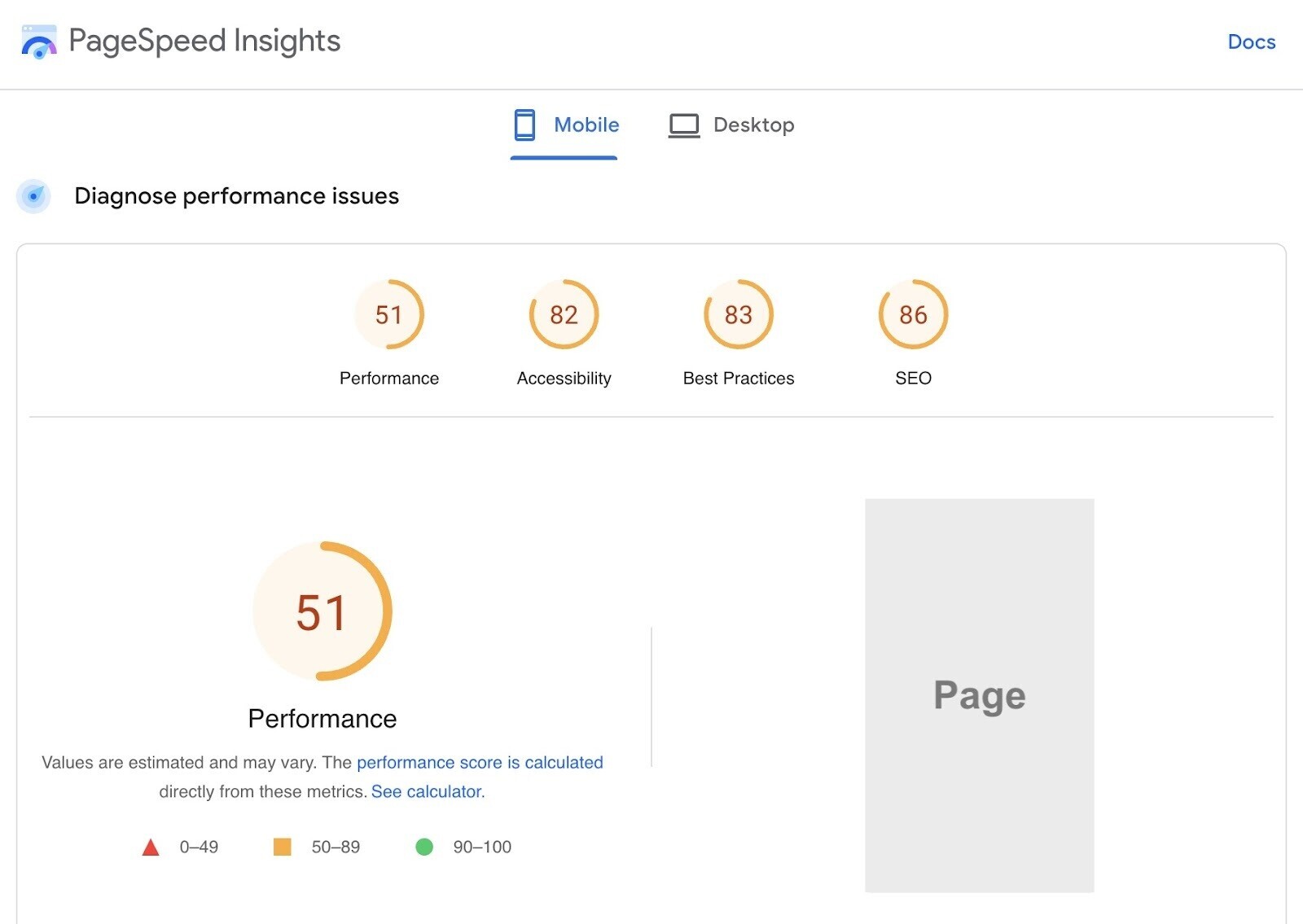

The tool provides details and opportunities to improve your page in four main areas:

- Performance

- Accessibility

- Best Practices

- SEO

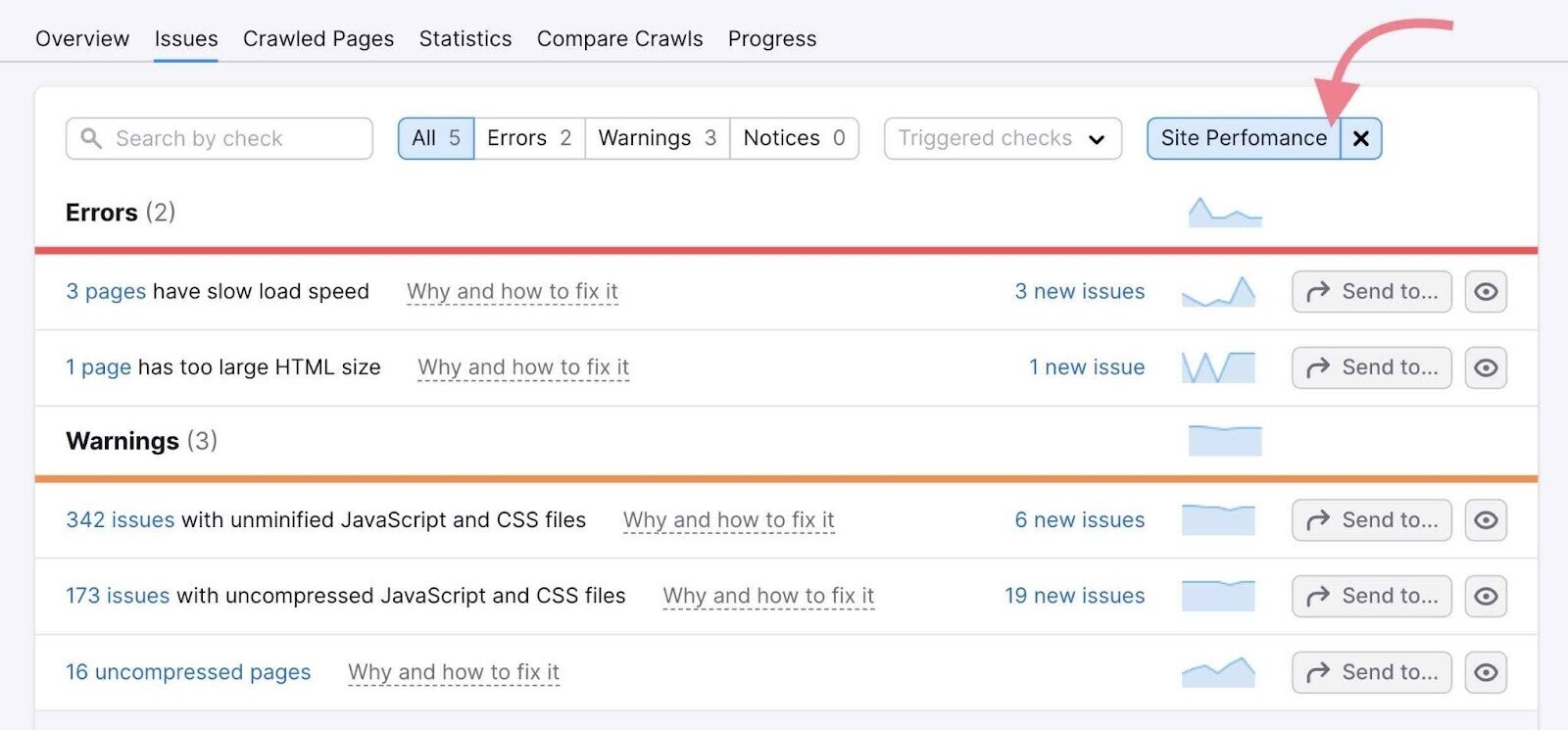

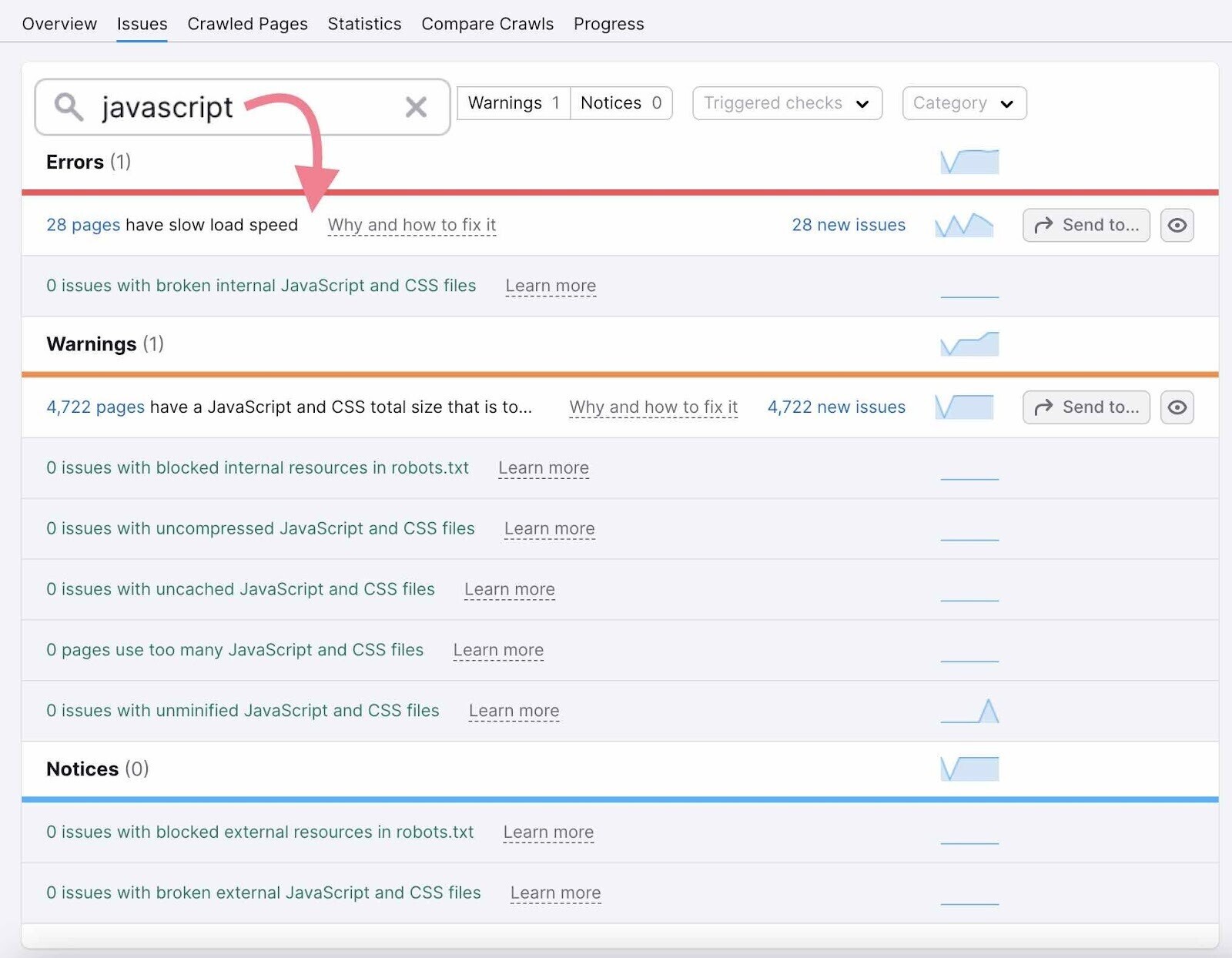

However, PageSpeed Insights can only analyze one URL at a time. To get the sitewide view, you can either use Google Search Console or a website audit tool like Semrush’s Site Audit.

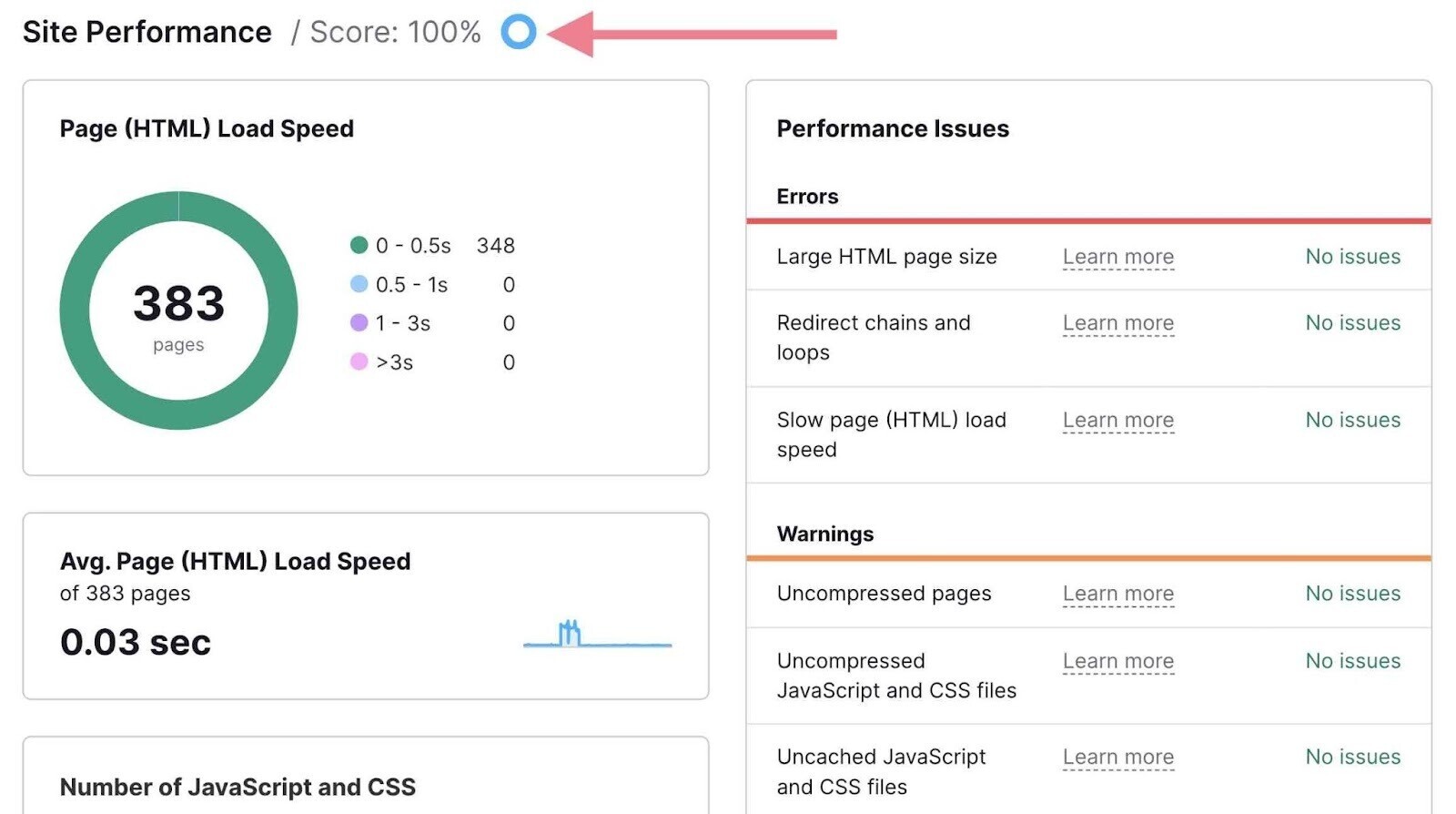

Let’s use Site Audit for this example. Head to the “Issues” tab and select the “Site Performance” category.

Here, you can see all the pages a specific issue affects—like slow load speed, for example.

There are also two detailed reports dedicated to performance—the “Site Performance” report and the “Core Web Vitals” report.

You can access both of them from the Site Audit overview.

The “Site Performance” report provides an additional “Site Performance Score,” a breakdown of your pages by their load speed, and other useful insights.

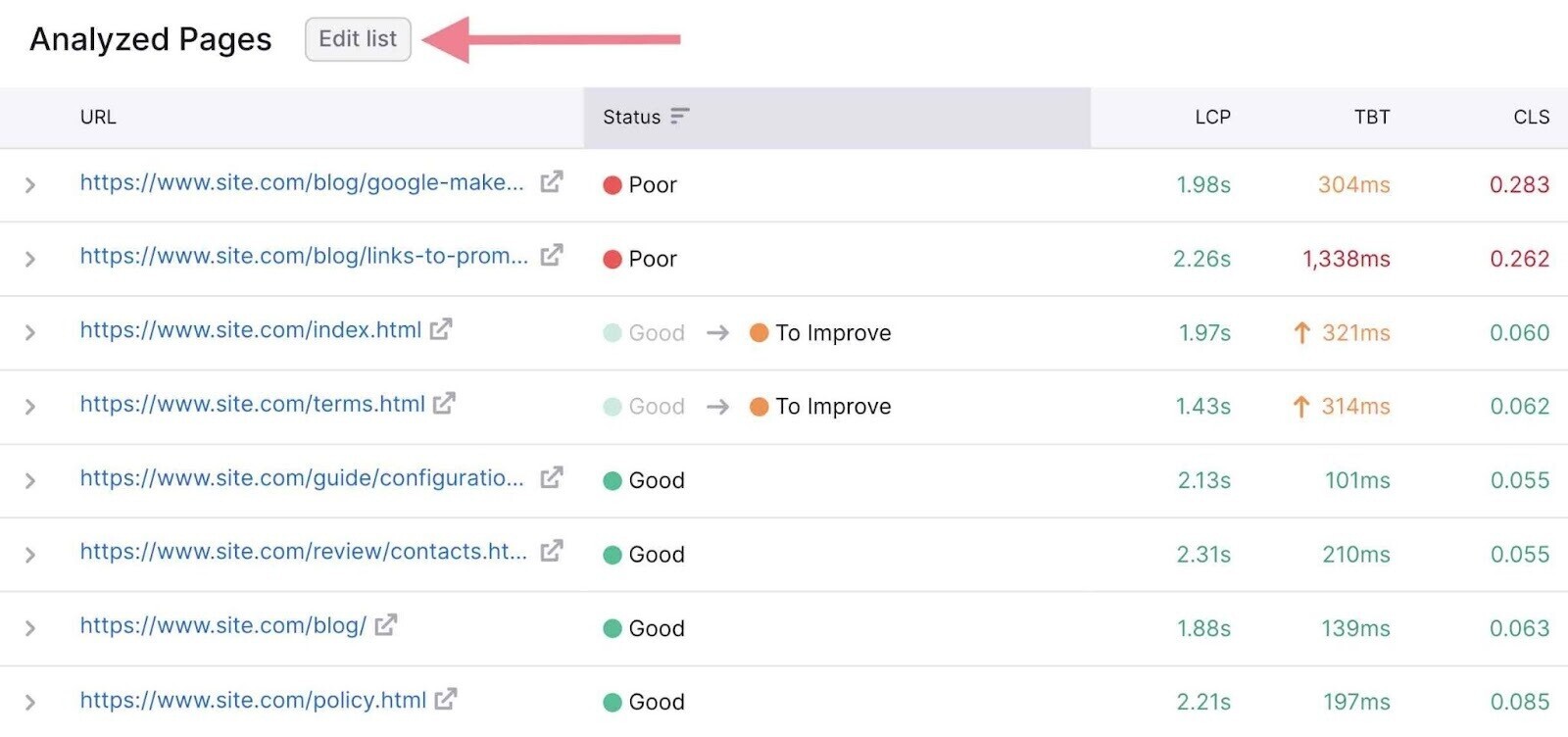

The Core Web Vitals report will break down your Core Web Vitals metrics based on 10 URLs. You can track your performance over time with the “Historical Data” graph.

You can also edit your list of analyzed pages so the report covers various types of pages on your site (e.g., a blog post, a landing page, and a product page).

Just click “Edit list” in the “Analyzed pages” section.

Further reading: Site performance is a broad topic and one of the most important aspects of technical SEO. To learn more about the topic, check out our page speed guide, as well as our detailed guide to Core Web Vitals.

6. Discover Mobile-Friendliness Issues

As of February 2023, more than half (59.4%) of web traffic happens on mobile devices.

And Google primarily indexes the mobile version of all websites rather than the desktop version. (This is known as mobile-first indexing.)

That’s why you need to ensure your website works perfectly on mobile devices.

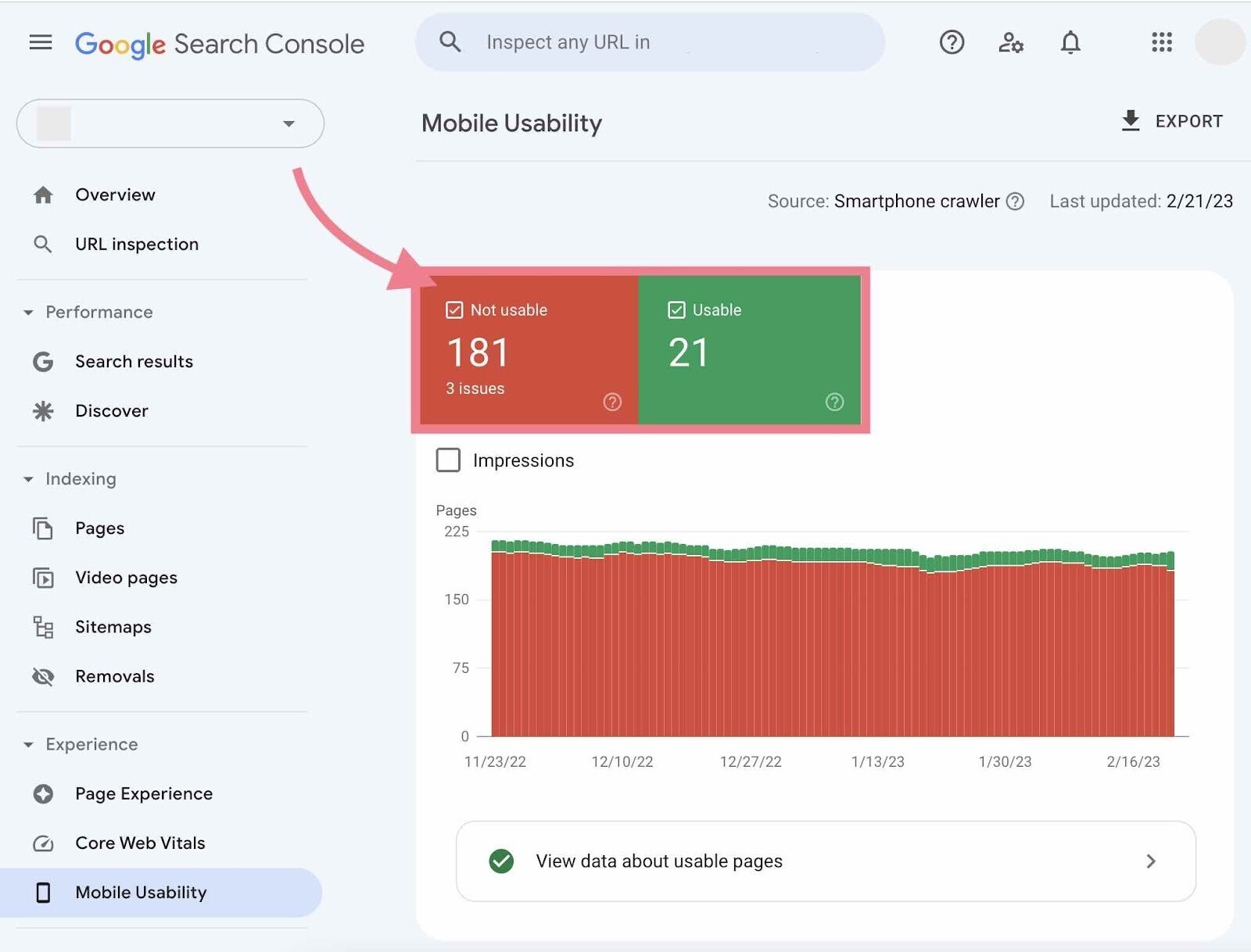

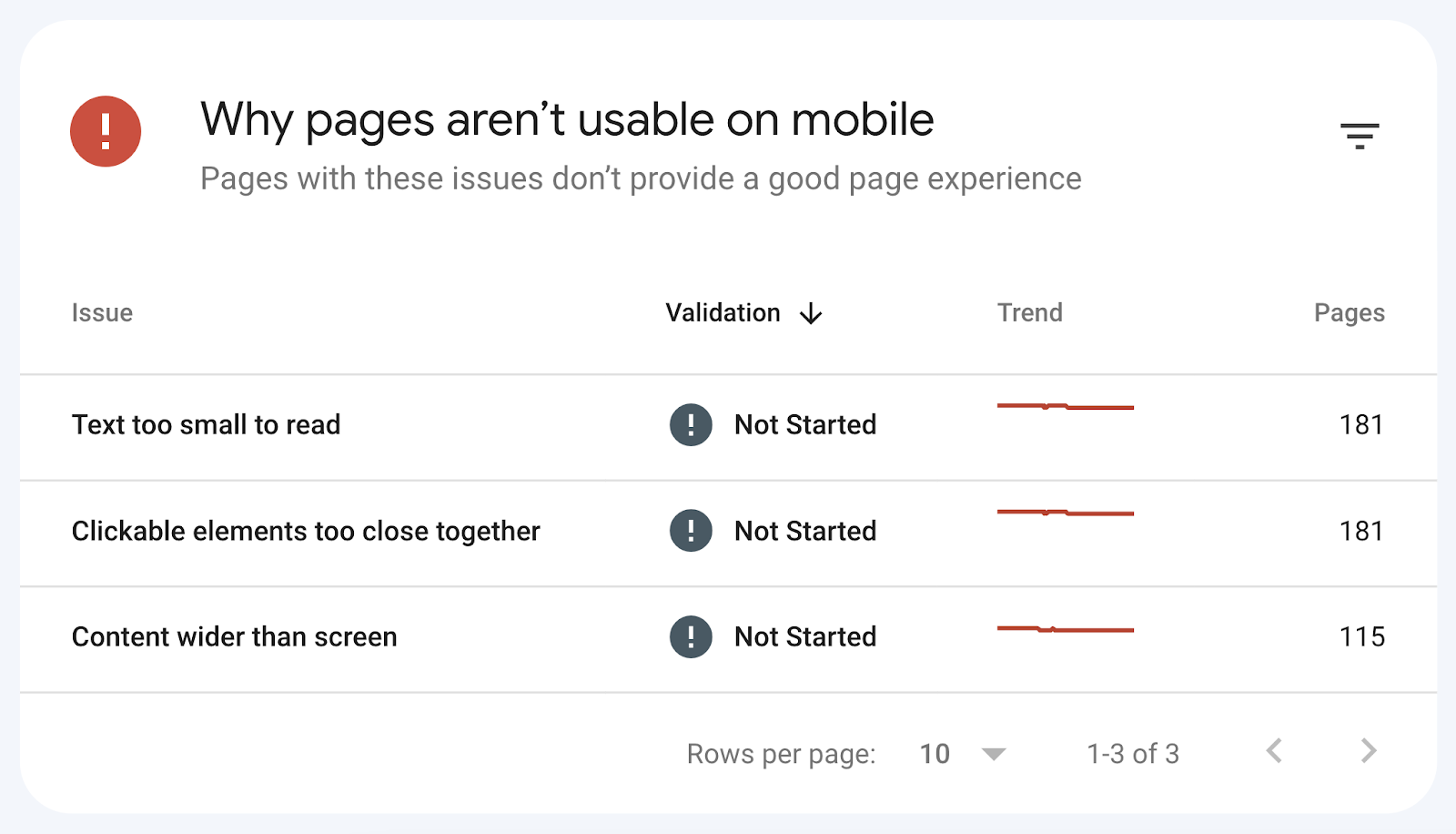

Google Search Console provides a helpful “Mobile Usability” report.

Here, you can see your pages divided into two simple categories—“Not Usable” and “Usable.”

Below, you’ll see a section called “Why pages aren’t usable on mobile.”

It lists all the detected issues.

After you click on a specific issue, you’ll see all the affected pages. As well as links to Google’s guidelines on how to fix the problem.

Tip: Want to quickly check mobile usability for one specific URL? You can use Google’s Mobile-Friendly Test.

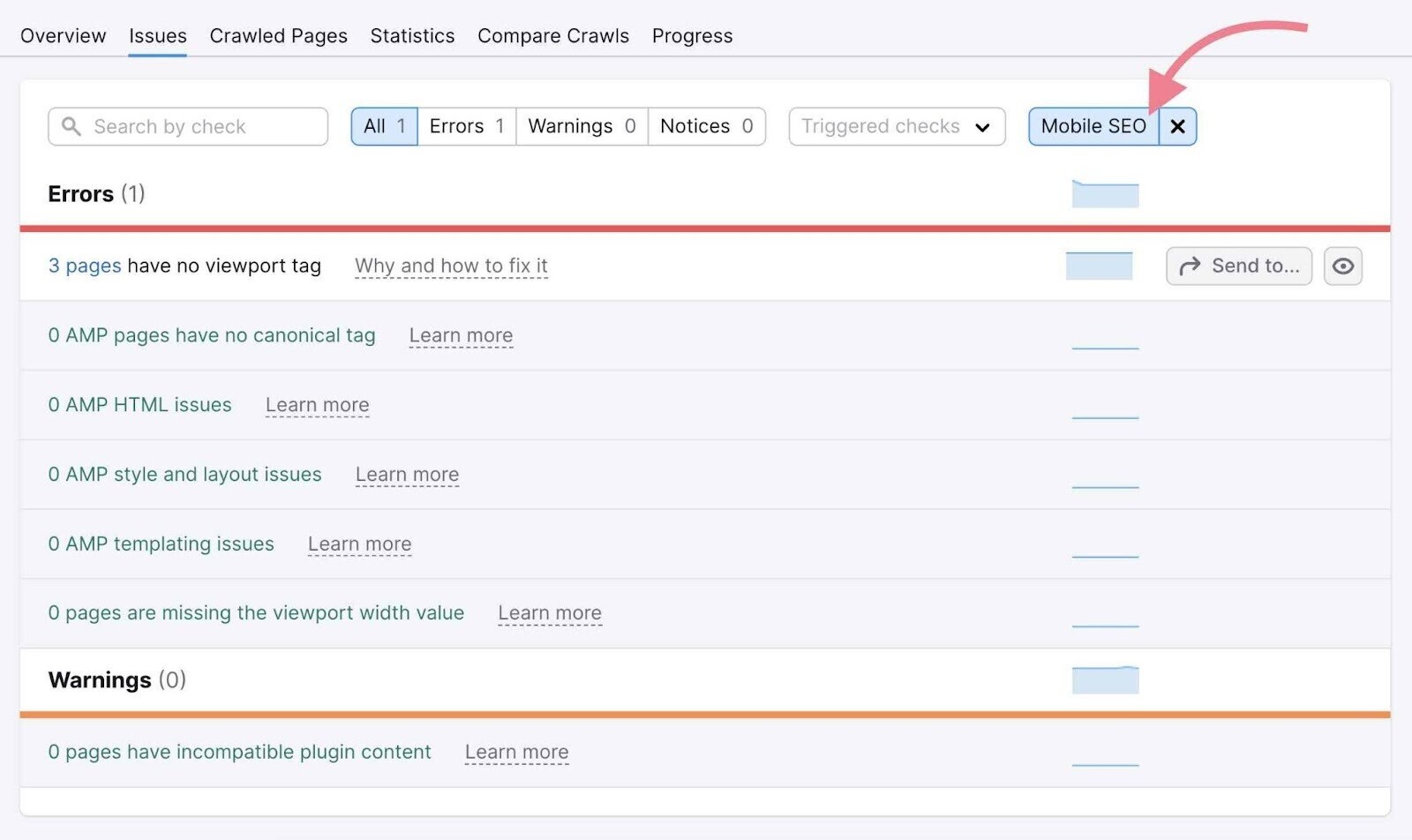

With Semrush, you can check two important aspects of mobile SEO—viewport tag and AMP pages.

Just select the “Mobile SEO” category in the “Issues” tab of the Site Audit tool.

A viewport meta tag is an HTML tag that helps you scale your page to different screen sizes. It automatically alters the page size based on the user’s device (when you have a responsive design).
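A quick way to confirm a page declares a viewport is to parse its HTML. The sketch below uses Python's built-in `html.parser`. The `ViewportFinder` class, the `viewport_content` helper, and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class ViewportFinder(HTMLParser):
    """Record the content attribute of the page's viewport meta tag, if any."""

    def __init__(self):
        super().__init__()
        self.viewport_content = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "viewport":
            self.viewport_content = attributes.get("content") or ""


def viewport_content(html):
    """Return the viewport tag's content string, or None when the tag is missing."""
    finder = ViewportFinder()
    finder.feed(html)
    return finder.viewport_content


# Hypothetical responsive page.
RESPONSIVE_PAGE = (
    '<html><head><meta name="viewport" '
    'content="width=device-width, initial-scale=1"></head><body></body></html>'
)

print(viewport_content(RESPONSIVE_PAGE))
```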

Another way to improve the site performance on mobile devices is to use Accelerated Mobile Pages (AMPs), which are stripped-down versions of your pages.

AMPs load quickly on mobile devices because Google runs them from its cache rather than sending requests to your server.

If you use AMP pages, it’s important to audit them regularly to make sure you’ve implemented them correctly to boost your mobile visibility.

Site Audit will test your AMP pages for various issues divided into three categories:

- AMP HTML issues

- AMP style and layout issues

- AMP templating issues

Further reading: Accelerated Mobile Pages

7. Spot and Fix Code Issues

Regardless of what a webpage looks like to human eyes, search engines only see it as a bunch of code.

So, it’s important to use proper syntax. And relevant tags and attributes that help search engines understand your site.

During your technical SEO audit, keep an eye on several different parts of your website code and markup. Namely, HTML (that includes various tags and attributes), JavaScript, and structured data.

Let’s take a closer look at some of them.

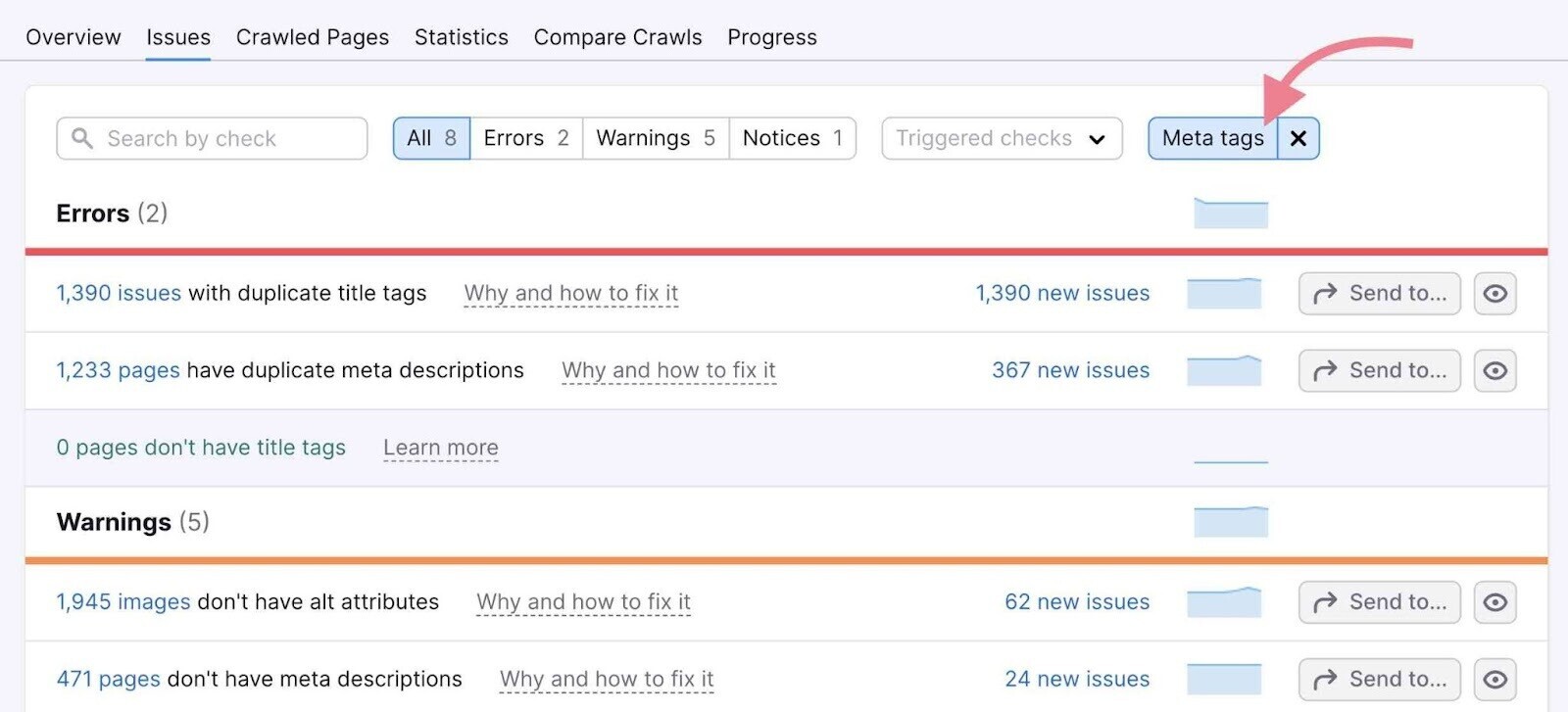

Meta Tag Issues

Meta tags are text snippets that provide search engine bots with additional data about a page’s content. These tags sit in the <head> section of your page’s HTML code.

We’ve already covered the robots meta tag (related to crawlability and indexability) and the viewport meta tag (related to mobile-friendliness).

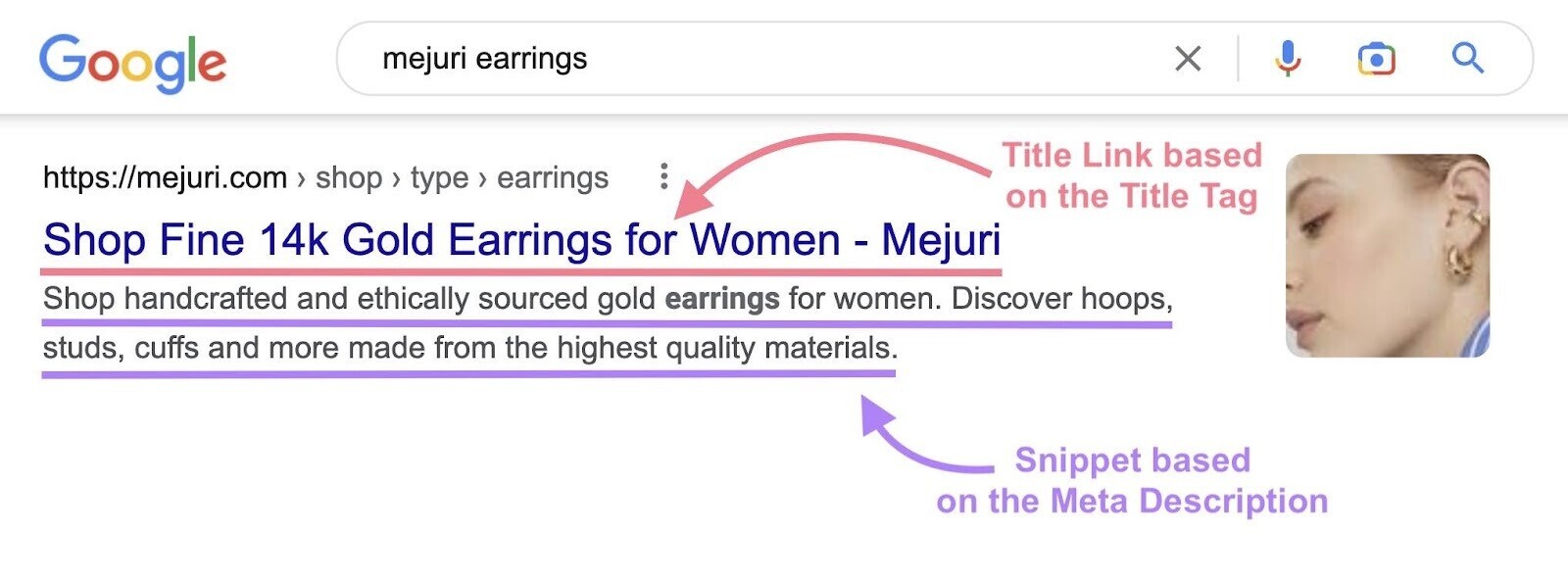

You should understand two other types of meta tags:

- Title tag: Indicates the title of a page. Search engines use title tags to form the clickable blue link in the search results. Read our guide to title tags to learn more.

- Meta description: A brief description of a page. Search engines use it to form the snippet of a page in the search results. While it’s not directly tied to Google’s ranking algorithm, a well-optimized meta description has other potential SEO benefits.

To see issues related to these meta tags in your Site Audit report, select the “Meta tags” category in the “Issues” tab.

Canonical Tag Issues

Canonical tags are used to point out the “canonical” (or “main”) copy of a page. They tell search engines which page needs to be indexed in case there are multiple pages with duplicate or similar content.

A canonical tag is placed in the <head> section of a page’s code and points to the “canonical” version.

It looks like this:

<link rel="canonical" href="https://www.domain.com/the-canonical-version-of-a-page/" />

A common canonicalization issue is that a page has either no canonical tag or multiple canonical tags. And, of course, you may have a broken canonical tag.
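The missing and multiple cases are easy to detect by counting canonical tags in a page's HTML. Here is a minimal Python sketch. The class, the `canonical_status` helper, and the sample pages are hypothetical, and it does not test whether the canonical URL is broken because that would require requesting the URL.

```python
from html.parser import HTMLParser


class CanonicalCollector(HTMLParser):
    """Collect the href of every <link rel="canonical"> tag on a page."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel_values:
            self.canonicals.append(attributes.get("href") or "")


def canonical_status(html):
    """Return 'missing', 'multiple', or 'single' based on the canonical tags found."""
    collector = CanonicalCollector()
    collector.feed(html)
    if not collector.canonicals:
        return "missing"
    if len(collector.canonicals) > 1:
        return "multiple"
    return "single"


# Hypothetical pages used for the example.
ONE_TAG = '<head><link rel="canonical" href="https://www.domain.com/page/" /></head>'
TWO_TAGS = ONE_TAG + '<link rel="canonical" href="https://www.domain.com/other/" />'

print(canonical_status(ONE_TAG), canonical_status(TWO_TAGS), canonical_status("<head></head>"))
```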

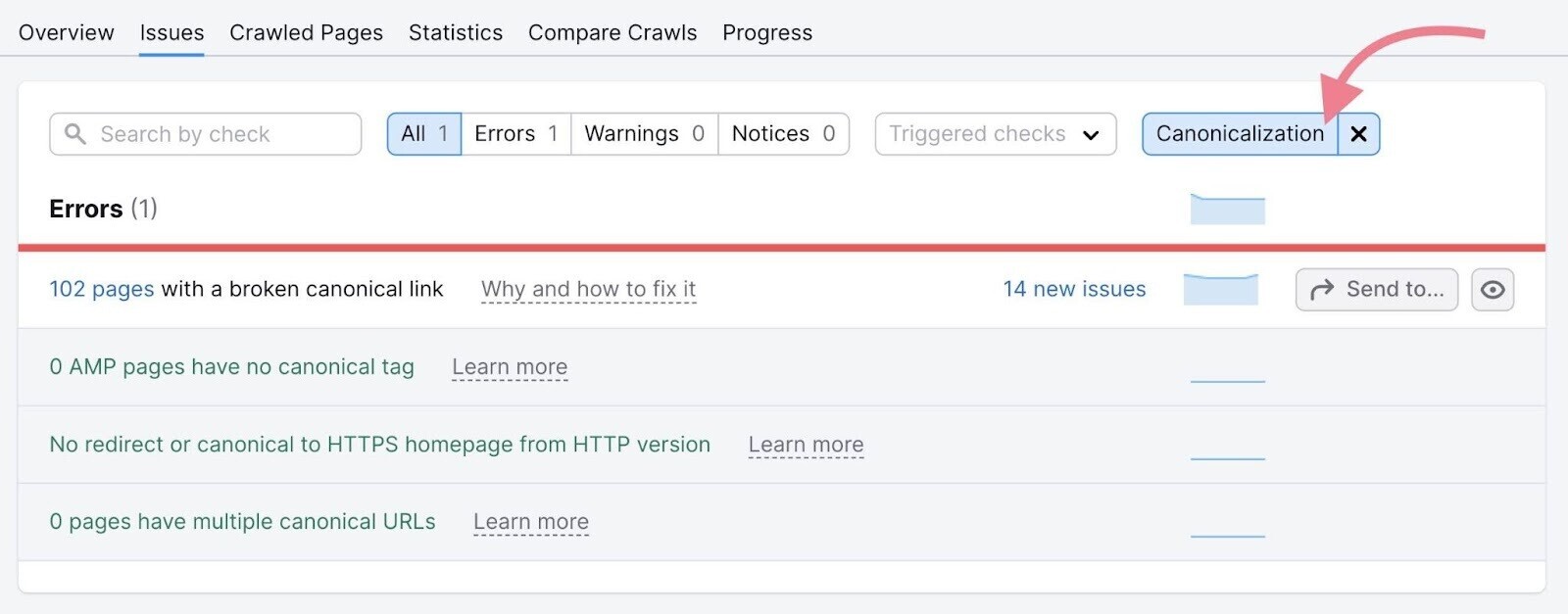

The Site Audit tool can detect all of these issues. If you want to only see the canonicalization issues, go to “Issues” and select the “Canonicalization” category in the top filter.

Further reading: Canonical URLs

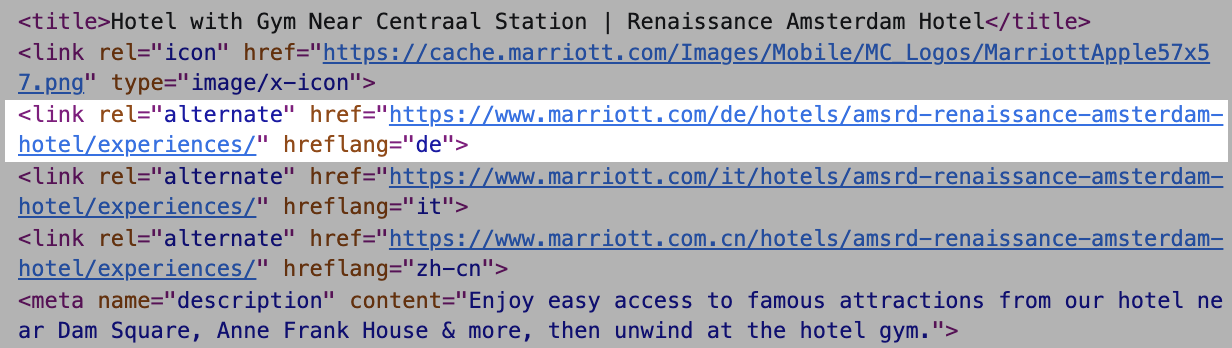

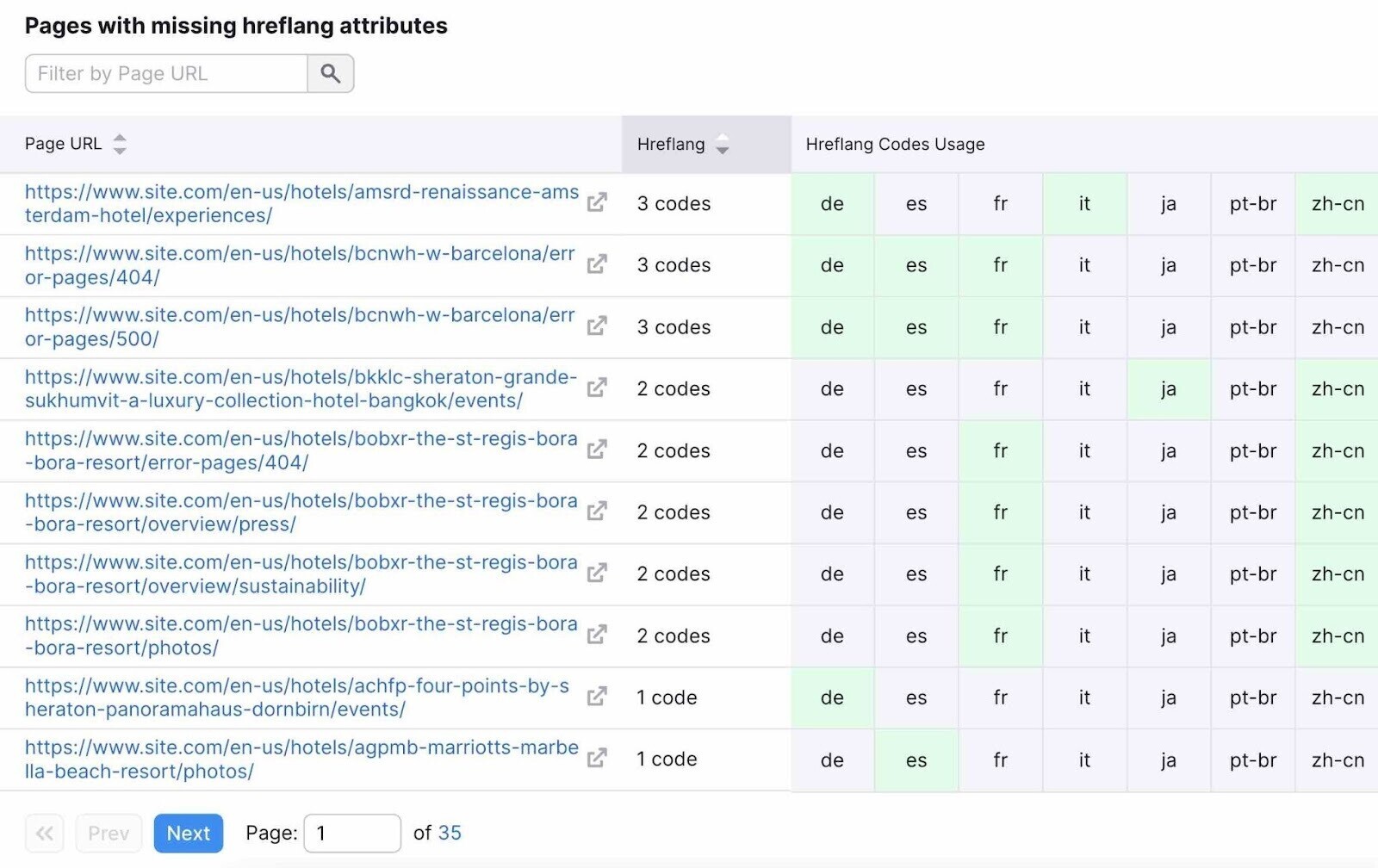

Hreflang Attribute Issues

The hreflang attribute denotes the target region and language of a page. It helps search engines serve the right variation of a page, based on the user’s location and language preferences.

If you need your site to reach audiences in more than one country, you need to use hreflang attributes in <link> tags.

That will look like this:

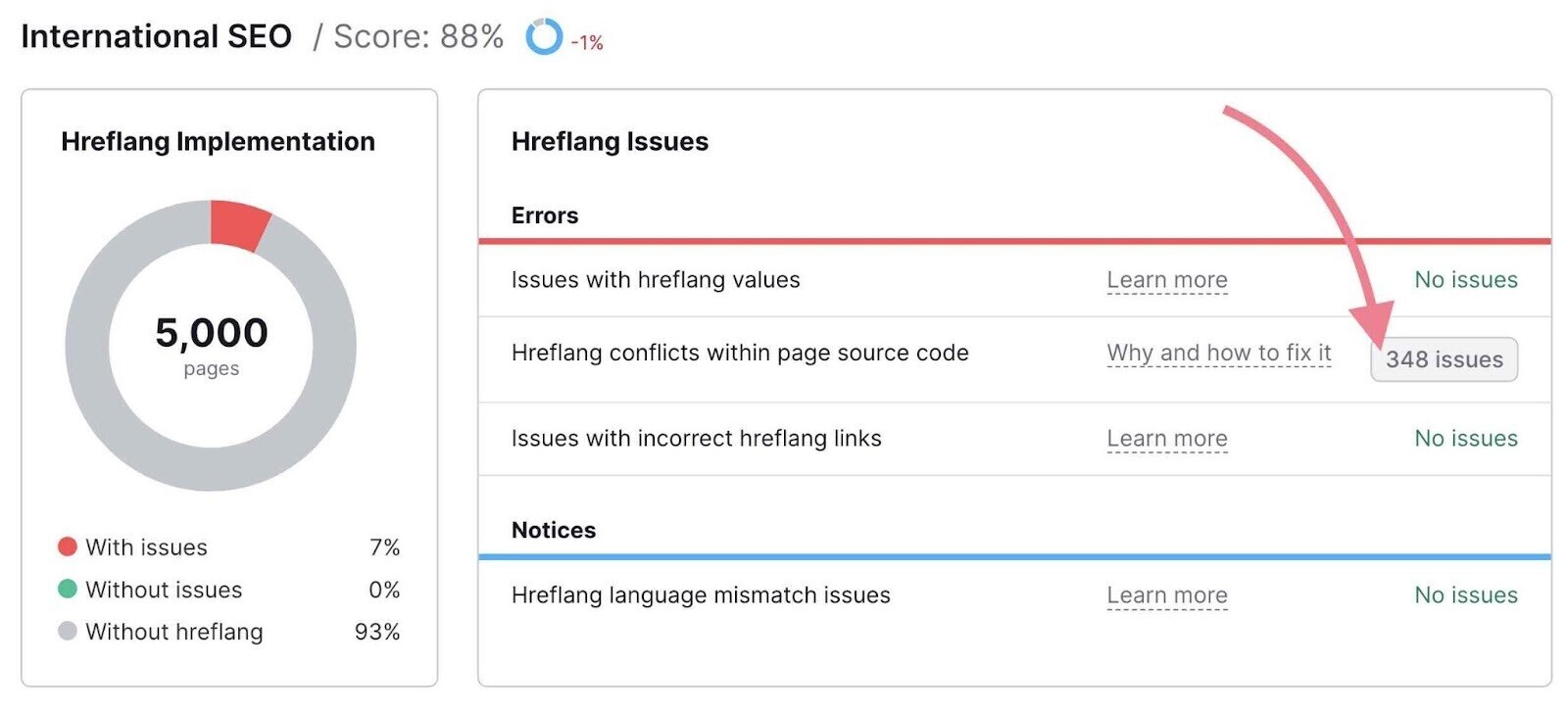
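If you manage many language versions, generating the annotations from one mapping helps keep them consistent across pages. The Python sketch below builds the `<link>` tags from a hypothetical dictionary of language-region codes and URLs. The codes, the URLs, and the `hreflang_tags` helper are illustrative assumptions.

```python
from html import escape


def hreflang_tags(versions):
    """Build one <link rel="alternate"> tag per language or region version of a page."""
    return [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}" />'
        for code, url in versions.items()
    ]


# Hypothetical versions of the same page.
VERSIONS = {
    "en-us": "https://domain.com/",
    "en-ca": "https://domain.com/ca/",
    "x-default": "https://domain.com/",
}

for tag in hreflang_tags(VERSIONS):
    print(tag)
```

Each version of the page should carry the full set of tags, including one that points to itself.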

To audit your hreflang annotations, go to the “International SEO” thematic report in Site Audit.

It will give you a comprehensive overview of all the hreflang issues on your site:

At the bottom of the report, you’ll also see a detailed list of pages with missing hreflang attributes, along with the total number of language versions your site has.

Further reading: Hreflang is one of the most complicated SEO topics. To learn more about hreflang attributes, check out our beginner’s guide to hreflang or this guide to auditing hreflang annotations by Aleyda Solis.

JavaScript Issues

JavaScript is a programming language used to create interactive elements on a page.

Search engines like Google use JavaScript files to render the page. If Google can’t get the files to render, it won’t index the page properly.

The Site Audit tool will detect any broken JavaScript files and flag the affected pages.

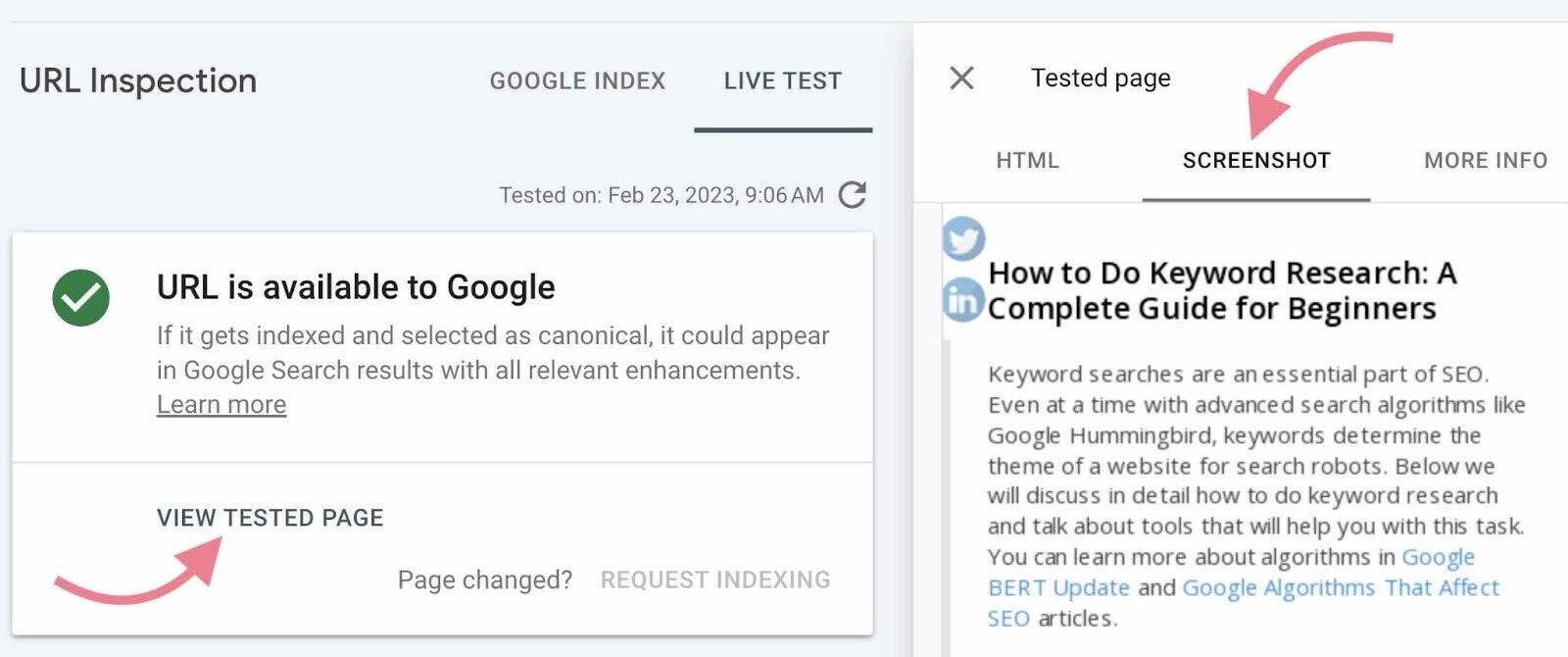

To check how Google renders a page that uses JavaScript, go to Google Search Console and use the “URL Inspection Tool.”

Enter your URL into the top search bar and hit enter.

Once the inspection is over, you can test the live version of the page by clicking the “Test Live URL” button in the top-right corner. The test may take a minute or two.

Now, you can see a screenshot of the page exactly as Google renders it. So you can check whether the search engine is reading the code correctly.

Just click the “View Tested Page” link and then the “Screenshot” tab.

Check for discrepancies and missing content to find out if anything is blocked, has an error, or times out.

Our JavaScript SEO guide can help you diagnose and fix JavaScript-specific problems.

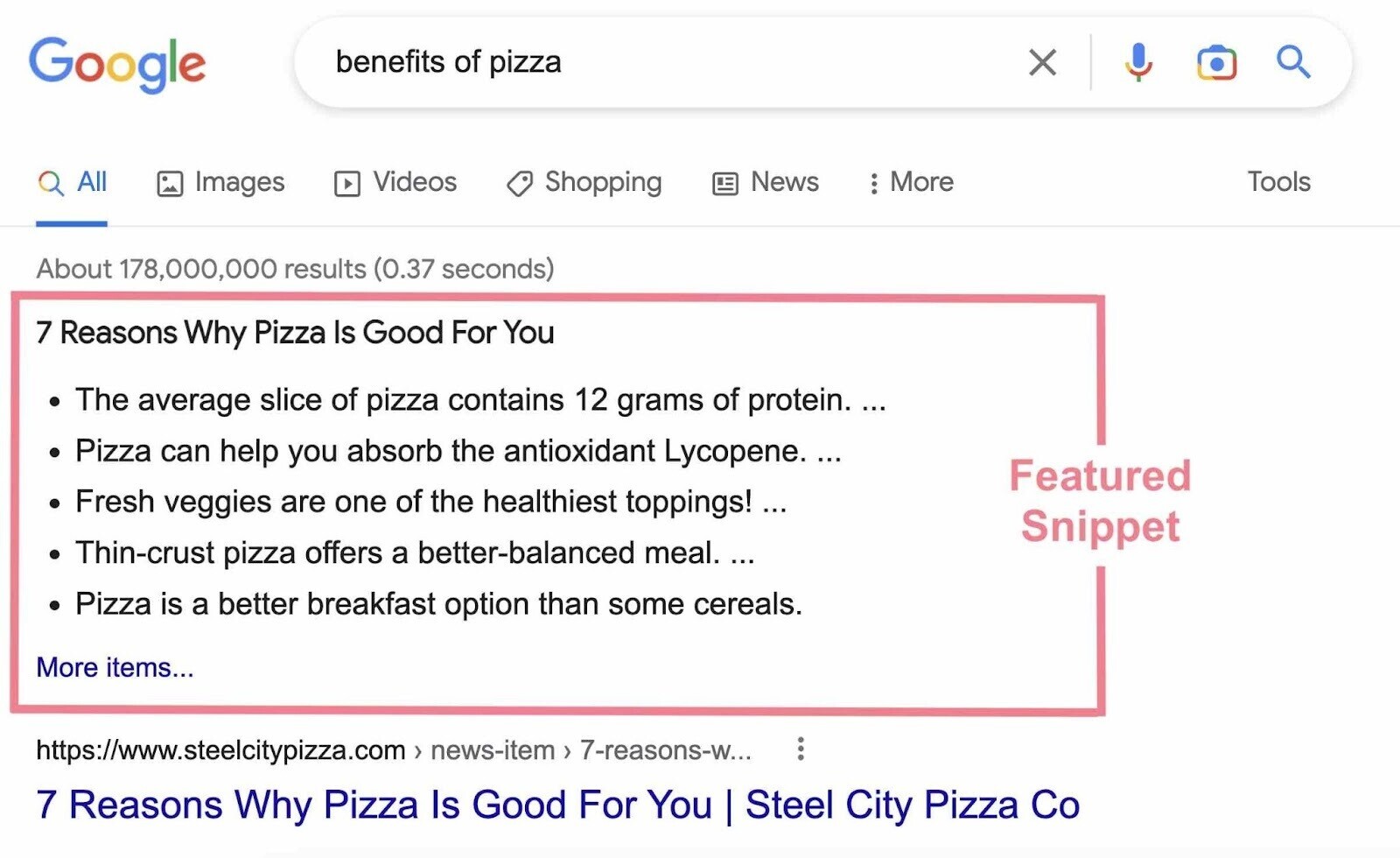

Structured Data Issues

Structured data is data organized in a specific code format (markup) that provides search engines with additional information about your content.

One of the most popular shared markup vocabularies among web developers is Schema.org.

Using schema can make it easier for search engines to index and categorize pages correctly. Plus, it can help you capture SERP features (also known as rich results).

SERP features are special types of search results that stand out from the rest of the results due to their different formats. Examples include the following:

- Featured snippets

- Reviews

- FAQs
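Structured data is often added as a JSON-LD script in the page's HTML. As a rough sketch, the Python below builds an "Article" snippet with the standard `json` module. The property values, the author name, and the `article_json_ld` helper are hypothetical, and Google may require additional properties for a given rich result.

```python
import json

SCRIPT_OPEN = '<script type="application/ld+json">'
SCRIPT_CLOSE = "</script>"


def article_json_ld(headline, author_name, date_published):
    """Return a JSON-LD script tag describing a Schema.org Article."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
    }
    return SCRIPT_OPEN + json.dumps(data, indent=2) + SCRIPT_CLOSE


# Hypothetical values for the example.
print(article_json_ld("What Is a Technical SEO Audit?", "Jane Doe", "2023-01-15"))
```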

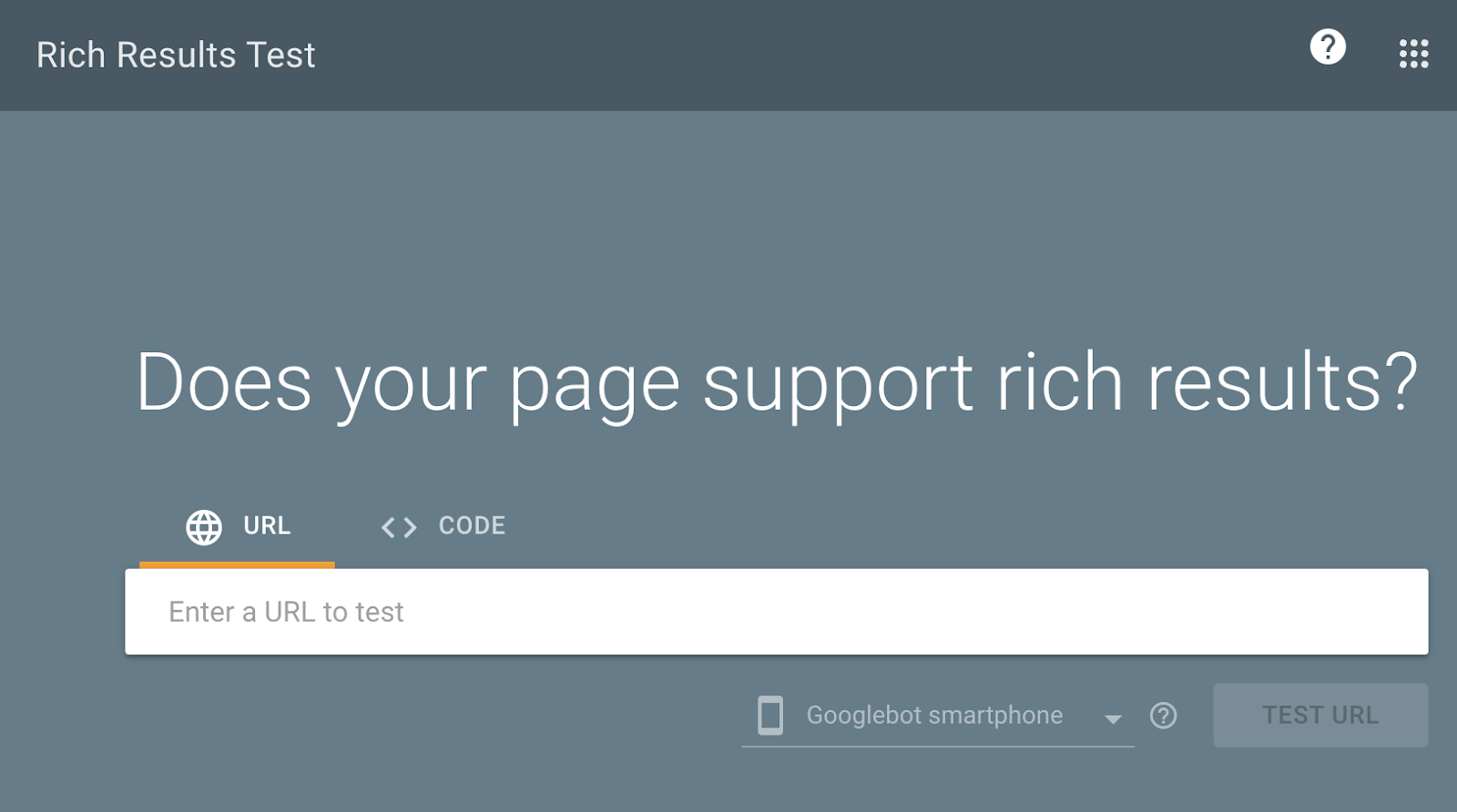

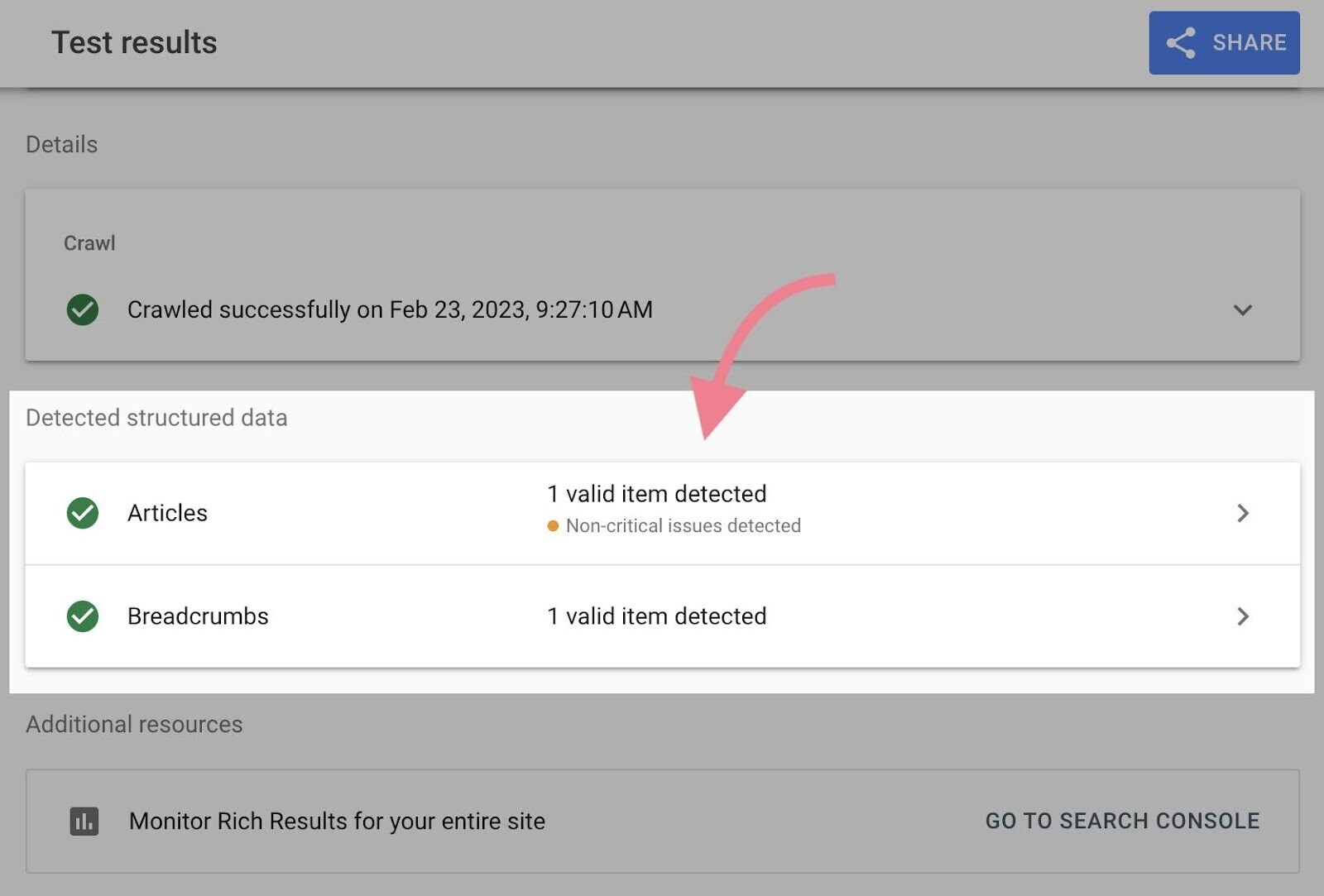

A great tool to check whether your page is eligible for rich results is Google’s Rich Results Test tool.

Simply enter your URL. You will see all the structured data items detected on your page.

For example, this blog post uses “Articles” and “Breadcrumbs” structured data.

The tool will list any issues next to specific structured data items, along with links to Google’s documentation on how to fix the issues.

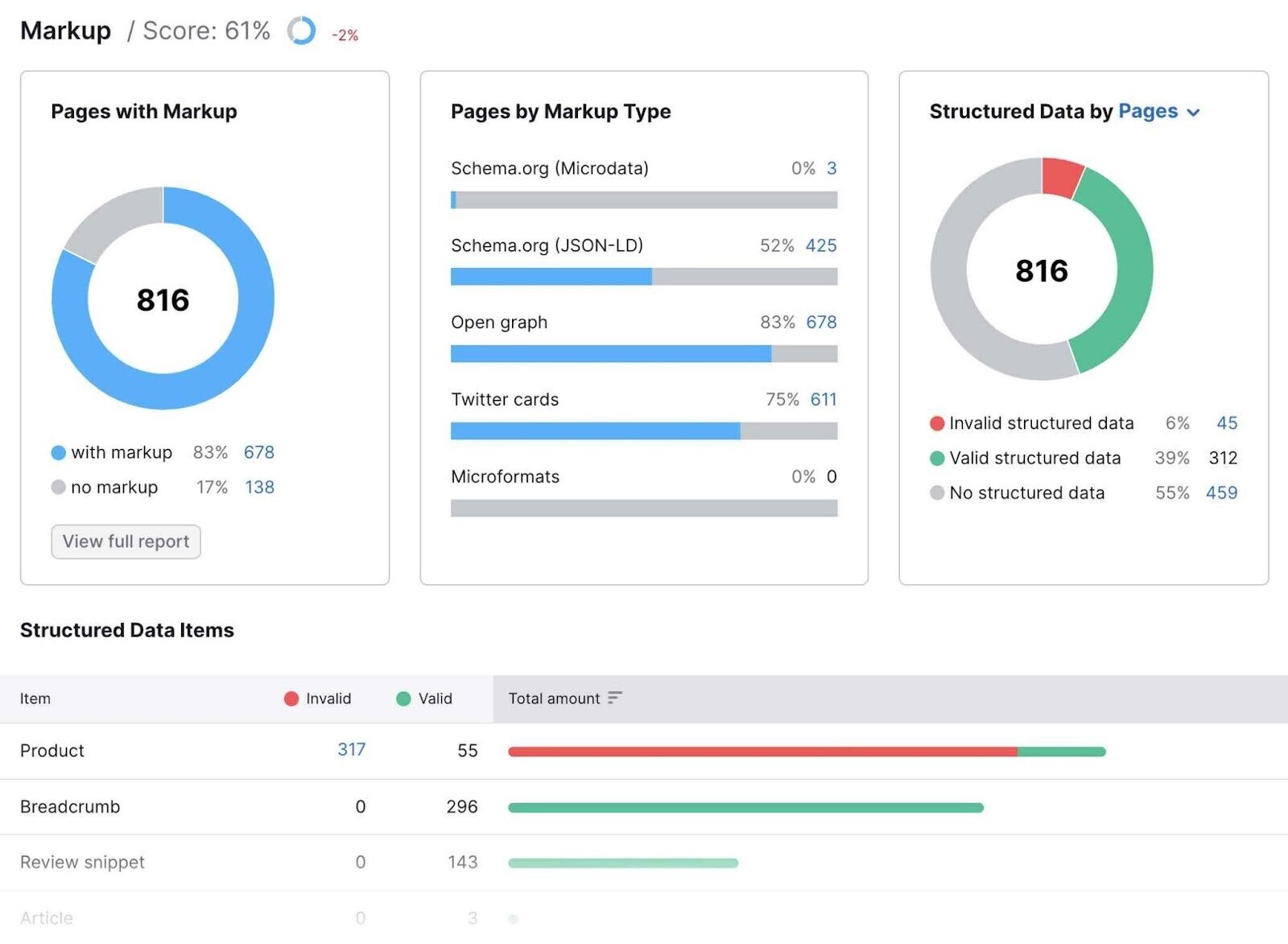

You can also use the “Markup” thematic report in the Site Audit tool to identify structured data issues.

Just click “View details” in the “Markup” box in your audit overview.

The report will provide an overview of all the structured data types your site uses. And a list of all the invalid items.

Further reading: Learn more about the “Markup” report and how to generate schema markup for your pages.

8. Check for and Fix HTTPS Issues

Your website should be using an HTTPS protocol (as opposed to HTTP, which is not encrypted).

This means your site runs on a secure server that uses a security certificate called an SSL certificate from a third-party vendor.

It confirms the site is legitimate and builds trust with users by showing a padlock next to the URL in the web browser:

What’s more, HTTPS is a confirmed Google ranking signal.

Implementing HTTPS is not difficult. But it can bring about some issues. Here’s how to address HTTPS issues during your technical SEO audit:

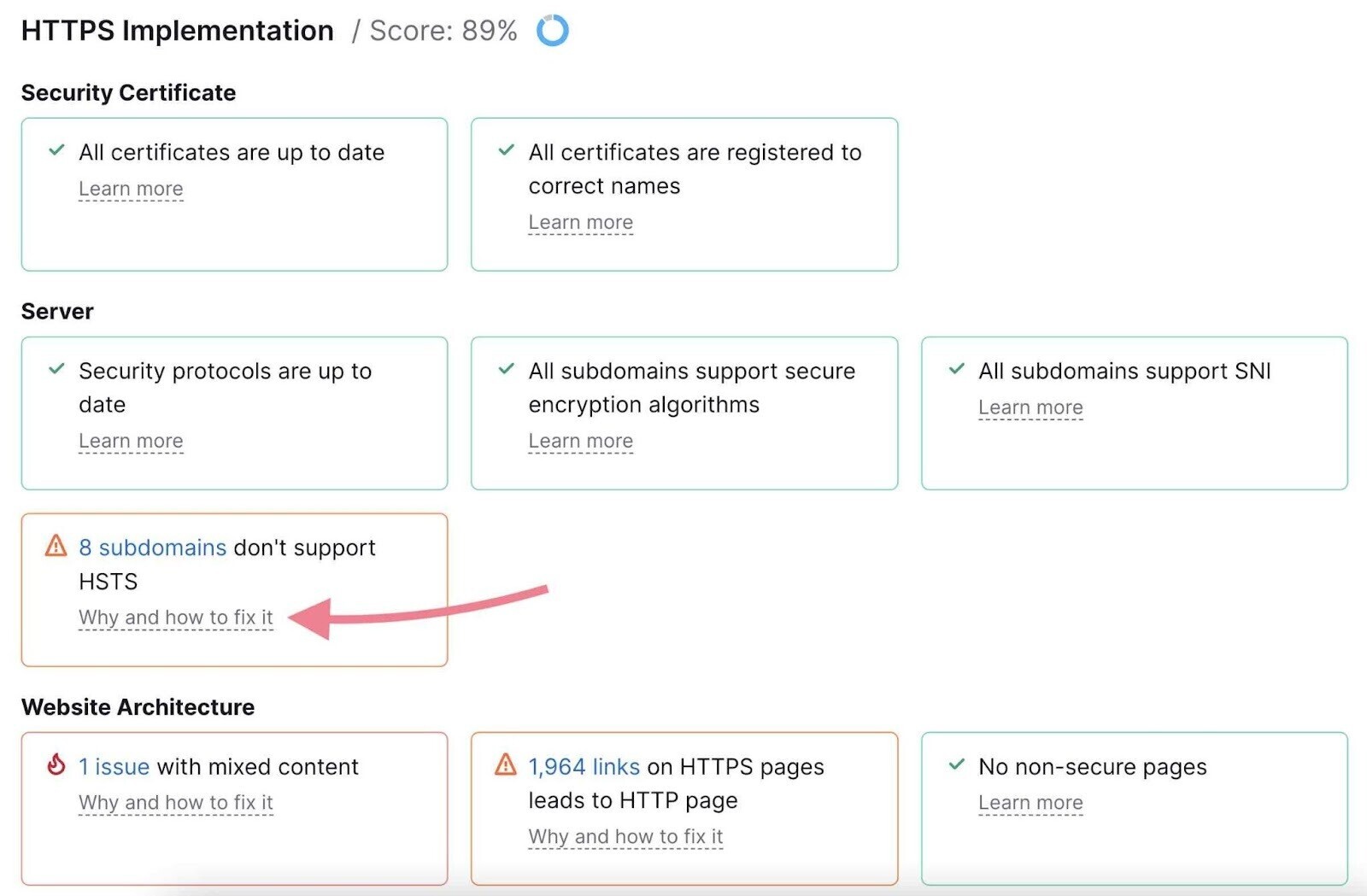

Open the “HTTPS” report in the Site Audit overview:

Here, you’ll find a list of all issues connected to HTTPS. If your site triggers an issue, you can see the affected URLs and advice on how to fix the problem.

Common issues include the following:

- Expired certificate: Lets you know if your security certificate needs to be renewed

- Old security protocol version: Informs you if your website is running an old SSL or TLS (Transport Layer Security) protocol

- No server name indication: Lets you know if your server supports SNI (Server Name Indication), which allows you to host multiple certificates at the same IP address to improve security

- Mixed content: Determines if your site contains any unsecure content, which can trigger a “not secure” warning in browsers
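Mixed content is the easiest of these to check yourself. The Python sketch below scans a page's HTML for images, scripts, iframes, and stylesheets loaded over plain HTTP. The tag list is a simplification, and the class, the `insecure_resources` helper, and the sample page are hypothetical.

```python
from html.parser import HTMLParser

# Tags whose URL attribute loads a resource into the page (simplified list).
RESOURCE_ATTRIBUTES = {"img": "src", "script": "src", "iframe": "src", "link": "href"}


class MixedContentFinder(HTMLParser):
    """Collect resources that an HTTPS page would load over plain HTTP."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        # Only stylesheet links load a resource; canonical links and similar do not.
        if tag == "link" and "stylesheet" not in (attributes.get("rel") or "").lower().split():
            return
        attribute = RESOURCE_ATTRIBUTES.get(tag)
        if attribute is None:
            return
        value = attributes.get(attribute) or ""
        if value.lower().startswith("http://"):
            self.insecure.append(value)


def insecure_resources(html):
    """Return the URLs of resources requested over HTTP."""
    finder = MixedContentFinder()
    finder.feed(html)
    return finder.insecure


# Hypothetical page served over HTTPS.
PAGE = """<html><head>
<link rel="stylesheet" href="https://domain.com/style.css">
<link rel="canonical" href="http://domain.com/page">
</head><body>
<img src="http://domain.com/logo.png">
<script src="https://domain.com/app.js"></script>
<a href="http://example.com/">External link</a>
</body></html>"""

print(insecure_resources(PAGE))
```

Ordinary hyperlinks to HTTP pages are ignored here because they do not load content into the page.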

9. Find and Fix Problematic Status Codes

HTTP status codes indicate a web server’s response to the browser’s request to load a page.

1XX statuses are informational. And 2XX statuses report a successful request. We don’t need to be concerned about them.

Instead, we’ll review the other three categories—3XX, 4XX, and 5XX statuses. And how to deal with them.

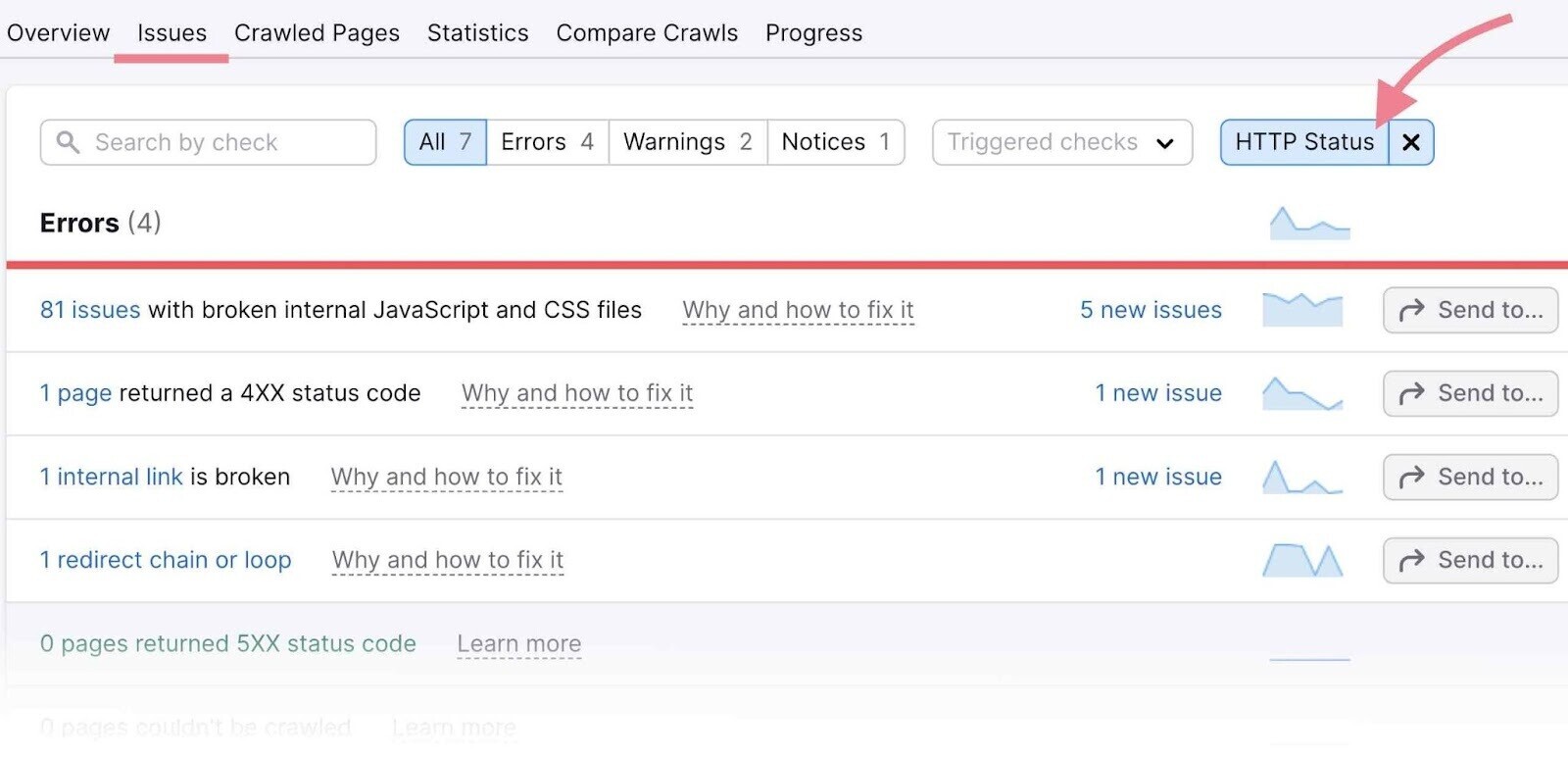

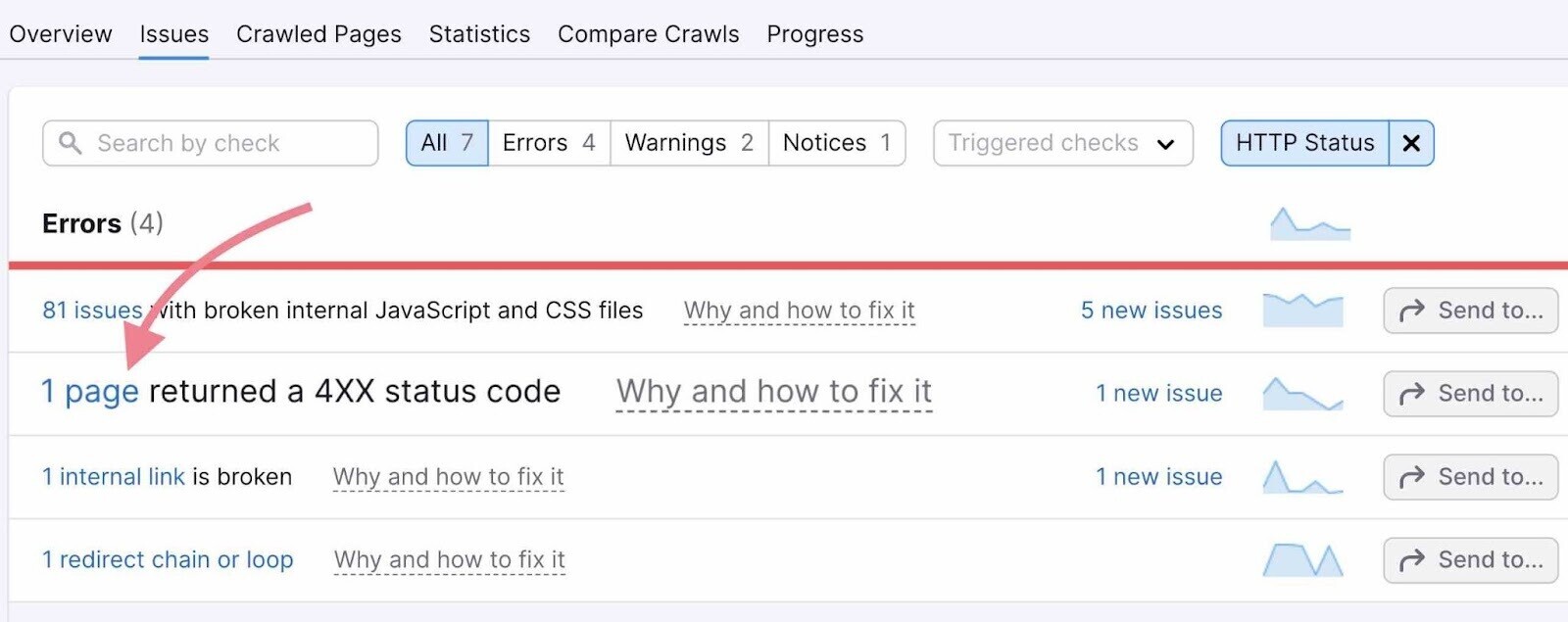

To begin, open the “Issues” tab in Site Audit and select the “HTTP Status” category in the top filter.

This will list all the issues and warnings related to HTTP statuses.

Click a specific issue to see the affected pages.

3XX Status Codes

3XX status codes indicate redirects—instances when users (and search engine crawlers) land on a page but are redirected to a new page.

Pages with 3XX status codes are not always problematic. However, you should always make sure they are used correctly in order to avoid any problems.

The Site Audit tool will detect all your redirects and flag any related issues.

The two most common redirect issues are as follows:

- Redirect chains: When multiple redirects exist between the original and final URL

- Redirect loops: When the original URL redirects to a second URL that redirects back to the original
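Both problems can be detected by following each redirect until it stops or repeats. Here is a minimal Python sketch that assumes you already have a map of source URLs to their redirect targets. The `REDIRECTS` data and the function names are hypothetical.

```python
def follow_redirects(start, redirects, max_hops=10):
    """Follow redirects from start. Return (path, is_loop)."""
    path = [start]
    seen = {start}
    current = start
    while current in redirects and len(path) <= max_hops:
        current = redirects[current]
        path.append(current)
        if current in seen:
            return path, True  # we came back to a URL we already visited
        seen.add(current)
    return path, False


def classify(start, redirects):
    """Label a URL as 'loop', 'chain', 'single redirect', or 'no redirect'."""
    path, is_loop = follow_redirects(start, redirects)
    if is_loop:
        return "loop"
    hops = len(path) - 1
    if hops > 1:
        return "chain"
    return "single redirect" if hops == 1 else "no redirect"


# Hypothetical redirect map: source URL -> target URL.
REDIRECTS = {
    "/old": "/newer",
    "/newer": "/newest",
    "/a": "/b",
    "/b": "/a",
    "/promo": "/sale",
}

for url in ("/old", "/a", "/promo", "/sale"):
    print(url, classify(url, REDIRECTS))
```

To fix a chain, point the first URL straight at the final destination. To fix a loop, remove or retarget one of the redirects in the cycle.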

Audit your redirects and follow the instructions provided within Site Audit to fix any errors.

Further reading: Redirects

4XX Status Codes

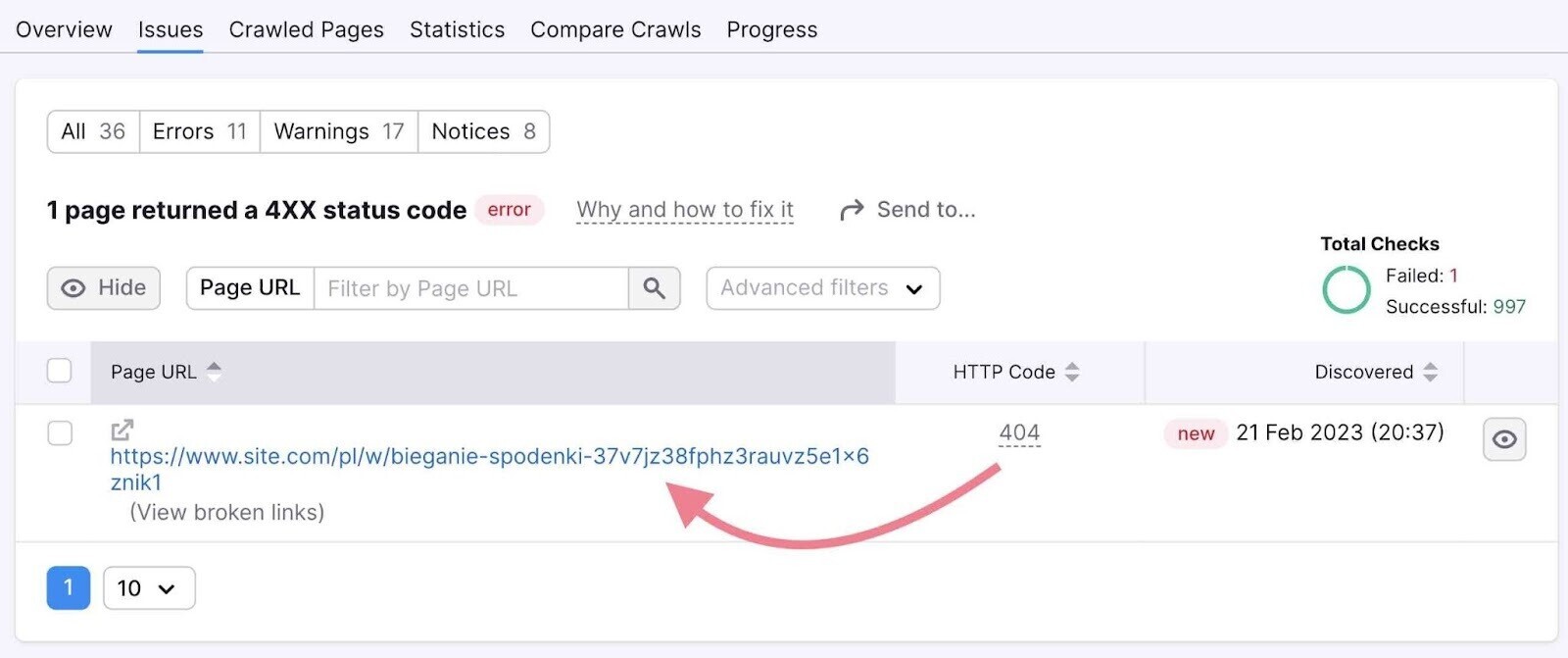

4XX errors indicate that a requested page can’t be accessed. The most common 4XX error is the 404 error: Page not found.

If Site Audit finds pages with a 4XX status, you’ll need to remove all the internal links pointing to those pages.

First, open the specific issue by clicking on the corresponding number of the pages:

You’ll get a list of all affected URLs:

Click “View broken links” in each line to see internal links that point to the 4XX pages listed in the report.

Remove the internal links pointing to the 4XX pages. Or replace the links with relevant alternatives.

5XX Status Codes

5XX errors are on the server side. They indicate that the server could not perform the request. These errors can happen for many reasons. Some common ones are as follows:

- The server being temporarily down or unavailable

- Incorrect server configuration

- Server overload

You’ll need to investigate the reasons why these errors occurred and fix them if possible.

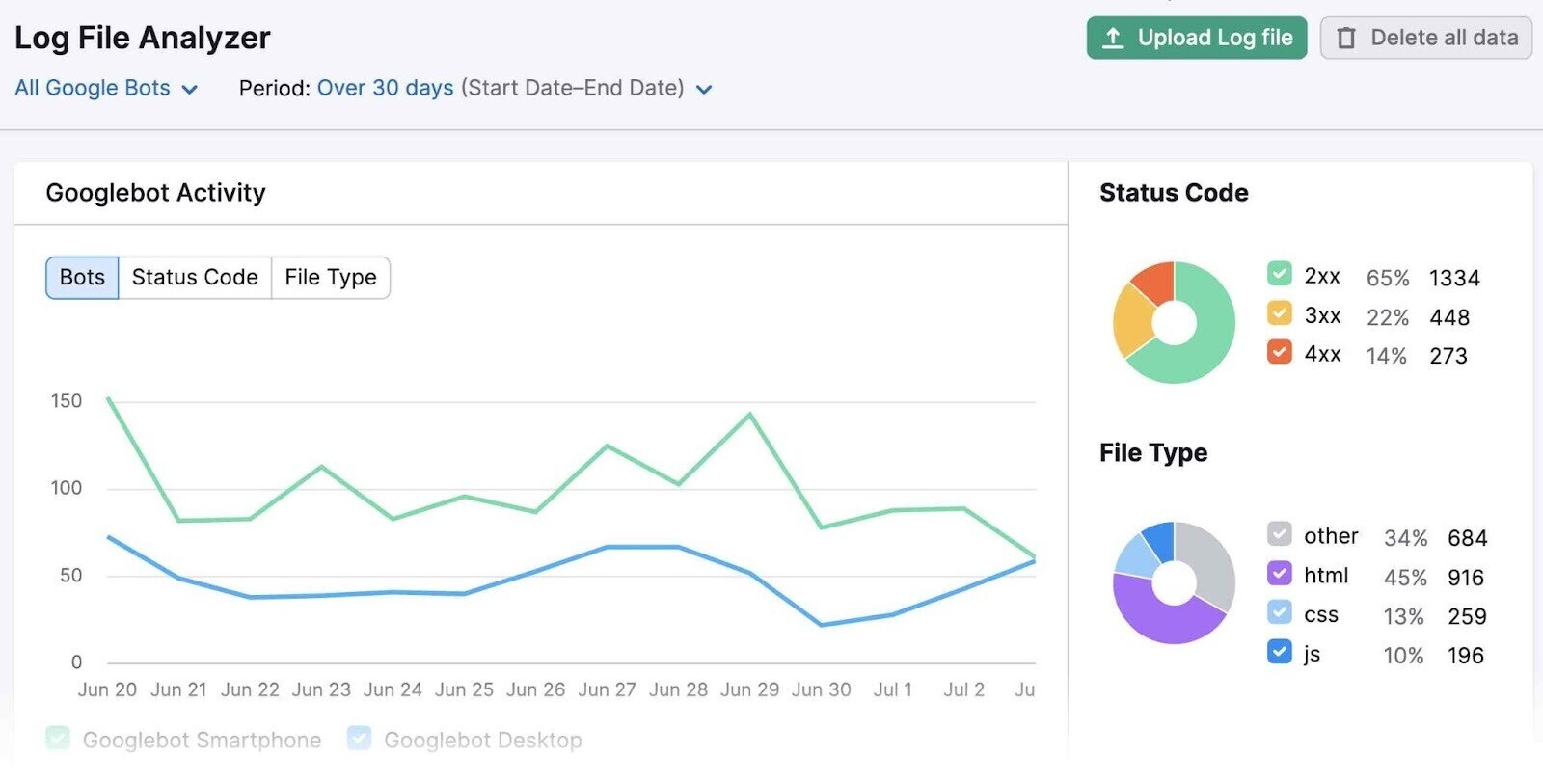

10. Perform Log File Analysis

Your website’s log file records information about every user and bot that visits your site.

Log file analysis helps you look at your website from a web crawler’s point of view to understand what happens when a search engine crawls your site.

It would be very impractical to analyze the log file manually. So we recommend using a tool like Semrush’s Log File Analyzer.

You’ll need a copy of your access log file to begin your analysis. Access it on your server’s file manager in the control panel or via an FTP (File Transfer Protocol) client.

Then, upload the file to the tool and start the analysis. The tool will analyze Googlebot activity on your site and provide a report. It will look like this:

It can help you answer several questions about your website, including the following:

- Are errors preventing my website from being crawled fully?

- Which pages are crawled the most?

- Which pages are not being crawled?

- Do structural issues affect the accessibility of some pages?

- How efficiently is my crawl budget being spent?

Answering these questions can help you refine your SEO strategy or resolve issues with the indexing or crawling of your webpages.

For example, if Log File Analyzer identifies errors that prevent Googlebot from fully crawling your website, you or a developer can take steps to resolve the errors.
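To give a sense of what such an analysis involves, the Python sketch below counts Googlebot requests per URL and tallies the ones that returned 4XX or 5XX statuses. It assumes your server writes the common "combined" access log format. The sample lines are made up, and matching on the user-agent string alone does not verify that a request really came from Google.

```python
import re
from collections import Counter

# Pattern for the "combined" access log format (an assumption about your server).
LOG_PATTERN = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"$'
)


def googlebot_hits(lines):
    """Return (hits per URL, error responses per URL) for requests claiming to be Googlebot."""
    hits = Counter()
    errors = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line.strip())
        if not match or "Googlebot" not in match["agent"]:
            continue
        hits[match["path"]] += 1
        if match["status"].startswith(("4", "5")):
            errors[match["path"]] += 1
    return hits, errors


GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

# Hypothetical log lines for the example.
SAMPLE_LOG = [
    f'66.249.66.1 - - [10/Feb/2023:13:55:36 +0000] "GET /blog/ HTTP/1.1" 200 5120 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Feb/2023:13:56:02 +0000] "GET /blog/ HTTP/1.1" 200 5120 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Feb/2023:13:57:10 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "{GOOGLEBOT}"',
    '203.0.113.7 - - [10/Feb/2023:13:58:44 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0"',
]

hits, errors = googlebot_hits(SAMPLE_LOG)
print(hits.most_common())
print(dict(errors))
```

Pages that never show up in `hits` are candidates for the "not being crawled" question, and pages in `errors` point to problems worth fixing first.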

To learn more about the tool, read our Log File Analyzer guide.

Wrapping Up

A thorough technical SEO audit can have huge effects on your website’s search engine performance.

All you have to do is get started:

Use our Site Audit tool to identify and fix issues. And watch your performance improve over time.

This post was updated in 2023. Excerpts from the original article by A.J. Ghergich may remain.
